Two Losses Make a Win: How a Physicist Surprised Mathematicians

Share

- Details

- Transcript

- Audio

- Downloads

- Extra Reading

One of the most extraordinary pieces of new mathematics was a discovery by the Spanish physicist Juan Parrondo, who was building a computer simulation of an effect in quantum theory. One has two simple coin-tossing games, each of which favours one's opponent. Suppose at each turn one chooses randomly which losing game to play. Astonishingly, this random switch between two unfavourable games can produce a favourable one. (For those who find this hard to believe, I will provide a demonstration!)

Parrondo's Paradox has been used to explain the behaviour of viruses, and offers investors the potential to reduce the risk in their portfolios.

Download Transcript

16 March 2015

Two Losses Make a Win: How a Physicist Surprised Mathematicians

Professor Tony Mann

Welcome to Gresham College, and as always a special welcome to anyone who is here for the very first time: we hope that you will make many more visits to this wonderful and historic institution.

This is my third and final lecture this term. In January I talked about what I regard as entertaining and profound paradoxes in logic, while last month my theme was a paradox in game theory, the Prisoners’ Dilemma, and its recent use in the study of co-operation. Today we are going to look at other paradoxes arising from games, and in particular at an astonishing example due to the Spanish physicist Juan Parrondo. The “games” we discuss tonight are generally games like chess, or card or coin-tossing games, rather than the more general “games” that last month’s talk covered. Tonight’s are not the kind of games analysed by John von Neumann and Oskar Morgenstern in their classic book Theory of Games and Economic Behaviour, which I mentioned last month, but they are mathematical games in the sense used by the pioneering mathematician David Blackwell, who wrote an influential book on such games in 1954.

But I thought I would begin with a topic which came up in questions at my February lecture. In a game, is it always best to play the best move possible? Can there be an advantage in doing something which is demonstrably not the best option? I’m not talking here about bluffs in games like poker, where misleading the other players is an essential part of the strategy, but about games in which there might appear to be no possible advantage in choosing an inferior move.

In some sports, mistakes can be productive. In cricket the batsman who has survived a tight spell of accurate bowling may hole out to the unexpected loose delivery. In rugby the poor pass which invites an interception attempt may draw a defender out of position and open the line to the attackers. And only last week, the former Australian cricket head coach Tim Neilsen said, “The best arm ball that any spinner can bowl is the one they try to spin, but it doesn't actually spin. If they don't know what is going to happen, how will the batsman know?” But in a fully determined game like chess or bridge, does it ever pay not to make what is objectively the best possible move?

As one of the audience at my last lecture said in asking a question, there may be occasions when chess players choose moves for psychological reasons. One might choose a rare opening in order to disconcert one’s opponent or to make them worry that you have a tactical innovation up your sleeve. On the other hand, the great chess player José Raúl Capablanca said, “When you sit down to play a game you should think only about the position, but not about the opponent. Whether chess is regarded as a science, or an art, or a sport, all the same psychology bears no relation to it and only stands in the way of real chess.” I recall (though I have been trace to find the quotation) that a great chess champion once criticised an opponent who he had just beaten. The opponent had played what he had mistakenly thought to be a very powerful move: the champion said that even if his opponent could not see anything wrong with the move, he should have trusted the strength of the stronger player. If the move had been as good as it looked, then the champion would never have allowed him the opportunity to play it, and he should have rejected the move. Stories like these seem to show that there is scope for bluff in chess, and that it is perhaps not always best to play the best available move, despite Capablanca’s views (and the very fact that Capablanca made that comment suggests that not everyone agreed with him!)

Sometimes one might wish to make a bad move to hide one’s true ability from one’s opponents – the dubious tactic of playing badly at low odds and then increasing the stake to win a large amount of money at the end of the evening is an example, even if it is sharp practice. And sometimes one might wish to sacrifice a game now to establish for the future that people cannot make assumptions about your plays.

For example, I was once playing a multi-player game with three students. One of the students was one move away from winning and it needed one of the other three to make a defensive move to stop them. I was the third of these three to play, and naturally the other two both made positive moves, ignoring the threat and enhancing their positions, leaving it to me to make the block (and to waste a move in doing so when I could have been advancing my own piece). I thought that if I blocked, I would lose eventually anyway because of the tempo I had lost in blocking, so I didn’t block, allowing the first player immediate victory. I hoped to reap a reward in future games because the other players wouldn’t dare assume that I would play logically in future, and that next time a similar situation arose, my reputation would mean that opponents would not leave me to be the one to block Did it work? I don’t know, because none of them ever played the game with me again!

Then there is the so-called “Grosvenor Coup” in bridge, for which there was a vogue a few years ago. Good bridge players try to find improvements in their card play – for example a different way to handle a situation which, depending on the lie of the cards, is sometimes better than the standard approach, but never worse. (This is clearly a good thing: it’s like choosing a dominant row in a game theory matrix, as we discussed last month.) The Grosvenor Coup is the reverse of this: one makes a play which might do worse than the normal one, but can never do better. Why on earth would one do that? The theory is that the opponents will never imagine that you might be doing something so stupid, so they won’t make the play that would take advantage, and they will be so disconcerted when they realise what you have done, that their concentration for the rest of the evening will suffer, to your advantage.

So we’ve seen a number of reasons why it might be best not to make what is objectively the best play. Let’s now move on to another paradox.

Many of the games we are interested in are necessarily finite games, in the sense that they are guaranteed to end within a finite number of moves. A hand of bridge starts with a bidding sequence involving at most 319 bids, if I’ve calculated correctly (which I may not have!) and then the playing of 52 cards: then it is over. A game of chess cannot go on for ever, because of the rule which says that it is drawn if 50 moves are made without a piece being taken or a pawn being moved, since there are only a limited number of pieces that can be taken, and each of the sixteen pawns can move only seven times at most, so after a finite number of moves there are no pieces left to be taken or pawns to be moved. In the game Hex, invented independently by Piet Hein and John Nash, two players alternately place counters on a hexagonal grid, attempting to form a chain of their own colour from one side of the board to the other. The game must end when every cell on the board contains a counter, so Hex too is finite. A game which is guaranteed to end within a finite number of moves is called a “finite game”.

In many of these games, like hex and chess, the first player seems to have an advantage. One way to reduce that advantage would be for one player to choose which game to play, allowing the second player to have first move in that game. Then, if I think I am better than you at chess but that you are better than me at hex, then I would choose chess over hex. This is the principle of the game “Hypergame”, invented by William S. Zwicker in 1986 to demonstrate a paradox, although similar games were discussed earlier by Raymond Smullyan and Martin Gardner.

Hypergame is a two-player game based on this idea. The first player can nominate any finite game of their choice. The two now play this game, with the second player in Hypergame taking the first move in the first player’s chosen game. Hypergame is clearly a finite game, because after the first move in which the first player chooses a finite game, we will be playing this finite game, and one move more than the number of moves in a finite game is necessarily still finitely many moves.

A month ago I introduced this game at the Maths Arcade we run at the University of Greenwich – which is an informal gathering of maths students and staff where we often play various strategy games. This is what happened when Stephanie and Hurkan played:

[VIDEO of students playing Hypergame]

Let’s just check out the logic. A game of Hypergame involves one move in which the first player chooses any finite game (let’s call it game X), and then continues with all the moves involved in playing game X. But since game X is necessarily finite, then the Hypergame must end in one turn more than game X, so that must also be a finite number of moves. So whichever finite game the first player chooses, Hypergame must end in a finite number of turns. So Hypergame is certainly a finite game.

But if Hypergame is a finite game, then Hypergame allows the first player (in this case Stephanie) to nominate any finite game. So it was perfectly legitimate for Stephanie to nominate Hypergame. In accordance with the rules, the players now play Stephanie’s nominated game – that is Hypergame – with the second player – Hurkan – making the first move. Hurkan can therefore choose any finite game he wishes – and since Hypergame is a finite game, Hurkan was fully entitled to nominate Hypergame. This meant Stephanie could nominate any finite game, and she chose Hypergame again, and so on.

So although we thought we had proved that Hypergame is a finite game, it appears that it can go on forever. This is in fact related to Russell’s set theory paradox, which I discussed in my January lecture. One can argue that if Hypergame is a finite game, then it is a legitimate choice for the first player in a game of Hypergame, and then for the opponent, and so on, in which case Hypergame can last infinitely long, so it is not a finite game. On the other hand, if Hypergame is not a finite game, then it is not a valid choice for the first player, in which case the counter-example doesn’t arise and Hypergame is necessarily finite after all. We see the analogy with Russell’s set of all sets that do not contain themselves..

So let’s move on. Let’s consider another two-player game which demonstrates unexpected behaviour. We’re going to toss a penny repeatedly. The coin is fair so it is equally likely to land heads or tails on each toss. Each of us will choose a possible sequence of three outcomes – for example you might choose Heads – Tails – Tails and I might choose Heads – Heads – Tails. The winner is the player whose chosen sequence comes up first in three consecutive tosses of the coin. So for example, if we toss the coin repeatedly and the sequence begins Tails – Heads – Tails – Heads – Tails – Tails – Heads, then you win because the fifth, sixth and seventh tosses formed your sequence and my sequence has not yet appeared. Incidentally, the probability that the game will go on for ever, with neither of our sequences ever coming up, is zero, so we don’t have to worry about it not finishing.

This game is called Penney Ante, not because it is played with pennies, but because it was invented by Walter Penney, who published it in 1969. I was introduced to it as a schoolboy when I went to an open day at the maths department at Edinburgh University, and I still remember the day! The interest in this game is as follows.

Being a generous fellow, I will allow you to choose your sequence first. That is obviously to your advantage, because you can choose the best sequence! Suppose for example that you choose the sequence Heads – Heads – Heads. I can’t choose the same sequence, so I might choose Tails – Heads – Heads instead. Who is likely to win?

Well, let’s suppose your sequence appears for the first time in tosses n, n+1 and n+2. What is the probability that my sequence came up earlier? Well, if n is one, so that the first three tosses were three heads, then you have won, and the probability of that is 1 in 8. If not, then there was a toss immediately before the nth toss – what happened in toss n-1? Well, it can’t have been another head, for if it was, then there was a sequence of three consecutive heads at tosses n-1, n, and n+1, but that contradicts our assumption that the first sequence of three heads began with toss n. So toss n-1 must have come up tails, in which case we had the sequence Tails – Heads – Heads immediately before your sequence, and I must have won the game, either with that sequence in tosses n-1, n, and n+1 or possibly with some earlier sequence of Tails – Heads – Heads.

So this shows that with these choices the only way that you can win is if the first three tosses are all heads – a 1 in 8 chance. Otherwise my Tails – Heads – Heads sequence is bound to come up before your Heads – Heads – Heads. So my careful selection of Tails – Heads – Heads has given me a seven in eight chance of winning.

So three Heads was a poor choice for you. Perhaps you should have chosen my Tails – Heads – Heads rather than leaving it for me. Well, if you had done so, then I would have chosen Tails – Tails – Heads, and it can be shown by a similar argument shows that my chance of winning is now 2/3. Indeed, whatever you choose, I can pick a sequence which gives me at least a 2/3 chance of winning. If you choose Tails – Heads – Tails then I choose Tails – Tails – Heads which wins two times in three. If you choose Tails – Tails – Heads then picking Heads – Tails – Tails means I win three times in four, and so on.

It seems counter-intuitive that the second player has such a high chance of winning. We are used to a property called transitivity. If I prefer chocolate brownie to cheesecake, and I prefer cheesecake to fruit salad, then you can naturally assume that I prefer chocolate brownie to fruit salad. Even although this doesn’t always work in real life – just because Arsenal beat Manchester City and Manchester City beat Spurs does not mean that Arsenal will necessarily beat Spurs – it is a natural way of thinking in many contexts. But Penney Ante is intransitive. With the obvious abbreviation, TTH has the advantage over THH. But THH expects to beat HHT. HHT beats HTT, and HTT beats TTH, completing the cycle.

You might think that it is understandable that such behaviour arises in games with a chance element, like Penney Ante and football. But it can happen even when there is no chance involved. Suppose we have some game in which the stronger player always beats the weaker – for the sake of our discussion we’ll assume that chess is like this. We might have three chess teams each consisting of three players, a total of nine players, and we can rank them, with player 1 being the best and player 9 the worst. Suppose team A comprises the players ranked 1, 5 and 9, team B has players ranked 2, 6 and 7 and team C has players ranked 3, 4 and 8. Then when team A plays team B, players 1 and 5 will win for A while 9 loses, so team A wins by 2 games to 1: therefore team A is better than team B. Team B will beat team C by 2 games to 1, with wins for players 2 and 7, so Team B is better than Team C. So if the situation were transitive, then team A would certainly beat team C. But in fact players 4 and 8 for C beat their counterparts in team A, and we find that team C beats team A!

Here is a curious conundrum which results from non-transitivity. Suppose I am an HR manager selecting a new employee for my company. I shortlist candidates A, B and C, and interview them to rank them according to three equally important criteria – perhaps industry experience, technical skills and communication ability. Following the interview, we have the following ranks:

Industry experience Technical skills Communication ability Best A B C Second best B C A Third best C A B

We see that it is hard to make a decision. The situation is perfectly symmetrical and no candidate is better or worse than any other. We have an impasse.

Then candidate C phones up to say that they are withdrawing their application because they have been offered another position. So we remove them from consideration, leaving this situation:

Industry experience Technical skills Communication ability Best A B A Second best B A B

Now, it is clear that A is a much better candidate than B – A outranks B in two of the three criteria. But when C was a candidate, we were unable to choose between the three. What is happening? Are we wrong in now preferring A to B? If not, how did C’s presence hide this superiority from us? Such are the difficulties of non-transitivity.

We come now to the paradox from which this lecture takes its title. We’re going to discuss certain carefully contrived coin-tossing games. In these games, I am playing against the bank, and the rule is simple: we toss a coin repeatedly, and every time it comes up heads, I win a penny from the bank, while every time it results in tails, I lose a penny to the bank. If the coin is unbiased – that is, it is equally likely to land heads or tails, then the game is fair, and in the long run I will expect to break even.

However in the games we are going to play, the coins are not unbiased. First I’m going to consider Game A, in which the coin is very slightly biased against me. We’ll say that the probability of heads is just under one half: being mathematicians, we’ll say that it is 0.5 minus epsilon, where epsilon is a very small positive number. In all the following examples I will take epsilon to be 0.005, so for game A my chance of winning each toss is 0.495. Theory suggests that, out of 1000 tosses, we would expect an average of 495 to come up heads, and 505 tails, so my expected result over 1000 rounds would be an average loss of 10 pence.

I have created an Excel spreadsheet to demonstrate this game over 10000 tosses.

[EXCEL DEMONSTRATION]

We see that although there is some random variation, over 10,000 rounds I generally lose between 50 and 200 pence. I’ve carried out much larger trials, simulating 100,000 sets of 1000 rounds of this game. Out of these 100,000 I came out ahead in 36,726, and my average loss was 9.892 pence per 1000 games, which is very much what one would expect. The bias is slight but enough to make me a consistent loser in the long run, even if I come out ahead in over one-third of the 1000-turn sequences.

We’re now going to introduce another game, Game B. This one is played with two coins. I start off with capital of zero, and again I win a penny every time my coin comes up heads, and lose a penny when it comes up tails. The first coin is heavily biased against me - it comes up heads with a probability of just under one tenth – that is, 0.1 minus epsilon – so on average I will lose just over nine times out of ten. But the second coin favours me – it comes up heads with probability just under three-quarters: 0.75 minus epsilon, so I win just under three quarters of the tosses. Which coin do I use for each toss? Well, if my capital – my total winnings so far – is a multiple of 3, I use the first coin, which gives me a very small chance of winning, while if my capital is not a multiple of 3, I use the second coin, and have almost a 3 in 4 chance of winning a penny.

What is the expected outcome of this game? It’s harder to calculate than for Game A. Roughly, with the first coin my expected loss for each toss narrowly exceeds 0.8 pence, while with the second coin my expected gain for each toss is a little less than 0.5 pence. However, because of the way in which the choice of coin depends on the current capital, it’s not easy to calculate how often, in a series of games, each coin will be used. Markov chain analysis can be used to find out for what proportion of tosses one would expect to use each coin, but I found it easier (and I have more confidence in my programming than in my mathematical ability), to estimate this by computer simulation. Again, I’ll show you what happens in Excel when we simulate 10,000 rounds: we generally lose.

[EXCEL DEMONSTRATION]

In my more extended simulation, playing 100,000 sequences of 1000 tosses gave me an average loss of 9.135 pence per sequence: I came out on top in 32,529 of the 100,000 sequences.

So on average if I play this game I expect to lose money, too. So both Game A and Game B are losing games for me: if I play them often enough I can be confident that I will end up with less money than I started with.

So what would you expect to happen if, instead of playing one of these games all the time, I were to choose randomly on each round which of the two games to play? That is, on each round, I toss another, this time perfectly fair, coin, and if it comes up heads I play Game A while if it comes up Tails, I play Game B? Well, the expected value of Game A is negative – I expect to lose 0.01 pennies per toss – and the expected value of Game B is also negative – from my simulation results, a loss of just under 0.01 pennies per toss too. So it seems that both games are just about equally disadvantageous for me, and you might expect that swapping between them at random would have fairly similar results to using either of them on its own. So once again, over a sequence of 10000 tosses, it would seem that I should expect to lose around 100 pence.

I’ve done this on Excel too – so let’s see what happens.

[EXCEL DEMONSTRATION]

We find that when I play 10,000 rounds I come out on top. Perhaps I was just very lucky.

Again, I carried out larger scale simulations of this new game, when one randomly switches between Game A and Game B. Over 100,000 sequences of 1000 tosses, my average loss was … well, in fact I made an average gain of 15.4 pence! I came out ahead, winning money from the bank, in 68.8% of the trials.

This is at first sight astonishing. Randomly switching between two games, both of which one expects to lose, has resulted in a game in which, two times out of three, one wins!

This seems to go against all our intuition, against everything we expect. Most (though apparently not all) mathematicians, when they first meet it, are astounded. In fact we don’t believe it. It is only after trying it for ourselves (by computer simulation over a large number of trials) that we accept that this is really happening. One of the beauties of software like Microsoft Excel is that it provides a quick way to try out things out. If you have access to a computer and are able to do so, I suggest you try it for yourself – it’s the best way to convince yourself that this effect is really happening!

What is going on? Well, basically, when we play game B on its own, we end up tossing the unfavourable coin just under 40% of the time, and our expected loss on these turns outweighs the expected profit from the 60% of the times when we toss the favourable coin. But when we choose randomly between Game A and Game B, on those occasions we play Game B, the unfavourable coin is selected only about 34% of the time, and then the losses we make from that coin are smaller than the gains we make on the 66% of the turns when we use the favourable coin.

It’s not just random switching between the games that can turn two losing games into a winning one. If one alternates two turns of Game A with two turns of Game B, the result is also a winning game. (However, playing one turn of each game alternately is a losing game.)

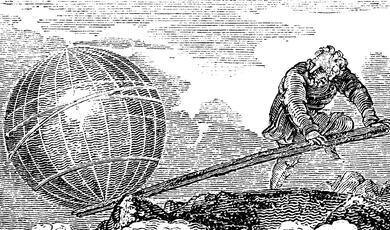

Before discussing the implications of this extraordinary piece of mathematics, I want to tell you how it was discovered. The quantum physicist Juan Parrondo was working on a concept in physics called a Brownian ratchet. This is a thought experiment, akin to Maxwell’s Demon, in which Brownian motion – the random motions of particles in a gas – can perhaps be exploited to create a motor. In the original idea, random buffeting by particles drives a vane which is connected to a ratchet wheel which can turn in only one direction, so those particles which happen to move randomly in one direction turn the wheel, while particles moving the other way have no effect. The result appears to be potentially a perpetual motion machine (although in practice that isn’t actually the case). The Brownian ratchet was discussed at the beginning of the 20th century and was popularised by Richard Feynman, and in fact Parrondo and his colleagues improved Feynman’s analysis in the 1990s. It was to create a model of the Brownian ratchet for teaching purposes that Parrondo created the games described above.

One model of the Brownian ratchet involves particles moving randomly on a surface which switches at regular intervals between being flat and saw-tooth shaped.

(Diagrams by Ambuj Saxena, from Wikimedia Commons, licensed under the Creative Commons Attribution-Share Alike 3.0 Unported license.)

A Brownian particle – think of it as a marble – in the first case will move around randomly: in the second case it will tend to fall downhill towards the bottom of a valley. Occasionally, during a flat period, a particle which starts at the foot of a valley will, through its Brownian motion, move randomly to the left so that, when the surface switches back to the saw-tooth, it will now find itself in the valley next to the left; but rarely will a particle move far enough to the right to enter the next valley to the right. Consequently over time all the particles will drift uphill to the left, and this happens even if the surface is tilted slightly so that one might expect all the marbles to tend to flow downhill to the right. There is an excellent animated simulation at https://elmer.unibas.ch/bm/ where one can observe this effect in action.

Parrondo invented the games we have discussed to demonstrate the Brownian ratchet effect to his students.

When Parrondo’s Paradox came to the notice of mathematicians through an article inNature in 1999, there was astonishment. This behaviour seemed completely unexpected and, frankly, unbelievable. But Parrondo himself didn’t expect this response: to him, the behaviour of the games was entirely natural, since he had created them precisely to model this kind of effect. He was surprised that the mathematicians found the outcome paradoxical. Nevertheless, the name “paradox” has stuck: this is a result which at first sight, to most mathematicians, is completely counter-intuitive.

So how can we take advantage of this paradox? One might wonder, can we use this to make money from gambling games? After all, typically casino games offer a small advantage to the bank (perhaps comparable to our epsilon parameter). Can we take two losing games in the casino and switch between them to bring the odds into our favour?

Well, sadly for us (unless we own casinos) the answer to that seems to be “no”. The rather special structure of the games we created above is not that of the games one plays in casinos. In fiction it may be done: in Richard Armstrong’s 2006 novel, God Doesn’t Shoot Craps: A Divine Comedy, it appears that the paradox can be used by gamblers to beat the casino’s odds, but, such fiction notwithstanding, Parrondo’s paradox doesn’t seem to offer us a way to beat the bank at Monte Carlo.

Perhaps more plausible, although as far as I know, yet to be implemented successfully, is that the paradox might offer a way to mitigate risk in the financial sector. The Parrondo effect works by combining losing games to reduce the adverse effect of the first coin in Game B. Is it possible that we might be able to combine risky investments in this kind of way, to reduce our exposure to occasional adverse results? It seems arguably slightly more likely that we could exploit this effect in mitigating investment risks than in winning in Las Vegas. There are analogies between Parrondo’s Paradox and the older concept of the “volatility pump”, an investment strategy in which capital is divided between two risky investments in such a way that the split is always equal, which supposedly turns short-term losses into long-term gains, but to the best of my knowledge it has yet to be demonstrated that Parrondo’s paradox can make you rich.

So does this paradox have any real-world application? Well, it is still very new, but researchers in a number of fields are looking for, and finding, apparent cases of the Parrondo effect. It has been used to explain the behaviour of a virus which cannot survive at either low temperature or high temperature, but which does survive if high and low temperatures alternate: from the point of view of that virus, Parrondo’s paradox is essential to life! And the paradox has recently been applied in computational models of chemical systems, where oscillating conditions produced greater concentrations of the product than static conditions, with implications in biochemistry and chemical engineering. So this paradox is having an influence well beyond the world of mathematical games.

So in this lecture I have presented a number of counter-intuitive effects that are found in mathematical games, showing some of the rich possibilities the subject offers and how it can still surprise us. I’ve also tried to show how helpful computers are in understanding mathematical ideas and how simulation can verify surprising theoretical predictions. But before I finish, I would like to go back to the University of Greenwich Maths Arcade, though our live video link, to see how Hurkan and Stephanie are getting on with Hypergame.

[“LIVE VIDEO LINK” from Greenwich]

Well, it seems that might go on for some time, so I’d like to thank Stephanie Rouse and Hurkan Suleyman for giving up the rest of their lives to play Hypergame, and Rosie Wogan for filming and editing the videos. (I should probably make it clear that that last video was in fact pre-recorded and I haven’t kept Stephanie and Hurkan playing for several weeks.)

This is the last of my Gresham College lectures, and there are a lot of people I wish to thank. I’m grateful to friends, colleagues, students and members of the audience who have encouraged me, suggested ideas and helped me develop the lectures. The people at Gresham College have been amazing: Valerie, Dawn and all the staff have been unfailingly supportive and have ensured everything ran smoothly, Chloe has produced transcripts uncomplainingly however late I supply the copy, and James, Alex and Richard have coped with my unreasonable technical demands and have made everything work both in the lecture room and on the web. But my warmest thanks are to you. I have been very lucky to have had such wonderful audiences, both here at Barnard’s Inn Hall and on the web. One of the lovely, although daunting, things about Gresham College is that the audience is remarkably varied: there have been people here who know much more than I do, and people to whom the subject matter is new but who bring great intelligence and curiosity. You have tolerated my errors and asked insightful and provocative questions which have given me much to think about, and I am very grateful to you all.

© Professor Tony Mann, 2015

Part of:

This event was on Mon, 16 Mar 2015

Support Gresham

Gresham College has offered an outstanding education to the public free of charge for over 400 years. Today, Gresham plays an important role in fostering a love of learning and a greater understanding of ourselves and the world around us. Your donation will help to widen our reach and to broaden our audience, allowing more people to benefit from a high-quality education from some of the brightest minds.

Login

Login