What the universe is made of

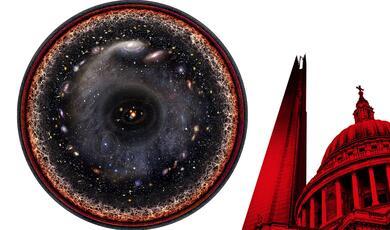

Three simple principles enable us to understand the whole range of structures that we see in the universe.
1. We will consider the importance of 'size' in determining what can happen to a piece of matter in the universe and whether it can come alive.
2. We will look at the problem of determining what the universe is made of, distinguishing luminous matter from dark matter and dark energy.
3. This will lead us to investigate what these dark sides of the universe might be and whether we can identify them in the future.

WHAT THE UNIVERSE IS MADE OF

Professor John D Barrow

Suppose we were to commission a survey. We take a collection of graduate students, or members of the House of Lords, or whoever you want to use for this type of work. We send them out to produce an inventory, a catalogue, of everything that can be found in the universe, with a view to identifying the distinctive types of object that the world is made of. Then we display the results as a graph of mass against size, both on logarithmic scales. At the origin, where both logarithms are zero, would be something that has a mass of one gram and a size of one centimetre. There’s a vast span of objects, going from atoms, for example, down at about ten to the minus eight of a centimetre, up to very large molecules, simple living things like bacteria, and insects and spiders and so forth. If we go on up to space-based objects like asteroids and comets, they have masses of about ten to the fifteen grams and are about the size of a big mountain. Then come planets like the Earth, at ten to the twenty-seven grams. Go about a thousand times bigger and you have a planet like Jupiter, a great ball of gas and liquid hydrogen. Go a million times bigger than the Earth, to ten to the thirty-three grams, and you’re up to the size of a typical star like the Sun. Go beyond single, relatively solid objects, like stars, to collections of objects: galaxies contain maybe a hundred billion stars like the Sun.

Now, what’s odd about this picture is the following. If you were doing your data handling course at school, you might have imagined that this picture would be populated pretty much at random, with distinctive objects in every nook and cranny. But the picture isn’t like that at all. Most of the things seem to cluster along a straightish line, and in some places there’s nothing at all. Why do the things in the universe fill out a rather well-defined strip in the picture and nowhere else? We can understand this, and therefore pretty much the distribution of everything in the universe, with just three simple principles, which will allow us to draw three straight lines on the picture.

Consider a particular constraint on what you might see in the picture, which I call visibility. Visibility is a very simple idea. It’s the idea of the black hole that we talked about in a whole lecture in the last series. Suppose you have a spherical object of radius “R” and you cram an amount of mass “M” into that sphere. If you cram too much mass into that sphere, then the gravitational attraction at its surface will be so great that light cannot escape from it and get all the way to infinity. The speed you need to get away from the surface and out to infinity is what we call the escape velocity. In the case of the Earth, the escape speed at the surface is about eleven kilometres per second. That’s what you need if you want your rocket to escape the Earth’s pull, and not crash back into the sea but make it out to the Moon or wherever it’s destined to go. This escape speed depends on three things: the strength of gravity, measured by Newton’s constant G; the mass; and the radius of the planet from which you’re trying to escape.
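As a rough check on the eleven-kilometre-per-second figure, set a projectile’s kinetic energy equal to the depth of the gravitational potential at the surface. That gives an escape speed v = √(2GM/R). The sketch below evaluates this in CGS units. The constant values are standard textbook figures supplied for illustration; they are not taken from the lecture.

```python
import math

# Standard CGS constants (textbook values, supplied for illustration)
G = 6.674e-8          # Newton's constant, cm^3 g^-1 s^-2
M_EARTH = 5.972e27    # mass of the Earth, g
R_EARTH = 6.371e8     # mean radius of the Earth, cm

def escape_speed(mass_g: float, radius_cm: float) -> float:
    """Speed (cm/s) needed to reach infinity from the surface of a sphere."""
    return math.sqrt(2.0 * G * mass_g / radius_cm)

v = escape_speed(M_EARTH, R_EARTH)
print(f"Earth escape speed: {v / 1e5:.1f} km/s")
```

The result is about 11.2 km/s, in line with the figure quoted above.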

Back in the late 1700s, a British geophysicist, John Michell, first asked what would happen if you had an object in space that was sufficiently dense. It would have such a large amount of mass crammed into a small radius R that light couldn’t escape from the surface. He recognised that you wouldn’t be able to see such an object in the normal sense, because its light would not be able to escape and reach our telescopes. But if it was bound by its gravity to another visible object, we might detect its presence. The visible object would be moving as if it were orbiting an invisible, dark piece of space. This was a rather Newtonian description of what eventually became the concept of a black hole.

If you ask when this escape speed reaches the speed of light, you come across a simple condition. Regions with sizes less than two times G times their mass, divided by the speed of light squared, will be unobservable. They will be black holes. Light cannot escape from them, and we wouldn’t see them on our picture. So we’ll draw that line.
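Take the escape speed v = √(2GM/R), set it equal to the speed of light c, and solve for R. This gives the black-hole line R = 2GM/c². The Newtonian argument happens to give the same radius as the Schwarzschild radius of general relativity. The sketch below evaluates the line for the Sun and the Earth in CGS units, using standard constant values supplied for illustration.

```python
# Standard CGS constants (textbook values, supplied for illustration)
G = 6.674e-8          # Newton's constant, cm^3 g^-1 s^-2
C = 2.998e10          # speed of light, cm/s
M_SUN = 1.989e33      # mass of the Sun, g
M_EARTH = 5.972e27    # mass of the Earth, g

def black_hole_radius(mass_g: float) -> float:
    """Radius (cm) below which the escape speed would exceed c: R = 2GM/c^2."""
    return 2.0 * G * mass_g / C**2

for name, m in [("Sun", M_SUN), ("Earth", M_EARTH)]:
    print(f"{name}: {black_hole_radius(m):.3g} cm")
```

The Sun would have to be squeezed to about 3 km to become a black hole, and the Earth to under a centimetre. Note that the radius grows in direct proportion to the mass.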

The second line we can draw derives from quantum uncertainty. Quantum theory teaches us that everything that has mass, that has energy, has a wave-like quality. So everything we think about as though it were a point really has a wave-like quality. This is not a wave in the sense of a water wave; it’s more like a crime wave, in that it’s a wave of information. If a crime wave hits your neighbourhood, it simply means that you’re more likely to find a crime committed there than if the crime wave hadn’t hit your neighbourhood. So it’s a statement about probability, about information. Suppose an electron wave hits your detector in the laboratory. In a very well-defined sense, this means you are more likely to detect the signal that we call an electron in that detector.

The important thing is that there is one formula of physics that everybody knows: E = Mc². So if you have a mass M, then it’s equivalent to an energy given by its mass times the speed of light squared. But Max Planck found a very simple formula that tells you the wave-like quality of that mass. You equate its energy to a new constant of nature, Planck’s constant, times the frequency of the wave. Equivalently, that is Planck’s constant times the speed of light divided by the wavelength. So you’ll see that the wavelength of a mass becomes smaller as the mass gets larger. That wavelength is the distance over which the concept of this mass as a point is rather fuzzy. Some real objects have a physical size bigger than their quantum size, like this projector, or you and me. They have well-defined sizes and positions, and to talk about how they move around, you don’t have to worry about quantum mechanics. But if you’re a very small thing, your physical size is smaller than your quantum wavelength. Then you are a rather fuzzy and ill-defined thing; you are wave-like and uncertain in location; you’re quantum mechanical. Putting these considerations together, you can see that objects whose physical size is less than their quantum wavelength are unobservable as single, well-defined solid objects.
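Equating Mc² with Planck’s constant times the speed of light divided by the wavelength, hc/λ, gives λ = h/(Mc). This quantity is usually called the Compton wavelength. The sketch below shows how sharply it shrinks as the mass grows. It uses standard CGS constant values supplied for illustration, and an electron and a one-gram object as example masses.

```python
# Standard CGS constants (textbook values, supplied for illustration)
H = 6.626e-27         # Planck's constant, erg s
C = 2.998e10          # speed of light, cm/s
M_ELECTRON = 9.109e-28  # electron mass, g

def quantum_wavelength(mass_g: float) -> float:
    """Wavelength (cm) from equating M c^2 with h c / lambda: lambda = h / (M c)."""
    return H / (mass_g * C)

for name, m in [("electron", M_ELECTRON), ("1 gram", 1.0)]:
    print(f"{name}: {quantum_wavelength(m):.2e} cm")
```

The electron’s wavelength comes out at roughly 2.4 × 10⁻¹⁰ cm, while a one-gram object’s is about 2 × 10⁻³⁷ cm. That is why everyday objects never look fuzzy.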

So if we go back to our picture, we can again delineate a region containing things that we wouldn’t identify as points or objects.

Well, the last line is the one that’s got the most symbols and equations. You don’t really need to worry what the symbols are; just notice the fact that they appear. Suppose we want to understand the density of all the things that are around us. Everything, ourselves included, has pretty much the same density: this pointer, me, the table, the transparencies, the paper. In everyday life we don’t come across things that are millions of times denser than water or sugar or ourselves, and there’s a simple reason for that. All the things I have been mentioning are made of atoms, and those atoms are closely packed together. So the average density of these things is simply the density of a single atom. Your density is roughly that of a single atom, or of the molecules of which you are made. Density is just mass divided by volume, so you can work out the density of a typical atom by dividing its mass by its volume. Most of the mass of an atom is in the protons and neutrons of its nucleus, and its volume is set by its radius. The only thing you need to know about the radius is that it’s determined by constants of nature, such as the charge on an electron and the mass of an electron.

So the punch line is simply that the density of atoms, and therefore of things made of atoms, does not depend very much on the temperature or the environment. It doesn’t really depend on anything except constants of nature, things that don’t change. If you put the numbers in, you get roughly a gram per cubic centimetre, about the density of water. This is why we all have roughly the same consistency and density: we have the density of single atoms. Obviously, there’s a little variation. Something made of iron is a little bit denser than something made of hydrogen or calcium. But all solid objects made of atoms have roughly constant density, so their mass is proportional to their volume, that is, to their size cubed. So here is another line that’s important to us.
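The “constants of nature” argument can be made concrete with a minimal sketch. In Gaussian CGS units, the size of a hydrogen atom is set by the Bohr radius a₀ = ħ²/(mₑe²), which depends only on Planck’s constant and the electron’s mass and charge. The sketch treats the atom as a sphere of that radius holding one proton’s mass. This is an idealisation, and the constant values are standard figures supplied for illustration. The answer should only be trusted to within a factor of a few.

```python
import math

# Standard Gaussian-CGS constants (textbook values, supplied for illustration)
HBAR = 1.0546e-27     # reduced Planck constant, erg s
M_E = 9.109e-28       # electron mass, g
E_CHARGE = 4.803e-10  # electron charge, esu
M_P = 1.6726e-24      # proton mass, g

# Bohr radius: the atomic size fixed by hbar and the electron's mass and charge
a0 = HBAR**2 / (M_E * E_CHARGE**2)

# Idealised density of a hydrogen atom: one proton mass in a sphere of radius a0
density = M_P / (4.0 / 3.0 * math.pi * a0**3)

print(f"Bohr radius: {a0:.2e} cm")
print(f"Atomic density: {density:.1f} g/cm^3")
```

This gives a₀ ≈ 5.3 × 10⁻⁹ cm and a density of a few grams per cubic centimetre. That is the same order as water, with no reference to temperature or environment.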

So, if we go back to our original picture and draw our three lines, we will see what we can now understand about things. I’ve drawn the black hole line, R = 2GM/c². Black holes are odd: their mass is proportional to their size, not to their size cubed. They’re not solid objects. So everywhere in the top triangle of the picture would be unobservable, because it would be inside black holes. Then there is a region that’s shrouded by quantum mechanical uncertainty. Everything down in this triangle has a particular property. If you try to observe it, to make a measurement of it, the act of measuring would perturb it so much that you could not pin it down. So we can’t see anything down here. It’s intrinsically quantum mechanical, and it doesn’t behave as though it’s a point or a solid object. The remaining band says things have constant atomic density. Atoms, molecules made of atoms, bacteria, insects, plants, people, trees: these things are all made of atoms. The band goes all the way up to stars, which on average also have the same density as atomic structures. This is why everything in the picture sits along that straight band. Once you get off the top, galaxies deviate from that line because they are not solid objects. They’re collections of orbiting stars, in which the gravitational pull is balanced by the centrifugal effect of their motion and rotation. That’s why they deviate very slightly.
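The two “forbidden” triangles can be expressed as a simple test on a mass and a size. The sketch below uses a hypothetical helper, `classify`, that checks an object against the black-hole line R = 2GM/c² and the quantum line R = h/(Mc). It deliberately ignores the atomic-density band, which describes where solid objects actually sit rather than what is hidden from view. The constants are standard CGS values, and the test objects are illustrative choices.

```python
# Standard CGS constants (textbook values, supplied for illustration)
G = 6.674e-8      # Newton's constant, cm^3 g^-1 s^-2
C = 2.998e10      # speed of light, cm/s
H = 6.626e-27     # Planck's constant, erg s

def classify(mass_g: float, radius_cm: float) -> str:
    """Hypothetical helper: place an object relative to the two boundary lines."""
    if radius_cm < 2.0 * G * mass_g / C**2:
        return "black hole"        # inside the black-hole line: unobservable
    if radius_cm < H / (mass_g * C):
        return "quantum"           # inside the quantum line: fuzzy, not a point
    return "observable"

print(classify(1.989e33, 6.957e10))   # the Sun at its actual radius
print(classify(1.989e33, 1.0e5))      # a solar mass squeezed into 1 km
print(classify(9.109e-28, 1.0e-13))   # an electron mass confined to 1e-13 cm
```

The three calls give "observable", "black hole" and "quantum" respectively.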

This gives you, I hope, some understanding of the broad-brush structure of the universe. It may appear very diverse and complicated, but if you look at it in the right way, things are relatively well defined. We’ve identified three lines: the black hole line, the quantum line and the atomic density line. We’ve learnt that the masses and densities of things are determined by constants of nature. In fact, the location of everything in the picture is fixed by the values of constants of nature. So things don’t appear because of some process of selection or random outcomes. Consider planets. If you want an equilibrium between gravity pulling inwards and sub-atomic forces pushing outwards, planetary sizes are where that equilibrium exists, and that is why planets are the sizes they are. Similarly for a star: a star is simply an object whose central pressure is so great that it produces temperatures high enough to initiate nuclear reactions. That requires a star to be relatively large, much larger than planets. The planet Jupiter nearly became a star. If it had been a little bit bigger, its central temperature would have been high enough to ignite spontaneous nuclear reactions. So all the objects are there because they are balancing acts between pairs of forces of nature.

Let’s move on and ask some broader questions about the make-up of the universe, and there are many questions you can ask. Let me pick one about the nature of matter and anti-matter. Why is the universe lopsided? What do I mean by lopsided? In the laboratory, in pretty much every particle physics experiment, we make particles and their anti-particles in equal profusion: say electrons and positrons, or protons and anti-protons. So there is almost, but not quite, a complete symmetry between matter and anti-matter.

However, when you look out into space, at the universe at large, we don’t see any anti-planets, anti-stars or anti-galaxies. With one exception I’ll mention in a moment, we don’t see any anti-matter at all in the universe at large. If the Moon were made of anti-matter, then the Apollo probe would have been vaporised when it landed. Now suppose Jupiter and the outer planets were made of anti-matter. The wind of charged particles that leaves the surface of the Sun, the so-called solar wind, would impinge upon them and make them brilliant sources of high-energy radiation. So we know the outer planets are not made of anti-matter. It’s really rather peculiar at first sight. Where is all the anti-matter? There are no anti-supernovae bombarding us with anti-particles in cosmic rays.

The only anti-particles we ever detect on Earth from space are not really very interesting. They are simply anti-protons, that is, anti-hydrogen nuclei, and there’s about one of them for every ten thousand ordinary protons. They’re not, alas, coming from anti-supernovae or exploding anti-stars. All that’s happening is that ordinary protons come into the top of the Earth’s atmosphere, collide with material there and produce showers of particles. A collision of two protons can produce three protons and an anti-proton, written p-bar. Count plus one for each proton and minus one for the anti-proton, and the total is still two, so the balance is preserved. These are so-called secondary anti-protons; they’re just being made in the upper atmosphere.

For a long time, until the mid-1970s, this was a big problem for cosmology and astrophysics as a whole. At first sight, people would have expected the universe to be completely symmetric between matter and anti-matter. But that’s clearly bad news, because it means lots of annihilation, lots of destruction of matter and anti-matter. If you do the calculation, you have a special datum to help you. All over our universe today, you can ask what the average number of protons or atoms per photon of light is. This is a universal number: there are roughly a billion photons for every proton in the universe, which is quite a large number. You could work out this number for a light bulb, or even for yourself. You can calculate how many protons there are in your body. You’re also like a black-body radiator, radiating heat at your body temperature, so you can work out how many photons are in that radiation.

What this means is that the universe must have begun with a little lopsidedness. There were one plus a billionth protons for every anti-proton, that is, a billion and one protons for every billion anti-protons. The anti-protons annihilated with their counterparts amongst the protons. That left just one proton for roughly every ten to the nine photons produced by the annihilation. This is a very odd prescription. It turns out that if the universe had been set up with equal numbers of protons and anti-protons, the number of protons per photon left over would come out at ten to the minus nineteen. You would end up with ten to the nineteen photons per proton. What that’s saying is that essentially everything would have been annihilated.
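The bookkeeping behind “a billion and one protons for every billion anti-protons” can be checked with exact integer arithmetic. The sketch assumes that each proton/anti-proton annihilation ends up as roughly two photons. That is an idealisation, since real annihilations go through intermediate particles, so only the order of magnitude of the result is meaningful.

```python
# Illustrative bookkeeping; "two photons per annihilation" is an idealisation
anti_protons = 10**9
protons = anti_protons + 1          # one extra proton per billion pairs

annihilations = min(protons, anti_protons)   # every anti-proton finds a partner
surviving_protons = protons - annihilations
photons = 2 * annihilations

print(surviving_protons, photons, photons // surviving_protons)
```

This leaves one surviving proton and a couple of billion photons. That is the same order as the roughly one billion photons per proton observed today.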

This was a great puzzle until the mid-1970s, when research into the nature of the fundamental forces made a great discovery: the different forces of nature are not as different as they might appear. Look at the relative strengths of different forces of nature, like the weak force responsible for radioactivity, electromagnetism and the strong nuclear force. See how they behave as the temperature of your environment increases. Their effective strength changes as you go to higher and higher temperatures, because of the particles and effects that are created as the temperature rises. Imagine that you have two billiard balls that interact with a certain strength. Increasing the temperature of the environment is like covering them with rather woolly coats, so that they’re shrouded in softer material, and when they now interact, they do so more weakly. The effect of quantum mechanics and increasing temperature is to do this to the particles of nature, like electrons and positrons and neutrinos. So the strength with which they hit one another changes as the temperature rises, and it changes in a remarkable way. The forces of nature are very, very different in our everyday low-temperature world. But they become increasingly similar in strength as we go back to the very high temperatures experienced in the early universe. This is the source of what people call grand unification. It is the idea that all the elementary particles of nature are linked together, and that the strong, the weak and the electromagnetic forces are just different aspects of the same force. If this is true, every type of elementary particle must be able to talk to, or interact with, every other type.

That was a completely new idea. Protons are made of triplets of quarks. Before 1975, quarks were not believed to be able to turn into particles like electrons or neutrinos, the particles that are called leptons. If they had been able to turn into leptons, the balance between matter and anti-matter would not be preserved. Until 1975, most physicists believed the following about a system of particles and anti-particles. You could change particles into anti-particles. But at the end of the day you could add up the score, plus one for each particle and minus one for each anti-particle, and the total had to be the same no matter what had happened. We say that the matter/anti-matter asymmetry was a conserved quantity. So if the universe was lopsided today, it had to have been made in a lopsided way at the beginning. It couldn’t begin with perfect symmetry and then evolve into a lopsided, matter-dominated universe. But in 1975, this idea of grand unification required that quarks and leptons be able to transmute one into the other. The balance between matter and anti-matter was no longer believed to be a conserved quantity, and this led to some remarkable discoveries. It turned out that no matter how the universe began, no matter how lopsided or symmetrical it was, quarks and their anti-particles would decay at different rates. They would always leave the universe with a very particular lopsidedness, which seemed to be very, very close to the value that we saw. All of a sudden, this mysterious property of the universe, its lopsidedness, was resolved.

One of the rather interesting consequences of this theory is that, as people like to say, protons are not forever. Ordinary protons and atoms are made of triplets of quarks. If this theory is correct, pairs of those quarks can turn into a positron and an anti-quark. The result is that the proton decays into a pion and a positron, and ultimately into light and a positron. So all atoms are unstable, if this unification process is true. Fortunately, the timescale for instability is very, very long. For an individual atom it’s much longer than ten to the thirty-three years, whereas the age of the universe is about ten to the ten years. But of course, if you get a lot of protons together, this can still be an observable rate. It corresponds to less than one atom in your body decaying by this process in your lifetime. So if you get thousands of tons of atoms together and monitor them, you might hope one day to be able to observe this process.
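The need for thousands of tons of material can be made concrete. When the lifetime τ vastly exceeds the observing time t, the expected number of decays among N candidates is about N·t/τ. The sketch below makes three assumptions, all for illustration only. It takes τ as 10³³ years, the figure quoted above. It uses a hypothetical 1,000-tonne detector. And it counts every nucleon as a decay candidate, which is a simplification. The helper `expected_decays` is likewise hypothetical.

```python
# Illustrative estimate; lifetime and detector mass are assumptions
M_NUCLEON = 1.6726e-24            # g, roughly the mass of a proton or neutron

def expected_decays(mass_g: float, lifetime_yr: float, years: float) -> float:
    """Expected decays when the lifetime vastly exceeds the observation time."""
    n = mass_g / M_NUCLEON        # number of nucleons, all treated as candidates
    return n * years / lifetime_yr

detector_mass = 1.0e9             # 1,000 tonnes, in grams
print(f"{expected_decays(detector_mass, 1e33, 1.0):.2f} decays per year")
```

Under these assumptions a thousand tonnes yields fewer than one decay per year. That is why such experiments need enormous masses and long exposures.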

So we understand why the bulk of the universe is indeed in the form of matter, and not equally split between matter and anti-matter. The biggest problem with the matter that remains is that a lot of it is not visible. For the last 20 years or so, astronomers have been confronted in a serious way with the difference between what they like to call mass and light. When you look through your telescope, or with your satellite receiver, what you see in general is light. It may be optical light from stars, it may be X-ray light, it may be gamma-ray light, but you don’t see mass; you just see light. So what you’re seeing in the universe is biased. You’re seeing the things that are hot and energetic, which are beaming radiation out to you.

In the 19th century, this bias was recognised by Simon Newcomb, a pioneer in many areas of science. He was rather frustrated by astronomers’ emphasis on thinking only about the shining stars. He gave a wonderful example. At any one moment, you could take a census of all the horseshoes on Earth and count only the ones that were red-hot, having just been removed from the fire. You would then severely underestimate how many horseshoes there were on Earth. He regarded counting only the shining stars as a similar bias.

The point is that stars are things that have formed only at the places in the universe where the density is really very high, where matter collapses under gravity and becomes dense enough for nuclear reactions to turn on, and for the lights to shine in the dark.

I’ve got a better example, I think, than Mr Newcomb: a satellite-created image of the Earth at night. It’s a montage, of course, since you can’t take a picture of everywhere at once, but it shows the Earth at night, and you can see the characteristics. There are huge areas of darkness, and the lights are on at places like your offices and New York and London and Frankfurt and Tokyo and so on. This is a very graphic image of the fact that mass does not trace light. Most of the mass of the human population is in the great dark regions of China and Africa and India. The light does not reliably trace where the mass of humanity is. The light traces the money, rather than the mass.

Similarly, in astronomy we have the problem of trying to figure out the distribution of the mass, not just that of the light. We can exploit one important general principle. If you see luminous things moving around, then the speed with which they move and the radius of their orbits tell you something about the strength of gravity they’re responding to. It doesn’t matter whether you can see the things that are generating the gravity. You can deduce how much mass is present simply by looking at the motions of the moving luminous objects. Take the solar system. Suppose you were an extraterrestrial whose vision system, for some reason, didn’t allow you to see the Sun, but you could see the Earth’s orbit. By measuring the radius of the orbit, and the speed of the orbit or the time it took to complete, you could deduce the mass of the Sun, even though you couldn’t see it.
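The extraterrestrial’s calculation can be sketched directly. For a circular orbit of radius R and period T, the central mass is M = 4π²R³/(GT²); this is Kepler’s third law rearranged. The orbit radius, period and G below are standard textbook values supplied for illustration, and `central_mass` is a hypothetical helper name.

```python
import math

# Standard CGS values (textbook figures, supplied for illustration)
G = 6.674e-8            # Newton's constant, cm^3 g^-1 s^-2
AU = 1.496e13           # radius of the Earth's orbit, cm
YEAR = 3.156e7          # orbital period of the Earth, s

def central_mass(radius_cm: float, period_s: float) -> float:
    """Mass (g) needed to hold a body on a circular orbit: 4 pi^2 R^3 / (G T^2)."""
    return 4.0 * math.pi**2 * radius_cm**3 / (G * period_s**2)

print(f"Inferred mass of the Sun: {central_mass(AU, YEAR):.2e} g")
```

The result is about 2 × 10³³ g, obtained without ever looking at the Sun itself.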

Pretty much everywhere you look in astronomy, there is a rule of thumb, which you can make as accurate as you wish. When things move around, their kinetic energy of motion, proportional to velocity squared, is in balance with the gravitational potential energy of the force that’s controlling them. This leads to a formula: the speed squared is Newton’s constant G times the mass creating the gravitational field, divided by the size of the region in which the moving object is orbiting. So what you aim to do is measure these speeds at different distances R. You know R, so you can deduce M, the amount of mass that there must be interior to the radius R. This is a game that’s played all over astronomy. It can be shown in a schematic picture. Galaxies like the Milky Way are flat, so-called spiral galaxies, which look rather like a fried egg. We would be located just outside the yolk, as it were, in the disc of the galaxy. All the stars in the Milky Way would be going around the centre in circular orbits, with a speed that depends on how far they are from the centre.
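For circular orbits, the balance described above can be written exactly. The gravitational pull of the mass M(<R) interior to the orbit supplies the centripetal acceleration. The block below is a standard textbook rearrangement added for illustration, not a formula taken from the lecture. It also shows two consequences. A speed rising in proportion to R implies a roughly uniform-density core. A flat speed implies that the enclosed mass keeps growing in proportion to R.

```latex
\frac{v^2}{R} = \frac{G\,M(<R)}{R^2}
\quad\Longrightarrow\quad
M(<R) = \frac{v^2 R}{G},
\qquad
v \propto R \;\Rightarrow\; M(<R) \propto R^3,
\qquad
v = \text{const} \;\Rightarrow\; M(<R) \propto R .
```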

This is what you get if you make observations of distant galaxies. You look at the stars coming towards you on one side and the stars going away from you on the other. On one side, you see a blue shift, a Doppler shift, in the light from the approaching stars. On the other side, you see a red shift from the receding stars. This red shift is much smaller than the cosmological red shift, but it’s relatively easy to measure with very high precision, to five or six decimal places. In all the spiral galaxies that you look at, the velocity curve has roughly the same structure. It increases steadily, proportional to R, and then it becomes flat, and it stays flat until you can’t see the galaxy anymore. No one has ever seen the curve drop down; the galaxy becomes too faint before you detect its edge. What’s remarkable about these observations is that the ordinary optical or photographic image of a galaxy ends, but the galaxy does not. Using radio observations of 21-centimetre radiation, we can observe the rotation of hydrogen atoms in the outer reaches of the galaxy, where you can’t see anything optically. What that shows you is that the galaxy actually goes on. The average galaxy is ten times bigger than the fantastic photographs that you see in astronomy magazines. So the photographic image is just like the lights on the map of the Earth at night. It’s the place where the density is high enough to turn the lights on, but if you go beyond it, there’s still plenty of galaxy. In fact, the bulk of the mass of the galaxy is in these outer regions. There is ten times as much mass in a typical galaxy as there is in the luminous part of it.

I have drawn a rather idealised picture of a few real rotation curves. They all have little bumps on them because the galaxies have spiral arms and so on. Here’s the velocity, and they all tend to go round at about 250 kilometres per second; here’s the distance from the centre in thousands of light years. You see this characteristic rise, and then a very long, flat plateau going outwards, for galaxies of different sizes. There are hundreds and hundreds of rotation curves of this general form.
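A flat rotation curve can be turned into enclosed masses with M(<R) = v²R/G. The sketch below takes the 250 kilometres per second quoted above. It evaluates the mass at three radii, which are arbitrary illustrative choices rather than data from the drawn curves. The constants are standard CGS values, and `enclosed_mass` is a hypothetical helper name.

```python
# Standard CGS values (textbook figures, supplied for illustration)
G = 6.674e-8                 # Newton's constant, cm^3 g^-1 s^-2
LIGHT_YEAR = 9.461e17        # cm
M_SUN = 1.989e33             # g
V_FLAT = 250e5               # 250 km/s expressed in cm/s

def enclosed_mass(v_cm_s: float, r_cm: float) -> float:
    """Mass (g) inside radius r for a circular orbit of speed v: v^2 r / G."""
    return v_cm_s**2 * r_cm / G

for r_kly in (25, 50, 100):  # radii in thousands of light years (illustrative)
    m = enclosed_mass(V_FLAT, r_kly * 1e3 * LIGHT_YEAR)
    print(f"{r_kly:>4} thousand ly: {m / M_SUN:.1e} solar masses")
```

Doubling the radius doubles the inferred mass, even where almost no light is seen. This is the quantitative form of “most of it is dark”.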

So this is the way in which we can determine how much mass there is in galaxies as opposed to how much light. The general rule of thumb is there’s about ten times as much mass as there is luminous mass, so most of it is dark.

We can now move on and think about clusters of galaxies. A so-called rich cluster contains perhaps a thousand galaxies. Clusters of galaxies are like a little swarm of bees, except that the bees are galaxies. They’re moving around at random, but they’re held together by their mutual gravitational attraction. There is a great puzzle that has been known for 70 years. If you add up all the luminous galaxies and deduce their masses, their gravitational pull is ten times too small to hold the cluster together, and it should have dispersed. So there must be about ten times as much material in the cluster, in dark form, to explain why the cluster holds together. That dark material lies between the galaxies, and includes the dark stuff within the galaxies themselves.

Of Einstein’s great predictions, I think there are really two that remain untested. One is the direct detection of gravitational radiation. The other is his prediction that gravitational lenses should exist in the universe. This he predicted in 1907, even before the creation of the general theory of relativity.

If you look at a picture of a cluster of galaxies, there are unusual red strips curving around in arcs. What one is seeing is the effect of the gravity of the cluster and galaxies in the foreground. They are focusing the light and producing multiple distorted images of the galaxies in the background. By looking at the multiple images, you can deduce how much gravity, and how much matter, is producing that lensing. Suppose we are standing over here, looking in this direction, and there is a very massive astronomical object between us and a distant source. We might be able to see it, if it’s a cluster, but it might be completely invisible; it might be a black hole. Gravity attracts the light rays, distorts the light beams and focuses the light. You get an image produced by focusing, and you get two virtual images, which will be identical in ideal conditions. In practice, they’re not quite identical, because there may be other stuff in the light paths, and the paths may be of different lengths.
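The simplest quantitative version of lensing is the bending of a light ray passing a point mass M at closest distance b. In general relativity this angle is α = 4GM/(c²b). The formula is a standard result, not something derived in the lecture. A real cluster’s mass is spread out, so this is only the simplest case. The sketch applies it to a ray grazing the Sun, using standard CGS values supplied for illustration. Run in reverse, a measured angle and distance give the mass doing the bending, which is how lensing “weighs” invisible matter.

```python
import math

# Standard CGS values (textbook figures, supplied for illustration)
G = 6.674e-8        # Newton's constant, cm^3 g^-1 s^-2
C = 2.998e10        # speed of light, cm/s
M_SUN = 1.989e33    # mass of the Sun, g
R_SUN = 6.957e10    # radius of the Sun, cm

def deflection_angle(mass_g: float, impact_cm: float) -> float:
    """Bending angle (radians) of a ray passing a point mass: 4GM / (c^2 b)."""
    return 4.0 * G * mass_g / (C**2 * impact_cm)

alpha = deflection_angle(M_SUN, R_SUN)
arcsec = math.degrees(alpha) * 3600
print(f"Light grazing the Sun is bent by {arcsec:.2f} arcseconds")
```

The result is about 1.75 arcseconds, the classic value for light grazing the Sun.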

The striated blue images that we saw all the way round are the multiple images of objects behind the cluster, stretched out into long caustic strips. This phenomenon of gravitational lensing is seen all over the universe. It gives us another way to determine how much gravitating material there is in a region, whether or not you can see it. You are able to measure the gravitational force, the gravitational potential, irrespective of the nature of the stuff that’s creating it.

In the last few years, the so-called 2dF Survey, the Two-degree Field survey, has probed out nearly ten times further. You can look in two separate directions, and you can see a vastly greater number of objects in this survey; this is the total number of galaxies you’re looking at. When you go to far greater distances, things begin to average out. The structure and distribution of objects turn into a fairly random, uninteresting type of distribution. The farther away you go, the more the structure thins out. So clustering into galaxies, clusters of clusters, and clusters of clusters of clusters doesn’t go on for ever. There is a sort of maximum clustering scale in the universe.

There are two sorts of universe. One gets launched with a speed bigger than the escape speed I mentioned earlier, and will go on expanding forever. The other has a smaller speed, and eventually, rather claustrophobically, heads back to a big crunch. In between is our British compromise universe, which just manages to make it to infinity. The key thing that separates these universes is the density of material they contain. There is a critical density. If it’s exceeded, you are in the closed, claustrophobic universe; if it is not reached, you’re in the more agoraphobic, ever-expanding universe. So it’s interesting to know which of these we live in. The critical density is really tiny. In units of grams per cubic centimetre it is about nine times ten to the minus thirty, which doesn’t mean very much to the ordinary person in the street. But it corresponds to about five hydrogen atoms in every cubic metre, on average. This is a far better vacuum than you could ever make on Earth. The universe is essentially just nothing; it’s just empty space. But of course, in practice, matter isn’t evenly distributed. It’s lumped into galaxies and stars and planets and people. So it’s not a trivial task to decide whether our universe has more or less than this density. We are always going to be confronted with the problem that the vast bulk of the matter is in a non-luminous, dark form.
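The critical density follows from the present expansion rate: ρ_c = 3H₀²/(8πG). The sketch assumes a Hubble constant of 70 km/s per megaparsec, a commonly used value that is not quoted in the lecture. It then converts the resulting density into hydrogen atoms per cubic metre. The constants are standard CGS values supplied for illustration, and `critical_density` is a hypothetical helper name.

```python
import math

# Standard CGS values (textbook figures, supplied for illustration)
G = 6.674e-8                 # Newton's constant, cm^3 g^-1 s^-2
M_H = 1.6726e-24             # mass of a hydrogen atom (~ proton mass), g
MPC = 3.086e24               # one megaparsec, cm

def critical_density(h0_km_s_mpc: float) -> float:
    """Critical density 3 H0^2 / (8 pi G), in g/cm^3."""
    h0 = h0_km_s_mpc * 1e5 / MPC        # convert km/s/Mpc to s^-1
    return 3.0 * h0**2 / (8.0 * math.pi * G)

rho_c = critical_density(70.0)          # assumed Hubble constant
atoms_per_m3 = rho_c * 1e6 / M_H        # 1 m^3 = 1e6 cm^3
print(f"rho_c = {rho_c:.1e} g/cm^3, about {atoms_per_m3:.1f} H atoms per m^3")
```

This recovers roughly 9 × 10⁻³⁰ g/cm³, equivalent to a handful of hydrogen atoms in each cubic metre.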

One thing we might like to do is figure out whether the dark material is in some unusual form. Is it just ordinary stuff like us that’s not shining, or is it some completely new type of material? Cosmologists have a very good way of probing this. In one of my earlier lectures, I talked about how, if you follow the expanding universe backwards in time, when it’s between about a second and a few minutes old it is hot enough and dense enough for nuclear reactions to occur everywhere. You can predict very precisely what results from those reactions, and you can go out and check whether those abundances of very light elements are around in the universe; indeed they are. The elements we are interested in are deuterium, helium-3 and lithium-7. Deuterium has a nucleus of one proton and one neutron, helium-3 has two protons and one neutron, and lithium-7 has three protons and four neutrons.

Now, what’s shown here is the predicted abundance of these elements from the nuclear reactions in the early universe, and how it depends on the density of ordinary atomic matter, the type of material that takes part in nuclear reactions. We can draw this density as a fraction of the critical density we were just talking about. The interesting thing is that if the density lies in a narrow band, we get perfect agreement with the observed abundances of all three elements at the same time. What that tells you is that the amount of ordinary matter in the universe, the sort you and I are made of, falls well short of the critical density. It’s not even a tenth of the critical density; it’s about four or five percent. Notice the way the graphs go. Suppose somebody comes along and says, ‘I think all the missing dark material in the universe is just dead stars or dark planets, and there’s a hundred percent of the critical density in that material, ten times more than you can see.’ We would then predict that the abundance of deuterium would be essentially zero, about ten to the minus twelve, instead of the ten to the minus five that is observed everywhere. Lithium you could just about get away with, but helium-3 would again be too low, so you would be in trouble. So you can’t simply hide the missing material away in simple, familiar forms.

If we take an inventory, what do things look like? Taking into account all these ways of looking, about 23 percent of the material in the universe is in an unknown dark form. The luminous material forms about four percent of the whole. The radiation you can pretty much forget about; there’s almost none of it, one percent of one percent or so. But the biggest problem of all is that 73 percent of the material in the universe is not in this simple dark form that’s orbiting around in galaxies. What is it? In the last few years, astronomers have been much exercised by trying to understand what that material might be, and it has come to be known as dark energy. So we have two problems: what is the dark matter, and what is this other 73 percent?
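The inventory above is simple bookkeeping in units of the critical density. This sketch just tallies the quoted fractions, converts each into a mass density using the figure of nine times ten to the minus thirty grams per cubic centimetre from earlier in the lecture, and leaves out the negligible radiation. The dictionary labels are illustrative choices, not standard names.

```python
# Budget quoted in the lecture, as fractions of the critical density.
# Radiation (about one percent of one percent) is left out.
BUDGET = {
    "dark energy": 0.73,
    "dark matter": 0.23,
    "luminous (ordinary) matter": 0.04,
}
RHO_CRIT_G_PER_CM3 = 9e-30  # critical density quoted earlier in the lecture

total = sum(BUDGET.values())
for name, fraction in BUDGET.items():
    density = fraction * RHO_CRIT_G_PER_CM3  # g/cm^3 contributed by this component
    print(f"{name:>26}: {fraction:4.0%} of critical, about {density:.1e} g/cm^3")
print(f"total: {total:.2f} of the critical density")
print(f"dark share: {BUDGET['dark energy'] + BUDGET['dark matter']:.0%}")
```

The three pieces add up to the critical density, and everything except the few percent of ordinary matter is of unknown nature.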

Two of the candidates people have explored for the so-called dark matter problem go by the rather graphic names of WIMPs and MACHOs. MACHO is an acronym for Massive Compact Halo Object, and WIMP stands for Weakly Interacting Massive Particle. WIMPs are of more general interest to physicists. The idea is that we might expect new types of elementary particle, neutrino-like particles, to exist in profusion in the universe. Because they’re neutrino-like, they don’t take part in nuclear interactions, so they won’t affect the abundances of lithium, deuterium and helium-3. This type of matter sometimes goes by the name of cold dark matter. MACHOs, on the other hand, are ordinary matter that’s just dark, and there can’t be very much of that. It’s not the great bulk of the universe’s missing matter, but there is some of it about, and recently there have been some interesting ways of discovering it. Imagine looking out to the outer reaches of our galaxy towards an ordinary star and monitoring its brightness. Every so often you notice the brightness changing rather significantly: it becomes brighter and then returns to normal. This can happen because a dark object moves across the face of the star, between us and it. When it’s in front of the star, we get a lensing effect: the light rays from the star are focused, so the star’s image is amplified; then the dark object moves on past and the image returns to how it was. We see many, many of these events. So we see a characteristic ordinary field of stars, and then a brightening as an object goes past. This gives us a way of keeping a check on these rather more unexciting candidates for the dark matter.
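The brightening and fading described above can be sketched with the standard point-lens, point-source microlensing formula, A(u) = (u² + 2)/(u√(u² + 4)), where u is the separation between the dark object and the line of sight to the star in units of the Einstein radius. The sketch assumes the lens moves in a straight line at constant speed; the event parameters (closest approach on day 50, a 20-day timescale, minimum separation 0.3) are hypothetical.

```python
import math

def magnification(u: float) -> float:
    """Point-lens magnification A(u) = (u^2 + 2) / (u * sqrt(u^2 + 4))."""
    return (u * u + 2.0) / (u * math.sqrt(u * u + 4.0))

def light_curve(t: float, t0: float, t_e: float, u0: float) -> float:
    """Magnification at time t for a lens crossing the star in a straight line.

    t0  : time of closest approach
    t_e : Einstein-radius crossing time (sets how long the event lasts)
    u0  : minimum lens-star separation, in units of the Einstein radius
    """
    u = math.sqrt(u0 * u0 + ((t - t0) / t_e) ** 2)
    return magnification(u)

# Hypothetical event: closest approach on day 50, 20-day timescale, u0 = 0.3.
for day in range(0, 101, 10):
    print(day, round(light_curve(day, t0=50.0, t_e=20.0, u0=0.3), 3))
```

The curve is symmetric about the moment of closest approach and returns to a magnification of 1 (the star's normal brightness) once the lens has moved well past, which is the signature searches look for.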

One of the great searches in cosmology and particle physics at the moment is the attempt to detect these WIMP-like particles directly, if they exist. If the dark matter in the universe is made of new types of neutrino-like particle, perhaps with a mass 100 times greater than that of a proton, how would we go about detecting them? They’re all around us at the moment; they’re moving through this room, there are millions going through your head right now, they’re moving at about 250 kilometres per second, and there are about 400 of them in every cubic centimetre, but they interact so weakly that you’re still awake. What you can do is think about some very simple physics. If one of these very heavy neutrino-like particles were to come in and hit a tiny piece of silicon, it would, just like a billiard ball, hit a nucleus in the silicon and cause it to recoil. It’s a tiny effect, but the recoil energy is confined to the crystal and results in a slight heating of it, perhaps by a millikelvin. If you build a big enough detector like this, with hundreds of tons of silicon and other materials, you have a very good chance of detecting some of these particles as they pass through the Earth. If the density of these particles is great enough to explain the dark, non-luminous matter in our galaxy, you would expect to detect a few of these interactions per day in a detector of a kilogram in mass, which may require several hundred tons of shielding to get rid of everything else. The problem with this type of experiment is not that these particles are difficult to detect; it’s that it is so easy to detect every other type of particle at the same time, and you have to find a way to exclude those and let through only these interactions.
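The billiard-ball picture can be made quantitative with ordinary elastic-collision kinematics: the largest energy a particle of mass m_χ moving at speed v can give a nucleus of mass m_N is E_max = 2μ²v²/m_N, where μ is the reduced mass. The sketch uses the lecture's figures (about 100 proton masses, about 250 km/s) and assumes a silicon-28 target with its nuclear mass approximated as 28 atomic mass units. It gives a maximum recoil of roughly twenty keV.

```python
# Elastic ("billiard ball") scattering of a heavy particle off a nucleus.
# Maximum recoil energy: E_max = 2 * mu^2 * v^2 / m_N, with mu the reduced mass.
C_KM_S = 299_792.458   # speed of light, km/s
AMU_GEV = 0.9315       # atomic mass unit, GeV/c^2
PROTON_GEV = 0.9383    # proton mass, GeV/c^2

def max_recoil_kev(m_chi_gev: float, mass_number: int, v_km_s: float) -> float:
    m_n = mass_number * AMU_GEV                # approximate nuclear mass, GeV/c^2
    mu = m_chi_gev * m_n / (m_chi_gev + m_n)   # reduced mass of the pair
    beta = v_km_s / C_KM_S                     # speed as a fraction of c
    e_max_gev = 2.0 * mu**2 * beta**2 / m_n    # head-on collision limit
    return e_max_gev * 1e6                     # GeV -> keV

# Lecture's figures: mass ~100 proton masses, speed ~250 km/s; assumed silicon-28.
e = max_recoil_kev(100 * PROTON_GEV, 28, 250.0)
print(f"maximum recoil energy: about {e:.0f} keV")
```

A few tens of keV deposited in a crystal is why the experiments rely on very sensitive, well-shielded detectors.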

There are experiments going on in different places in the world, particularly in Middlesbrough. Years ago, when this began, I was part of this underground physics detector project and was its theoretical spokesperson, which played a role in getting the money for it. It was a rather strange business. You may remember the scandal over alleged child abuse, the Marietta Higgs case in Cleveland. There was a local businessman who was anxious that Cleveland should be known for something other than the Marietta Higgs scandal. He saw a programme on television about supernovae and detecting particles from them, and decided that he wanted his name to be associated with detecting particles.

The experiment is about a mile underground, and being that deep filters out most of the cosmic rays and other processes that would trigger your detector spuriously. The degree of precision required in these experiments is quite fantastic. You cannot use solder anywhere in the entire facility: the natural radioactivity from a piece of solder would be bigger than the signal you’re trying to detect. For the shielding, the ideal material is lead, but very old lead that has lost much of its natural radioactivity; the older the better. They have a mixture: some lead from a sunken Spanish galleon in the Pacific, which was some of the oldest lead available, and, believe it or not, lead from the old roof of Salisbury Cathedral. Roman lead is no good, unfortunately; it’s contaminated with thorium, which has a very high natural radioactivity. So this project is gradually improving its sensitivity, aiming eventually to detect dark matter particles directly if we are indeed immersed in them, and we might hope within the next three or four years to have a definitive answer as to whether we are sitting in a universe full of these Weakly Interacting Massive Particles.
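Why older lead is better can be illustrated with simple exponential decay. The lecture does not name the isotope; this sketch assumes the troublesome activity is lead-210 (half-life about 22.2 years), which is not replenished once the lead has been smelted and separated from its uranium-bearing ore. The ages in the loop are illustrative, not the actual ages of the galleon or cathedral lead.

```python
# Fraction of lead-210 activity left after a given time.
# Assumption: lead-210 (half-life ~22.2 years) dominates the activity of fresh lead.
PB210_HALF_LIFE_YEARS = 22.2

def remaining_fraction(age_years: float) -> float:
    """Activity relative to freshly smelted lead after age_years of decay."""
    return 0.5 ** (age_years / PB210_HALF_LIFE_YEARS)

for age in (0, 22.2, 100, 300, 500):
    print(f"{age:>6} years: {remaining_fraction(age):.2e} of original Pb-210 activity")
```

After a few centuries the lead-210 contribution has fallen by many orders of magnitude, which is consistent with the remark that the older the lead, the better.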

There’s one Italian experiment that claims to have detected them already, but nobody else believes them, so there’s a lot of serious argument about that. I think almost certainly they have not.

When it comes to looking at universes, the expansion of the universe, as we’ve seen, gives us another way to tell whether the universe has more or less than that critical density. Remember, universes that have less than the critical density expand faster and seem to be heading to expand forever, so maybe if we measured how fast the universe is expanding, we could tell something about how much material it must contain.

There are different sorts of universe. One can expand and then collapse, one can keep on expanding forever, and one can be critical, just in between. But observations of our universe in the last few years have revealed that it not only seems destined to expand forever but is accelerating in the process. So it’s one of those universes that has almost exactly the critical density, yet is expanding at an ever-increasing rate.
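For a universe at exactly the critical density containing only matter and a cosmological constant, the expansion history has a closed form, so one can compute when the acceleration begins. This sketch assumes 27 percent matter (dark plus ordinary) and 73 percent dark energy behaving as a cosmological constant, and a Hubble constant of 70 km/s/Mpc, which is not given in the lecture. With those inputs it gives an age of roughly 14 billion years, the start of acceleration at roughly 7 billion years, and dark energy overtaking matter a little before 10 billion years.

```python
import math

# Assumed parameters: flat (critical-density) universe, H0 = 70 km/s/Mpc,
# 27% matter and 73% dark energy acting as a cosmological constant.
H0_KM_S_MPC = 70.0
OMEGA_M, OMEGA_L = 0.27, 0.73
MPC_KM = 3.0857e19   # one megaparsec in km
GYR_S = 3.156e16     # one billion years in seconds

hubble_time_gyr = MPC_KM / H0_KM_S_MPC / GYR_S   # 1/H0 in Gyr

def time_at_scale_factor(a: float) -> float:
    """Cosmic time (Gyr) at scale factor a (a = 1 today), exact for flat matter + Lambda."""
    x = math.sqrt(OMEGA_L / OMEGA_M * a**3)
    return 2.0 / (3.0 * math.sqrt(OMEGA_L)) * math.asinh(x) * hubble_time_gyr

age_now = time_at_scale_factor(1.0)
# Matter density falls as a^-3 while Lambda stays constant.
a_equal = (OMEGA_M / OMEGA_L) ** (1 / 3)        # dark energy density = matter density
a_accel = (OMEGA_M / (2 * OMEGA_L)) ** (1 / 3)  # rho_m = 2 rho_Lambda: acceleration starts

print(f"age today: {age_now:.1f} Gyr")
print(f"acceleration begins: {time_at_scale_factor(a_accel):.1f} Gyr")
print(f"dark energy overtakes matter: {time_at_scale_factor(a_equal):.1f} Gyr")
```

Acceleration starts before dark energy is the largest component, because each unit of dark-energy density pushes twice as hard as each unit of matter density pulls.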

So what’s creating that acceleration? We said that there’s a great mystery about what 73 percent of the universe is. It’s what people have come to call dark energy: a form of material, or energy, in the universe that has an anti-gravitating effect on its expansion. If you want to think of it as a fluid, it’s a fluid with a negative pressure. The pressure is equal to minus its density times the speed of light squared, and it is this mysterious material that is the predominant form of energy in the universe, at around 73 percent. It anti-gravitates because, in the theory of gravitation, what gravity grabs on to and feels is the combination of the density plus three times the pressure, one pressure for each of our three dimensions. When material has this odd equation of state, the sign changes: we get the density minus three times the density, which is like having minus twice the density. Because of its negative pressure, this material has a tension and anti-gravitates, and the great unsolved problem in cosmology at the moment is to determine definitively the identity of this material. So what is it? Why does it have this particular abundance? Why has it come into play to accelerate the universe today? As physicists, we understand its character rather well. It’s what we might call the zero-point energy of the universe, the ground-state, irremovable base energy of the universe. But what is mysterious about it is why it has precisely the value it does, the value that allows it to come into play only after the universe is ten or eleven billion years old, and as a result to dominate the content of our universe completely today.
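The "density plus three times the pressure" argument can be turned into a one-line test for acceleration. Writing each component's pressure as p = w ρc² (w = 0 for ordinary and dark matter, w = −1 for the dark energy described above), the deceleration parameter is q₀ = ½ Σ Ωᵢ(1 + 3wᵢ), and a negative q₀ means the expansion is speeding up. This sketch assumes the lecture's 73 percent dark energy and lumps the remaining 27 percent together as pressureless matter, ignoring radiation.

```python
# Acceleration equation: a''/a = -(4 pi G / 3) * sum(rho_i + 3 p_i / c^2).
# With p_i = w_i * rho_i * c^2 this gives the deceleration parameter
#   q0 = (1/2) * sum(Omega_i * (1 + 3 w_i)); q0 < 0 means acceleration.
components = {
    "matter": (0.27, 0.0),        # (fraction of critical density, w = p / (rho c^2))
    "dark energy": (0.73, -1.0),  # p = -rho c^2, as described in the lecture
}

def deceleration_parameter(parts: dict) -> float:
    return 0.5 * sum(omega * (1.0 + 3.0 * w) for omega, w in parts.values())

for name, (omega, w) in components.items():
    # rho + 3p/c^2 = rho * (1 + 3w): positive attracts, negative repels
    print(f"{name}: rho + 3p/c^2 = {1 + 3 * w:+.0f} x rho")

q0 = deceleration_parameter(components)
print(f"q0 = {q0:.3f} ->", "accelerating" if q0 < 0 else "decelerating")
```

The dark-energy line prints −2 × ρ, the "minus twice the density" from the text, and that term outweighs the matter so that q₀ comes out negative.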

© Professor John D Barrow, 2004

This event was on Thu, 21 Oct 2004
