Not just about numbers

An overview of different types of mathematics and its applications. What is mathematics and why does it 'work'? A look at the way mathematics can tell you things about the world that you cannot learn any other way: how computers have extended the reach of humans, the simple nature of many 'hard' problems, how to win at dice, modern concepts of chaos and complexity, and whether the Premier Football League is just a random process after all.

NOT JUST ABOUT NUMBERS

Professor John Barrow

The series I am going to embark upon this year will look at what one might describe as fairly everyday applications of mathematics. So I will aim to try to make mathematics simple and interesting, and to show you that rather simple mathematics can indeed be very useful and very important in understanding things in the world around us.

In this particular lecture, I am going to jump about; it is going to be a potpourri of a number of different topics to show you some particular ways in which mathematics applies to things that you might not normally have imagined were mathematical questions. In the subsequent lectures, I will narrow in on a more specific topic for each lecture. So next time, I am going to talk about superficiality and surfaces - do you want to have a big surface, do you want to have a small surface, how is that engineered in nature, and what has mathematics got to say about it? But I would like to start today by having a look at what mathematics is, what it might be, and then some of its unusual applications.

What is mathematics? Is it something that we invent, like the games of chess or Ludo? Is it something we discover, like the summit of Mount Everest? Or is it something that we just programme and generate, perhaps by some machine or some process? These are the sorts of questions that philosophers agonise about, and you will find whole books in libraries trying to decide between these different definitions of mathematics. I like to rather short-circuit this question in a way that also sheds some light on perhaps the oddest fact about mathematics, and that is that it works; that it is so useful. So whenever you want to do something in science that is meaningful, it almost always involves mathematics. My wife is very irritated by this, but it is the case that somehow mathematics is so very useful.

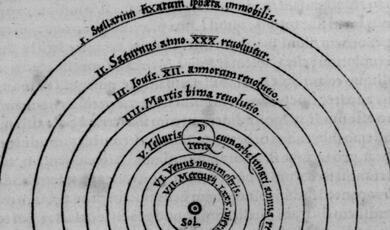

A very simple, useful definition and way of thinking about mathematics that cuts through these complexities is to think of it as the catalogue of all the possible patterns there could be. You will find some of those patterns in the buildings around us; others are on the string vests that you might be wearing under your shirt or on the soles of your shoes; other patterns might be patterns in time, showing how a sequence of operations develops, or they might be in the motions of the heavenly bodies. Some of these patterns are not actually very interesting or useful, and so we do not take much notice of them, but others are. So you begin to see that the mystery of why mathematics is so ubiquitous - why it seems to apply to everything in the world when we want to do something - is not really a mystery at all. There has to be some pattern in the world in order for us to exist, in order for there to be evolution and structure and life, and so the world must be mathematical because there must be patterns.

So there is no mystery as to why the world is described by mathematics. The real mystery is why such simple mathematics - such simple patterns - are so far-reaching and can tell you so much about the workings of the universe. It did not have to be the case that, after just ten years of serious study of mathematics, you know enough to understand the reaches of elementary particle physics, the structure of black holes, and the expansion of the universe. The universe could have been much more complicated. There is no evolutionary reason why we should need to understand the ultimate structure of the universe in order to have evolved on planet Earth. So there is a mystery that such simple patterns, such simple mathematics, can tell us so much, and that gives us some hope that, in learning a little bit about mathematics, and even in coming to these lectures, we might learn something that is really useful and could tell us a good deal about the world.

So mathematics is about patterns, and patterns have interesting properties. There are some rather simple patterns that you would never have guessed. For example, a quarter of the value of π can be expressed as this never-ending series:

π/4 = 1 - 1/3 + 1/5 - 1/7 + 1/9 - ...

The signs alternate, minus/plus, minus/plus, and the denominators of the fractions, one-third, a fifth, a seventh, a ninth, go on forever through the ever-increasing odd numbers. So you can be as intuitive as you like, you can be the deepest, thoughtful philosopher, but you would never have guessed something like that. You would never have intuited that formula from the nature of the universe. You have to do something systematic. You have to understand the nature of the patterns involved.
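To get a feel for how this series creeps towards a quarter of π, here is a minimal Python sketch that adds up the first few terms. The function name `leibniz_quarter_pi` is just an illustrative choice, not something from the lecture.

```python
import math

def leibniz_quarter_pi(terms: int) -> float:
    """Add up the first `terms` terms of 1 - 1/3 + 1/5 - 1/7 + ..."""
    total = 0.0
    for k in range(terms):
        # The k-th term has denominator 2k + 1 and an alternating sign.
        total += (-1) ** k / (2 * k + 1)
    return total

for terms in (10, 1_000, 100_000):
    approx = 4 * leibniz_quarter_pi(terms)
    print(terms, approx, abs(approx - math.pi))
```

The pattern is simple, but the convergence is slow: the error only shrinks roughly in proportion to one over the number of terms you add.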

There are other patterns which are also very simple, but, so far, have resisted all attempts to prove that they are true. A very famous one is the so-called Goldbach Conjecture. This was hypothesised in a letter from Christian Goldbach to the great Swiss mathematician Leonhard Euler in 1742. He had noticed that every even number greater than 2 that he had tried could be written as the sum of two prime numbers; that is, the sum of two numbers divisible only by themselves and the number one.

So here are a few: 6 is 3 + 3; 8 is 3 + 5; 10 is 3 + 7; 36 is 31 + 5, and so on. No one has ever found a counter-example, but there is still no general proof. Indeed, a few years ago, there was a million pound prize being offered by Faber & Faber, the publishers, for anyone who could prove this to be true. So if you think you have a proof, you might find a very nice reward.
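The check described here is easy to sketch for small numbers. The following Python snippet is only an illustration using simple trial division; the helper names `is_prime` and `goldbach_pair` are hypothetical, and this is nothing like the methods needed for record-breaking searches.

```python
def is_prime(n: int) -> bool:
    """Trial division: fine for small n, hopeless for very large ones."""
    if n < 2:
        return False
    if n % 2 == 0:
        return n == 2
    d = 3
    while d * d <= n:
        if n % d == 0:
            return False
        d += 2
    return True

def goldbach_pair(n: int):
    """Return the pair of primes (p, q) with p + q == n and p as small as possible."""
    if n < 4 or n % 2 != 0:
        raise ValueError("n must be an even number of at least 4")
    for p in range(2, n // 2 + 1):
        if is_prime(p) and is_prime(n - p):
            return p, n - p
    return None  # a counter-example; nobody has ever found one

for n in (6, 8, 10, 36):
    print(n, goldbach_pair(n))
```

Every even number you feed in comes back with a pair, but no amount of checking individual cases amounts to a general proof.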

Of course, if you have a super computer, you can explore all these possible pairs of numbers that could add up to the even numbers and test if they are both prime. You might have thought that the overwhelming power of computers would push this problem closer and closer to infinity. But it is not so. It is at the moment only known to be true for all the even numbers up to 6 x 10^16. Here is the record:

389965026819938 = 5569 + 389965026814369

This is the biggest even number which is known to be the sum of two primes, such that all the even numbers smaller than that are known as well. It is really not very big. This took several years of computing on one of the world's fastest computers just to find this pair of prime numbers. It is showing that the numbers are prime that takes all the time. So again, patterns, problems, can be rather simple to state, but require an enormous amount of work to prove or demonstrate.

One of the things that modern mathematics shows is that it is not just about numbers, which is where this lecture's title comes from. If we look back to the time of Pythagoras, people in ancient civilisations the world over were interested in the numbers themselves. They were thought to have a meaning: thirteen is unlucky; seven has some deep spiritual sense; forty is the number of pain and suffering and so forth. We do not do that sort of thing anymore, at least in mathematics, so we are not interested in the numbers themselves: we are interested in the relationships between numbers, the properties that numbers have with respect to other numbers. If you look at the back of a modern textbook in mathematics, you will find a collection of words that express this new trend - the relationships between things. So we have terms like mappings, graphs, transformations, programmes, functions, symmetries etc. They are all about relationships between one collection of numbers or patterns and another collection. What is the collection of things that you can do to a pattern that does not change it, or doubles it in size, or does something else to it? So a good deal of mathematics is about this study of relationships between numbers and other quantities.

Well, there are relationships that have rather simple properties, and some that have very unusual counter-intuitive properties. Here is a simple property: being bigger than. So if A is taller than B, and B is taller than C, then necessarily A is going to be taller than C. So this structure, sometimes called transitivity, is part of the essential nature of being taller than. It could not be otherwise. But not all relationships are like that.

An example of a relationship that does not have that property can be arrived at by supposing there are three people - Alex, Bill and Chris - and they are pooling their resources because they want to buy a car. Between them, they have to choose which car to buy. They have got three choices: there is the Audi, which I call A, there is the BMW, B, or there is the Cortina, C. They decide that the only fair way to do it is to have a vote and try and pick which is the popular choice. Alex decides his first choice is the Audi, second is the BMW, and third is the Cortina. Bill thinks he is James Bond, so he picks the BMW as his first choice, his dad had a Cortina so that is the second choice, and he has the Audi third. Chris is a classic car buff and his first choice is the Cortina, second choice is the Audi, and the third is the BMW. So what do we do with these preferences?

Alex prefers A to B to C

Bill prefers B to C to A

Chris prefers C to A to B

These results are rather interesting because with Alex the Audi beats the BMW, with Bill the BMW beats the Audi, and with Chris the Audi beats the BMW, so the Audi is preferred to the BMW by two to one. The BMW beats the Cortina with Alex and Bill but not with Chris, so the BMW is preferred to the Cortina by two votes to one. So you would expect that the Audi should be preferred to the Cortina, but it isn't. The Cortina is preferred to the Audi by two to one.
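The three head-to-head counts can be tallied mechanically. This is a small Python sketch of that tally; the ballots are the three preference orders given above, and the function name `votes_for` is just an illustrative choice.

```python
from itertools import combinations

# Each ballot lists the cars from most preferred to least preferred.
ballots = {"Alex": "ABC", "Bill": "BCA", "Chris": "CAB"}

def votes_for(x: str, y: str, ballots: dict) -> int:
    """Count the voters who rank car x above car y."""
    return sum(1 for order in ballots.values() if order.index(x) < order.index(y))

for x, y in combinations("ABC", 2):
    print(f"{x} over {y}: {votes_for(x, y, ballots)}   {y} over {x}: {votes_for(y, x, ballots)}")
```

A beats B by two to one, B beats C by two to one, and yet C beats A by two to one, so the majority preference goes round in a circle.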

There are some deep theorems in mathematics that show that this sort of paradox infests almost every type of preference voting system that you care to set up. So you have to be very careful in the way that you transfer preferences to a voting system in this way. Later on, in one of the later lectures, I will probably unpack this a bit more, and you can see how the old systems of judging in the skating at the Olympic Games are almost founded upon paradoxes of this sort and were always heading for trouble.

If you want to make some money, you can make some dice that have this property. So here are four dice, and these are the numbers I have put on the six faces.

They are rather funny dice. The first one has got four fours and two zeros. Because we are mathematicians, we will put Pi on every face of the second dice, but you can put three if you really want. On the next one, again because we are mathematicians, we have put e (about 2.7) on four faces and seven on the other two; and on the last dice, we have got three ones and three fives. So these dice are like the previous voting system: if you pick up any of these dice to play me with, I can always pick another one that will beat you. It does not matter which one you choose. So, if you play a long-term game, one dice will beat this one, another dice will beat that one, and so on round the cycle. So again, there is a non-transitivity. In the marketplace, long ago, if you knew this, you could make rather a lot of money.
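You can check the cycle by counting all 36 equally likely outcomes for each pair of dice. The Python sketch below does that. The face values are an assumption on my part: four fours and two zeros, Pi on all six faces, e on four faces with two sevens, and three ones with three fives. The dictionary keys are just labels.

```python
import math
from fractions import Fraction
from itertools import product

# Assumed face values for the four dice (six faces each).
dice = {
    "fours": [4, 4, 4, 4, 0, 0],
    "pi": [math.pi] * 6,
    "e": [math.e] * 4 + [7, 7],
    "fives": [1, 1, 1, 5, 5, 5],
}

def win_probability(a, b) -> Fraction:
    """Chance that one roll of dice a shows a higher number than one roll of dice b."""
    wins = sum(1 for x, y in product(a, b) if x > y)
    return Fraction(wins, len(a) * len(b))

cycle = ["fours", "pi", "e", "fives", "fours"]
for first, second in zip(cycle, cycle[1:]):
    print(f"{first} beats {second} with probability {win_probability(dice[first], dice[second])}")
```

With these faces, each dice beats the next one in the cycle two times out of three, and the last one beats the first, so whoever chooses second always has the edge.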

This type of cyclic structure also infests voting systems of a different sort. Most mathematicians could boast that: "You show me an electoral set-up, tell me who you want to win, and I will construct a voting procedure that will get the winner that you want."

So suppose that we have got 24 people, and there are eight candidates, so it is like picking representatives for some committee structure or something like that. The voters are divided up into three groups of eight, and each of the groups of eight are asked to order their preferences of the candidates from A to H. The first group come up with this alphabetical ordering:

A B C D E F G H

The next group deviate a bit from this because they do not like A, so their preference ordering has B foremost and A last:

B C D E F G H A

The third group have this ordering:

C D E F G H A B

So if you looked at these results, and you say, "Who is the preferred candidate, who has won here?" your intuition would be that C looks to be the preferred candidate. It is first and second and third from the three groups. The other favoured candidates in some groups, A and B, are right down the bottom of others. So C really looks like the person that has the greatest support.

But there is somebody else languishing at the bottom here, H, that looks a complete no-hoper, but H's Mum really wants H to win. So H's Mum comes along and says, "Can you find a way?" So what I suggest is that we have a different sort of election - we will have like a tournament. So instead of just looking at this list, first of all, we will pit F against G. Well, F beats G in all three of the groups, so F beats G 3-0. Then we will play F against E. Here E beats F 3-0 again. At this point E is winning, so we play E against D and D beats E 3-0, so D is the winner so far. Then we will play D against C and C beats D 3-0. So next it is B's turn to play against C. Well, B beats C in the first and second groups but C beats B in the third group, so B beats C 2-1. So now we will play A against B. Well, A beats B in the first group, B beats A in the second group and A beats B in the third group, so A has beaten B 2-1. So A is the winner of the tournament so far. But now we will play A against H. A beats H in the first group, H beats A in the second group, and H beats A in the third group - which means that H is the winner of the tournament!
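The tournament is easy to replay in code. This is a minimal Python sketch, treating each of the three groups as one block vote; the function names and the idea of passing the running order in as a string are illustrative choices, not part of the lecture.

```python
# Preference orders of the three groups, most preferred first.
groups = ["ABCDEFGH", "BCDEFGHA", "CDEFGHAB"]

def head_to_head(x: str, y: str, groups) -> str:
    """Return whichever of x and y is ranked higher by more groups."""
    x_votes = sum(1 for order in groups if order.index(x) < order.index(y))
    return x if x_votes > len(groups) - x_votes else y

def run_agenda(agenda: str, groups) -> str:
    """Play the candidates off one at a time; the winner so far meets the next in line."""
    champion = agenda[0]
    for challenger in agenda[1:]:
        champion = head_to_head(champion, challenger, groups)
    return champion

print(run_agenda("GFEDCBAH", groups))  # the running order used in the lecture
print(run_agenda("GFEDBAHC", groups))  # a different running order
```

The first running order makes H the winner, exactly as described; the second, with C held back until last, makes C the winner. The votes never change, only the order of the contests.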

You see this sort of thing going on in all sorts of places in real life. Suppose that you and your friends want to go to see a movie but you need to decide what to see. Someone says "I want to see Sense & Sensibility." Someone says, "Oh, it's got that dreadful Colin Firth man in it - we can't go and see that!" Somebody says, "Well, why don't we go and see Silence of the Lambs?" and they say, "Well, we've got some children coming...they won't be able to get in. Why don't you go and see Star Wars instead?" So what you do is compare the next suggestion with the last one. So nobody ever sits down and says, "These are the twenty movies that we could go and see - let's all vote and pick the one we should all go to." Somebody suggests one, and then you play it against the next suggestion, then the next one, then the next one, and then the next one. So you could end up going to see a funny movie at the end that just one person may like and everybody else hates. So you actually do this sort of thing quite a lot in coming to decisions, and you have to be rather careful about it in a lot of situations, such as when you are considering interview candidates, where there is a danger that you will play one candidate off against the other in this strange order.

Next, I want to look at something to do with probability that is rather unusual. Some people regard this as the most astonishing thing they ever see in mathematics. It has a variety of names: sometimes it is called the Monty Hall Trick and sometimes it is called the Three Box Trick. Monty Hall was an American TV presenter, who for many years ran a show called "Let's Make A Deal" and on this show, he introduced a game which members of the audience would participate in, and it is rather remarkable.

What the game consists of are three doors, and behind one door is a car for you to win, and behind the other two doors are goats, in the original formulation; so there is a winning door and there are two losing doors. You are asked to make a choice: which is going to be the door that you want to choose? So you make your choice and Monty Hall opens one of the other two doors. He can see what is behind the doors, so he picks one that has got a goat, and then he asks you "Do you want to stick with your original choice of door or do you want to swap?" The question then is what should you do. Many people think that it cannot possibly matter - "I've made my choice, no one's moved the car - he's trying to fool me; because he knows I've picked the car, he wants me to swap!" But the answer to what you should do is rather remarkable: you should always swap. You will double your chance of winning the car if you swap.

So I will show you this in two ways. Here is a rather simple way of looking at it. So suppose you have chosen the box that we will number 1, and then there are these two other boxes, 2 and 3. So what is your chance of having picked the car? At the outset, it is one in three: there are three boxes, each with an equal chance for you of the car being behind any one, which makes it a one in three chance. So what that means is that, because there is a probability of a third of being in each box, the probability of the car being in the other two boxes that you have not chosen is two-thirds. At this point Mr Hall opens a box that hasn't got the car in it. Let's say he opens box 3. So there was a two-thirds chance of the car being in boxes 2 or 3, he has demonstrated that it is not in 3 - the probability is now zero of the car being behind three - so the two-thirds probability is that it must be in 2, whereas your probability of it being in 1 is still one-third. So you should swap, because it doubles your chances of winning the prize.

Here is another little cartoon version:

So let us suppose that this is the set-up. The car is in the first box at 1, and there are the two goats in the second two boxes. Suppose you pick this first box, and you would have picked correctly. What he is going to do is to open a door and show a goat and invite you to swap. If you swapped, you would lose, because you had picked the car, so if you did this, you would lose, and you have a one in three chance of doing that.

Alternatively, suppose you pick the second door. Well, Monty Hall has got to open a door that has not got the car in it, and you have not chosen, so he has got to open the last door. So when he does, and he invites you to swap, you will swap to the car and you will win, so you will do that a third of the time.

A third of the time, you will pick the third box, which gives us the same situation as last time. He cannot pick the box with the car in or the one that you have picked, so he has to open the second box. So if he invites you to swap, you will swap to the first door and get the car.

So you have a one-third chance of making the wrong switch, and a one-third plus another one-third of making the right choice: you have a two-thirds chance of winning. So you should always swap.
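If you still do not believe it, you can let a computer go through every case. The Python sketch below enumerates all the equally likely positions of the car and first choices, using exact fractions. It assumes, as in the story, that the host always opens a goat door you did not pick, and (my assumption) that he chooses at random when he has two goat doors to pick from. The name `monty_hall_odds` is just illustrative.

```python
from fractions import Fraction

DOORS = (1, 2, 3)

def monty_hall_odds():
    """Return (chance of winning if you stick, chance of winning if you swap)."""
    stick = Fraction(0)
    swap = Fraction(0)
    for car in DOORS:          # the car is equally likely to be behind each door
        for pick in DOORS:     # your first choice, made without knowing where the car is
            case_weight = Fraction(1, 9)
            # The host may only open a door that is neither your pick nor the car.
            openable = [d for d in DOORS if d != pick and d != car]
            for opened in openable:
                weight = case_weight / len(openable)
                # The single door that is neither picked nor opened.
                other = next(d for d in DOORS if d not in (pick, opened))
                if pick == car:
                    stick += weight
                if other == car:
                    swap += weight
    return stick, swap

stick, swap = monty_hall_odds()
print("stick:", stick, "swap:", swap)
```

Sticking wins one time in three and swapping wins two times in three, which is the doubling of your chances described above.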

A second example of probability telling you things that you might not have guessed regards football. I am sure you are all in love with the Premier League. The set-up in the Premier League is that twenty teams play each other twice, so because you do not play yourself, except in practice, each team plays 38 games, home and away. Well, let us simplify the whole thing, to save the bother of playing the matches, paying the players and all that stuff.

Suppose we imagine that this is a little game of a probabilistic sort. On the average, one football match in four is a draw. So we will play each game, either with a dice or on the computer, following a very simple rule: that there will be a one in four chance, a 25% chance, of it being a draw, and an equal chance of it being a home win or an away win. So that means a three-eighths chance of it being a home win, and a three-eighths chance of it being an away win (because three-eighths plus three-eighths is three-quarters, plus a quarter, is one):

Probability of a draw 1/4

Probability of a home win 3/8

Probability of an away win 3/8

So you tell your computer this is the chance, or you have an eight-sided spinner, and on two of the eight sides, you write "Draw", on three of the sides, you write "Home Win", and on three of the sides, you write "Away Win". For every game, you spin it, and you do it for every possible pairing of teams in the league, home and away, and you tot up the results to get a league table, and this is what you get:

For Arsenal supporters who feel a bit lonely, I have compared the actual results with the Premier League a few years ago, when Arsenal happened to win, but there is not a great deal of difference year on year.

So the team that comes out the top, I will call it team number 1, and the number of points that they get as a result of the spinner is marked in red on the right. This goes all the way down to the team at the bottom, who get 31.

The actual system of points that existed that year is marked on the far right in blue. There is nothing special about that year, but what you see about this, which is really remarkable, is that if you forget about the top three teams, who were Arsenal, Manchester United and Chelsea, the simulated league has pretty much the same points pattern as the real league, all the way down to the bottom. So if you forget about the top three teams, who are doing an awful lot better than a random process with a three-eighths chance of winning at home or away, everyone else produces a pretty good match to that random process. There is a little bit of difference in the middle, because the teams in the middle tend to be more likely to win at home and lose away. But teams at the bottom lose all the time and teams at the top win all the time. So this simple probability model gives you a very good picture of what is going on. You notice that, in some ways, the distance between the top team and the fourth team, in reality, thirty points, is almost the same as the distance between the fourth team and relegation. So Liverpool were closer to being relegated than they were to winning the League, even though they came in fourth place. So it is a highly uncompetitive situation at the top, and it all goes to show that, as they say, football is indeed a funny old game!
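The spinner league described above is simple to run yourself. Here is a minimal Python sketch of it. It assumes the usual three points for a win and one for a draw, which the lecture does not spell out; the function name `simulate_league` and the `seed` parameter are illustrative choices.

```python
import random

def simulate_league(n_teams: int = 20, p_draw: float = 0.25, seed=None):
    """Play every home-and-away fixture with a spinner and return the points, best first."""
    rng = random.Random(seed)
    p_home = (1 - p_draw) / 2          # 3/8 when p_draw is 1/4; the away win gets the rest
    points = [0] * n_teams
    for home in range(n_teams):
        for away in range(n_teams):
            if home == away:
                continue               # you do not play yourself
            spin = rng.random()
            if spin < p_draw:
                points[home] += 1      # assumed scoring: 1 point each for a draw
                points[away] += 1
            elif spin < p_draw + p_home:
                points[home] += 3      # assumed scoring: 3 points for a win
            else:
                points[away] += 3
    return sorted(points, reverse=True)

table = simulate_league(seed=2024)
print("top:", table[:4])
print("bottom:", table[-3:])
```

Every run gives a different table, because no team is better than any other in this model; the spread of points from top to bottom comes from luck alone.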

Let us now move on and talk a little about computers, which I mentioned at the beginning. We tend to have this view that somehow computers can solve all problems, and maybe you do not need mathematicians anymore and nor do you even need to think about things. You just tell the computer what you want to work out, and sit back and set it going. Well, you already saw how really the computer did fairly poorly at finding the prime numbers which added up to make even numbers, up to one 16 digits long. In the last 25 years or so we have seen computers helping mathematicians prove theorems by exploring huge numbers of cases which might make the theorem fail, so they check many things. Computers are good at doing that, and they can explore things in a fairly random way. But it is interesting to ask whether there are certain things that are beyond the reach of computers, and whether there are things where computers might help you.

A famous case of computer-assisted proof is a subject dear to the heart of my predecessor as Gresham Professor of Geometry, Robin Wilson, and that is the four colour theorem. This was a famous conjecture that had been around for a long time: if you have any map, for instance one of the states of the USA or of the counties of England, and you want to colour the states in such a way that you do not have adjacent states of the same colour, the number of colours that you need in order to be able to colour any map of this sort is just four. No one had ever found a counter-example to this, but it was not until 1976 that a proof was found, by a rather dull method, again, of elaborating and searching through huge numbers of possible counter-example maps.

So that was a rather classical, almost uninteresting, application of computer-think to doing mathematics. A more interesting type of general problem that we think about is a problem that we class as "hard" in a very specific sense.

We can divide mathematical problems into two collections: the easy ones and the hard ones. The easy ones are not necessarily the ones that you can do, but they are the problems where the work grows in step with the number of bits in the problem. Suppose you are doing your accounts for the taxman, and you have 26 sources of income: then, roughly, it will take you 26 times as long to complete all those bits of the tax form as it would if you just had one source of income. Here, each time you add another source of income, you will just increase the calculation time in proportion to the number of those sources of income. So this is a classic easy problem: as it gets bigger, the computation time grows in proportion to the number of parts of the problem.

A hard problem is much nastier: each time you add a new bit, the calculation time doubles. The doubling function, 2^N, grows very fast...2, 4, 8, 16, 32, 64, 128... You are very quickly threatening the computer with massively long computations. This is the essence of a hard problem: N does not have to be very large before it can defeat the fastest computer on Earth. In some ways, this is a good thing, because all the encryption systems that are used commercially rely on it. When you buy a book at Amazon or you put your PIN into the hole in the wall outside the bank, what you are doing is putting numbers into an operation such that, if a fraudster wanted to find your PIN and access your account, they would have to carry out one of these hard calculations that would take so long - hundreds of years - that it would be useless for breaking into your bank account.

A classic and very simple example to give you an idea of the large numbers is the Monkey Puzzle. This was sort of the poor person's Rubik's Cube that existed when I was a child. It was one of those Christmas presents that you really did not want to get! So you were either going to get the Set Ten Meccano from Auntie Jill or the Monkey Puzzle of nine pieces, and you were just hoping it was not going to be the monkey puzzle of nine pieces.

So the monkey puzzle was just like the Rubik Cube. You have the tops and bottoms of little monkeys on each piece and they are in different colours, and you have got to put the puzzle together so that you join up whole monkeys of the same colour across the divides. So it is very simple and so it seems like it would not take you very long to do. If someone shows you the solution, you know instantly it is the solution.

But suppose you had to tell your computer to do this by some search and systematic process. Since you have got nine cards, you put one down and you have then got eight choices for the next one, seven for the next one, six for the next, and so on. Therefore, the number of ways you have got to choose the cards to put them down is 9 x 8 x 7 x 6 x 5 x 4 x 3 x 2 x 1, which we call nine factorial, and each card has got four orientations that you can put it down in. If there are nine cards, they are all independent of one another, so that is 4 x 4 nine times; four to the power nine choices. So the computer would need to work through 9! x 4^9, which gives 95,126,814,720 possible moves to check out. Which it can probably cope with.

So let us go to the deluxe puzzle that is five by five. That has got 25 cards, so we have got 25 factorial times four to the power 25 configurations to check (which is written as 25! x 4^25). Well, that number is so large we cannot fit it on the wall here - we cannot even write it down. If we had a computer that was checking a million of those configurations every second, it would take about 553 trillion trillion years to check them all!
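These counts are easy to reproduce. The Python sketch below works out the number of configurations for a puzzle with any number of cards and how long a machine checking a million per second would need; the function names and the 365.25-day year are my own illustrative choices.

```python
import math

SECONDS_PER_YEAR = 365.25 * 24 * 3600

def configurations(cards: int) -> int:
    """Orderings of the cards (cards!) times four orientations for each card."""
    return math.factorial(cards) * 4 ** cards

def years_to_check(cards: int, checks_per_second: int = 1_000_000) -> float:
    """Time to work through every configuration at the given checking rate."""
    return configurations(cards) / checks_per_second / SECONDS_PER_YEAR

print(configurations(9))              # 95126814720
print(years_to_check(9) * 365.25)     # days needed for the nine-card puzzle
print(f"{years_to_check(25):.3e}")    # years needed for the 25-card puzzle
```

The nine-card puzzle takes a little over a day at that rate. The 25-card puzzle comes out at roughly 5.5 x 10^26 years, which is hundreds of trillions of trillions of years.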

Another one of these hard problems is the Travelling Salesman problem. This is a very famous problem and if you can solve this one, there is a million dollar prize waiting for you.

It is called the Travelling Salesman problem because we imagine that we have a salesperson who has got to visit all these different cities at these six nodal points, and these numbers are the distances between or the cost of travelling between. The problem is: what is the route you should take so you visit every city but you minimise the total cost or distance?

With an example like this, with a bit of trial and error, you can solve it - the shortest minimum cost trip is the one marked in red. But each time you add a city, the computational cost doubles, and soon it becomes prohibitively difficult and it is computationally too expensive to find the optimal solution. If you could solve this, you really would be very wealthy and very famous, because it appears that if you can solve one of these computationally hard problems, then you will be able to use that solution to solve all the others. So that is why there is a million dollar prize on the solution of this specific problem. It is not that people particularly want to travel around in this particular way, but it is the key that unlocks the solution of all the other hard problems.
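For a handful of cities the trial and error can be handed to a computer, which simply tries every possible round trip. The Python sketch below does this for six made-up cities. The coordinates are hypothetical (they are not the distances from the lecture's diagram), the cost of a leg is taken to be the straight-line distance, and the function names are illustrative.

```python
import math
from itertools import permutations

# Hypothetical city positions, purely for illustration.
cities = {"A": (0, 0), "B": (3, 0), "C": (3, 4), "D": (0, 4), "E": (1, 2), "F": (5, 2)}

def tour_length(order, coords) -> float:
    """Total length of the round trip that visits the cities in this order and returns home."""
    legs = zip(order, order[1:] + order[:1])
    return sum(math.dist(coords[a], coords[b]) for a, b in legs)

def shortest_tour(coords):
    """Brute force: fix the starting city and try every ordering of the rest."""
    start, *rest = sorted(coords)
    candidates = ((start, *perm) for perm in permutations(rest))
    best = min(candidates, key=lambda order: tour_length(order, coords))
    return best, tour_length(best, coords)

best, length = shortest_tour(cities)
print(best, round(length, 3))
```

With six cities there are only 120 orderings to try. This naive search has to try (N - 1)! orderings for N cities, which grows even faster than doubling, so it becomes hopeless long before you reach a few dozen cities.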

You can see the relevance of this: if you are running British Airways, you want to find the shortest way with the smallest fuel cost, for routing all your flights around the world, through hubs and so forth. So this is just the sort of problem that you want to solve.

How big a problem of this sort have the fastest computers in the world solved? Until a few years ago, I think the biggest problem solved by a single computer was probably this one:

It is really very modest - only 3,038 sites - but this took 1.5 years of computer search to find the answer on the world's fastest computer.

What the problem concerns is rather interesting. This is not a map of cities, but it is a piece of silicon chip, and a robot needs to wire the circuit so that you do not produce any short-circuits but you visit every single wiring point. If you can find the shortest and quickest way to do that, and you are producing millions of these chips every week in your company, you will be making a big commercial saving on your robot's time and effort. So that was the reason for this problem: find the shortest wiring trip which would visit every one of the terminal points on the chip. So this is a tiny object, just a few millimetres square.

The all-time record at the moment, which involved parallel computing, so you cheat by having many computers all working at the same time, is a real tour - it is a tour of Sweden that visits every little town, village and hamlet. There are more than 24,000 places to visit here and it took eight years of parallel computing to find that trip that goes around every city. So you can see that these problems do not have to be very big before they defeat you completely. So computers are not going to replace human mathematicians; they are not going to replace your ability to find clever ways to divide these problems up into smaller problems, to rule out whole collections of the things that you need to search. So a computer remains a tool, rather than a panacea, for these types of complex problem.

The other type of intractable problem, or problem that takes a lot of computing, is a problem that contains a property that you have heard a lot about in recent years - it is almost part of popular culture - it is the phenomenon of chaos. My definition of chaos is that it is simply a dramatic sensitivity to ignorance.

So if you have a little bit of uncertainty in the state of the weather today, then in the next few days you may find that the weather forecast will be completely different to what you thought it was. It is not that we are poor at forecasting or we do not know how the weather changes. We do, rather well, but we do not precisely know the state of the weather at any single point. We have weather stations, say, 50 or 100 kilometres apart over the land, and more dispersed over the sea, where you can measure all the properties of the atmosphere you want, but in between the weather stations, there is sufficient uncertainty such that as you compute forwards, that uncertainty can grow catastrophically, and, just like in the hard problem, with every time step, the uncertainty doubles, so a little bit of uncertainty soon is catastrophically large.

Of course, in the banking system, we have seen something rather similar recently. If you put a little bit of uncertainty into what the structure of complex financial instruments is, what they are linked to and what the long-term consequences will be, the uncertainties can grow exponentially, and very quickly, when you are in the wrong environment.

This type of property of things has appeared in the movies, in Jurassic Park, and it is almost part of 20th Century life, but it was first conceived and recognised by James Clerk Maxwell back in the 1870s, in Cambridge, when he gave a little informal evening talk to some friends in Trinity College. Here he gave a very simple example, taken straight out of the Victorian Industrial Revolution. The example was the railway point: that if you make a little change in the point lever at one point, you will create a very large divergence between where the train would have gone and where it will now go.

We can get a better idea of this if we look at a very simple world in which this property exists. We can imagine a completely predictable world which just moves a point around the circumference of this circle, like the hand on a clock.

The angle the point moves doubles at each step. So if it starts at ten degrees, the next step it moves by twenty, then it moves by 40, 80, 160, 320, 640, and so on forever, so once it gets past 360 degrees, it wraps around again, just like a real clock. The remarkable thing about this world is that if you know the initial position precisely, after any number of applications of the law of nature, the doubling law, you will know precisely where the point is. So this universe is deterministic in principle, but it is not deterministic in practice, because in practice, there will always be some uncertainty in the initial location of that point on the circumference, and each time you apply the doubling law, that uncertainty will double as well. So if it starts as one degree, it goes to two, to four, to eight, to 16, to 32, to 64, to 128, 256 and then with the next step the uncertainty is bigger than 360 degrees, and although it is completely deterministic, you do not know where the point is on the circumference of the circle, because the uncertainty has grown so dramatically. In fact, on this example here, if you pick this uncertainty to be the size of a single atomic radius, after about forty applications of this rule, you would not know where it was on the circumference of this circle. So doubling moves very fast and amplifies uncertainty, and means that if, after forty steps, you still want to have a reliable position, you have to specify the initial position to fabulous accuracy, to a fantastic number of decimal places, and eventually, that defeats you.
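The doubling world is simple enough to try out directly. The following is a minimal Python sketch, with function names invented for illustration: one function applies the doubling law with wrap-around at 360 degrees, and the other counts how many doublings it takes for an initial uncertainty to exceed the whole circle.

```python
def double_angle(angle_deg):
    """Apply the doubling law once, wrapping around at 360 degrees."""
    return (2 * angle_deg) % 360

def steps_until_lost(uncertainty_deg):
    """Count doublings until the uncertainty exceeds the full circle."""
    steps = 0
    while uncertainty_deg <= 360:
        uncertainty_deg *= 2
        steps += 1
    return steps

# Follow a point that starts at ten degrees.
angle = 10
for step in range(7):
    print(step, angle)
    angle = double_angle(angle)

# How many steps does a one-degree uncertainty survive?
print(steps_until_lost(1))
```

Starting from one degree, the uncertainty passes 360 degrees on the ninth doubling, matching the sequence 1, 2, 4, ... 256 described above.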

The worry about this situation of course is that this might infect your system of predicting the future but you will not know about it. So you can go on predicting and you will have wonderfully precise looking numbers, to as many decimal places as you choose, and the algorithm will keep doing that, but after a while, it is all nonsense; it is just noise. So if you do not know that this is a property of your system, it can lead to real disaster, or even unreal disaster.

You will notice that the culprit in all of this is the number two. If that number was one, then the uncertainty would stay exactly the same at each step and would never grow. If that number was less than one, your prediction would get better and better; if it was a half, the uncertainty would halve at each step. But it is because it is bigger than one that things go so horribly wrong.
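The role of that number can be put in one line. As a sketch, write m for the factor applied at each step and δ₀ for the initial uncertainty (both symbols are labels introduced here, not notation from the lecture). Then the uncertainty after k steps is:

```latex
\delta_k = m^{k}\,\delta_0
```

With m = 2 this grows without limit, with m = 1 it stays at δ₀ forever, and with m = 1/2 it shrinks towards zero.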

At this point, you might worry that the game is up for all mathematical prediction in the world, because of this problem. The molecules in this room, as they bounce around off the wall and off your head and off each other, have this property, and the uncertainty in a molecular collision does not increase by doubling each time; it increases by many hundreds, four or five hundredfold each time there is a collision. So you might wonder how we can possibly know anything about the gas in this room, because each collision between molecules has this awful amplifying property.

Well, we do know things about the gas in this room. If you were paying attention at school, in chemistry and physics, you may remember Boyle's Law, or more precisely the combined gas law that extends it: that the pressure times the volume divided by the temperature of a gas, like in this room, stays constant. Change the volume of the room, bring the wall in, put the heaters on, change the temperature and this quantity will still stay the same. It is a very simple relation. So how does it square with the fact that everything is chaotic?

Well, look at the quantities in Boyle's Law. The temperature, for example, is a measure of the average speed of the molecules, the pressure is the average force per unit area as they hit the walls or they hit you, and the volume is a large scale property. So what this illustrates is that although the individual motions are chaotically unpredictable, their average properties need not be. Therefore, the average behaviour of the molecules obeys a very well-determined and predictable law. This is often, although not always, the case for chaotic systems. The average behaviour is slow and predictable and well-behaved, even though the individual ingredients are not.

The last topic I want to show you is to do with money and mathematics and it is price indices. What is the Retail Price Index? Well, somebody goes along, say on the 1st of August, and buys a collection of representative goods - a gallon of petrol, a kilogram of fish, a pint of milk, and so on - and tells you what the price is. They then come back to the same place in a year's time and do the same thing, and compare the prices. Therefore, you have to take an average to work out the price.

The interesting thing is that there are different ways of taking an average. What we call the average in ordinary parlance is where, supposing we buy N things, we add up the prices and we divide by the number of things, N.

So if we buy two things, 10p for a chocolate bar and 50p for a bottle of something else, (10 + 50) / 2 is 30p, so that would be the average, or the arithmetic mean, as mathematicians call it.

But there is another way of working out the average price of what you have bought and got in the basket, and it is what mathematicians call the geometric mean. Here, you multiply the N prices together and take the Nth root.

$(p_1 \times p_2 \times p_3 \times \cdots \times p_N)^{1/N}$

So if you had two prices, you would multiply them together, the 10 and the 50, so you have got 500, then you take the square root, which is something a calculator will easily work out for you: about 22.4p.
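The two kinds of average can be compared side by side. This is a minimal Python sketch using the chocolate bar and bottle prices from above; the function names are chosen here for illustration.

```python
import math

def arithmetic_mean(prices):
    """Add up the prices and divide by how many there are."""
    return sum(prices) / len(prices)

def geometric_mean(prices):
    """Multiply the prices together and take the Nth root."""
    return math.prod(prices) ** (1 / len(prices))

basket = [10, 50]  # prices in pence

print(arithmetic_mean(basket))
print(round(geometric_mean(basket), 2))
```

For this basket the geometric mean comes out below the arithmetic mean of 30p.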

There are a number of interesting things to say about this. In America, for example, in the early Seventies, the American Government changed from using the arithmetic mean to work out their price index to using the geometric mean. Why would they do that? Well, there was one political reason: the arithmetic mean is always bigger than the geometric mean, so you always appear to have more inflation if you are basing a system on the arithmetic mean.

To see that, suppose you had just two prices, X and Y. Take X minus Y and square it: the result, X squared plus Y squared minus two times XY, can never be negative. Add four times XY to both sides and you find that X plus Y, all squared, is at least four times XY; take the square root and a half of X plus Y is always bigger than or equal to the square root of XY. So the arithmetic mean of X and Y is always bigger than or equal to the geometric mean of X and Y. It is only equal when X and Y are the same.

$0 \le (X-Y)^2 = X^2 + Y^2 - 2XY$

So, $\tfrac{1}{2}(X+Y) \ge \sqrt{XY}$

So that is the sort of thing politicians like: if you want to suppress the illusion of inflation, use the geometric mean - never use the arithmetic mean.

Suppose we make our index that is going to compare the mean in 2008 with what it was in 2007, to see if that number is bigger than one or less than one. If you started to do this with the arithmetic mean, you soon realise that it is a bit of a nonsense, because the prices that you want to use might be the price of milk per pint, the price of meat per kilogram, the price of petrol per gallon, the price of something else per ounce - they have all got different units, so if you try to add them together, it does not make any sense. It is like adding apples and oranges and cakes - they are all different, and so the unit of the arithmetic mean is not well-defined. You cannot add price per unit volume to price per unit weight.

But the geometric mean has a wonderful property which is not present in the arithmetic mean. Here is the failing arithmetic mean:

So the price for milk (which I have taken here as 42 pence) is a price per pint, beef is per kilogram and petrol is per litre. Try to work out the arithmetic mean in the two years, 2008 and 2007, and you cannot, because the units are different; but work out the geometric mean, and the units all cancel when you compare the two years, because the prices are multiplied together rather than added. This can be seen from looking at that sum here:

So in a geometric mean you can combine quantities with any units you wish, and if you use the same units the following year as you did in the first year, they will all cancel out and it works beautifully. So knowing something about means tells you something about prices, how to suppress inflation, but also how to use the best possible indicator to enable you to combine quantities that have all sorts of different units.
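The cancelling of units can be checked numerically. In the minimal Python sketch below, every price except the 42 pence for a pint of milk is a made-up illustrative value, not a figure from the lecture. The index is the mean for 2008 divided by the mean for 2007, and the test is whether it changes when milk is quoted per litre instead of per pint (taking one litre as about 1.76 pints).

```python
import math

def arithmetic_mean(prices):
    return sum(prices) / len(prices)

def geometric_mean(prices):
    return math.prod(prices) ** (1 / len(prices))

def price_index(mean, this_year, last_year):
    """Ratio of this year's mean price to last year's."""
    return mean(this_year) / mean(last_year)

# Pence for [milk per pint, beef per kilogram, petrol per litre].
# Only the 42p for milk in 2008 comes from the lecture; the rest are hypothetical.
prices_2007 = [38.0, 650.0, 95.0]
prices_2008 = [42.0, 700.0, 110.0]

PINTS_PER_LITRE = 1.76  # approximate conversion

def milk_per_litre(prices):
    """Re-quote the first item (milk) per litre, leaving the others alone."""
    return [prices[0] * PINTS_PER_LITRE] + prices[1:]

for mean in (arithmetic_mean, geometric_mean):
    per_pint = price_index(mean, prices_2008, prices_2007)
    per_litre = price_index(mean, milk_per_litre(prices_2008),
                            milk_per_litre(prices_2007))
    print(mean.__name__, per_pint, per_litre)
```

The geometric index is the same whichever unit is used for milk, up to rounding, because the conversion factor appears in both years and divides out. The arithmetic index shifts when the unit changes.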

But I am afraid that time has run out for today. Next time, I am going to tell you about one particular topic - surfaces, boundaries, edges, what you should do if you want to minimise them or maximise them, and the examples you find in nature.

©Professor John Barrow, Gresham College, 9 October 2008

This event was on Thu, 09 Oct 2008
