The Next Big Questions

For each exciting advance or discovery that takes place in Astronomy, other, equally important questions either arise or remain unanswered. In my last Gresham lecture I shall review what the near future might bring – the exciting space missions, satellites and telescopes – and the fundamental scientific challenges they are designed to tackle.

01 April 2015

Professor Carolin Crawford

Introduction

Lord Kelvin is alleged to have said: ‘There is nothing new to be discovered in physics now. All that remains is more and more precise measurement.’ His timing was a little unfortunate – he made this statement only a few years before the early 20th century ushered in new eras of relativity and quantum mechanics. Today’s astronomers are rather more cautious; we are only too aware that we have yet to comprehend what makes up a whopping 95% of the Universe. Given how recently we have discovered the effects of dark energy, or the huge variety of worlds beyond our own Solar System, we know that there are (to quote Donald Rumsfeld) plenty of ‘unknown unknowns’ out there: the things we do not know that we do not know. And indeed, many scientists actually bank on there being plenty of such exciting developments left to discover.

Astronomy is a discipline that brings together the sciences of maths, physics, geology, chemistry (and nowadays, even biology) in an attempt to tackle some of the most basic questions. It is also the science of extremes – giving us an opportunity to test the laws of physics under extreme conditions of density, pressure, time, distance, temperature, mass, size… extremes that we cannot begin to sample in lab conditions here on Earth. It is this potential to answer some of the most fundamental questions in science that draws young students, experienced researchers, and the general public to the subject.

In my four years of Gresham lectures, I have already spoken at length about some of the most obvious outstanding problems, where observations are in dire need of further explanation and interpretation, and all of which are areas of active research. For example:

· What particles can account for the mysterious dark matter that outweighs ‘ordinary’ luminous material by a factor of five: matter which, although it has mass (and therefore gravity), does not interact with electromagnetic radiation in any way. This is a focus for a whole variety of experiments around the world, from the newly restarted higher-energy Large Hadron Collider to the LUX detector (see The search for dark matter).

· The cause of the accelerated expansion of the Universe, which has been occurring since the cosmos was half its present age. This dark energy comprises 75% of the contents of the Universe (see The age of the Universe) but both its nature and its consequences for the ultimate fate of the Universe are still a matter of speculation. This is a problem which could be solved in the relatively near future by determining the regular periodicity in the density of the visible matter across space on the largest scales (known as the study of baryon acoustic oscillations), by gravitational lensing, and by continuing to trace and extend the relationship between velocity and distance to further parts of the Universe. We may have to wait for such missions as the European Space Agency’s Euclid (planned for launch in 2020), or NASA’s WFIRST (due for launch in 2024) before we can expect a big breakthrough.

· The true manner in which energy is released to create the most explosive, but random, events in the Universe, such as the gamma-ray bursts and fast radio bursts (see The transient Universe).

· What the very first stars and the very first galaxies look like, how and when they form and how they change the intergalactic medium around them (see The early Universe). Any major advances in direct detections are probably best left to the next generation telescopes, such as the ESA/NASA James Webb Space Telescope (due for launch in 2018), which will work exclusively at infra-red wavebands.

· What physical processes generate the widely-observed narrow jets of highly-energetic charged particles squirting out along the rotational axes of black holes of all sizes (see Quasars).

· And even closer to home, why so many exoplanet systems are so unlike our own (see Exoplanets… and how to find them).

How discovery happens

Although having such ‘big’ questions to answer motivates so many of us in our research, perhaps the biggest challenge for any scientist lies in asking the right questions in the first place, and in selecting those which we believe we have a hope of solving. In my (admittedly, biased) view, the big developments in astronomy are driven more often by the observations than the theory. For example, the largest step in understanding and proving ideas about the structure of the Solar System had to await Galileo’s observations of the phases of Venus, the mountains on the Moon and the moons of Jupiter. It is always new technological developments – in telescope design, detectors and data analysis and collection – that enable the discoveries that in turn stimulate the advance of Astronomy. This is why astronomers always have an eye on how to build more powerful telescopes, to see fainter, further out into the Universe and then to be able to study what we do see in more detail (see Large telescopes and why we need them).

New discovery space is opened up not only by technological advances in the telescopes and detectors but also as new windows in waveband are explored; and many of the advances in ‘new’ astronomies such as infra-red, X-ray, ultraviolet and gamma-ray astronomy had to await progress in launching telescopes and detectors above our atmosphere. Most of the electromagnetic spectrum has now been opened up to observation. The next revolutionary frontier is expected to be ‘time domain’ astronomy (see The transient Universe) with large survey telescopes mapping changes in the sky from one night to the next. Currently we have the Gaia satellite mission monitoring the whole sky, and along with future telescopes such as the LSST and the SKA this means we will no longer have to rely on chance to be looking in the right place to catch a transient event such as a supernova exactly as it happens.

It is also important to be able to recognise a major discovery when it happens - knowing what is ‘normal’ makes it possible to recognise that you have observed something unusual and ‘new’. Sometimes it is those data that seem to make absolutely no sense in terms of what you were expecting to observe that have the potential to completely change the current paradigm. For example, the first exoplanets were discovered because of a regular variation in the pulses emitted by a neutron star, and the first signal of an exoplanet around a normal star could only be interpreted if the planet were completely unlike any in our own Solar System in terms of its mass, size and location. In this way luck has always had a role in the progress of all science, including astronomy – right back to the discovery of new wavebands (such as X-rays by Röntgen in 1895 and the infra-red by Herschel in 1800); the discovery of pulsars by Bell and Hewish in 1967 (where the recognition of a signal as ‘not normal’ made all the difference); and the accelerated expansion of the Universe by Perlmutter and Schmidt and their collaborators, who were initially seeking to measure a deceleration.

Unification of the forces

There are, of course, regions of the Universe that will remain hidden from us for the foreseeable future, despite all advances in technology and facilities - regions that we know to exist, but which we cannot explore properly without a coherent framework of physics. Here I refer to regions containing extreme states of matter, because huge amounts of material are squeezed into quantum-sized volumes, such as will be found in the tiny, ultra-hot version of the Universe that existed just after the Big Bang, and at the cores of neutron stars and black holes. Although we know that atoms break down into the most fundamental of elementary particles (known as quarks) under conditions of extreme energy, it is quite possible that at even greater temperatures and densities (billions of times greater than we can measure in a laboratory situation) matter might change into a completely new form which obeys different laws of physics. Such regions remain inaccessible not only to observation but to theory because of the difficulty we have in ‘unifying’ the forces. For many years astronomy remained separate from much of mainstream physics, as it relies on the laws of general relativity which accurately describe gravity, the dominant force on cosmic scales; but these laws are incompatible with those that describe the quantum world. The difficulty thus arises where we have very powerful gravitational forces operating over a short distance.

Ideally, scientists would prefer to use a single theory for all situations, rather than employing separate descriptions of the relativistic and quantum worlds. Such a theory has proven elusive, despite the many lines of theoretical progress that are being explored. There are difficulties inherent in the way that gravity is a continuous and embedded property of space-time, whereas the other forces operate in discrete packets or quanta. But now the resolution of astronomical problems of dark matter and dark energy will bring both astronomical and quantum disciplines into direct conversation, and thus they hold the greatest potential for indirectly solving the question of how we unify the forces.

Are there additional dimensions?

One such attempt to unify the relativistic and quantum – known as ‘M-theory’ – predicts there may be even more inaccessible regions of the Universe, where further dimensions lie out of our reach. Beyond the three dimensions of space that we can readily perceive, and the dimension of time which is necessary to fix the occurrence of any event, there could be several other dimensions. It is possible that these extra dimensions are coiled up, squeezed into the tiniest (ie subatomic) scales that we cannot access, and that we shall be unlikely to be able to ever detect them directly. The question is whether we might ever be able to detect their presence indirectly: one suggestion is that gravity is so much weaker than the other fundamental forces precisely because it alone of the four forces leaks into the extra dimensions. Thus if it were mediated by a messenger particle like the other forces, hypothesized as the ‘graviton’, it should be possible to create such gravitons in high-energy particle collisions. These could then be traced only through an imbalance in the energy and momentum measured into and out from the collision event, and the deficit assumed to be carried away by a graviton as it escapes rapidly into one of the extra dimensions. The theory predicts that heavier versions of all standard particles exist, so another approach could be the discovery of these massive counterparts in high-energy collisions in particle accelerators.

What triggered the big bang?

Only when we have a unified framework can we begin to tackle one of the most basic, yet currently the most speculative, questions: what came ‘before’ the Big Bang, and how the mass and energy of an early Universe could erupt spontaneously out of nothingness. M-theory, which proposes that our Universe is only a 4-dimensional pocket within a much greater multidimensional reality, suggests that it could have arisen out of the collision of two such multidimensional spaces, which produces an eruptive expansion in only some of the possible dimensions. (Though of course this does raise the question of when and how the pre-existing spaces came about, and how often they might be expected to collide.) Scientists are working actively on these ideas - and many other models besides, such as string theory - trying to see if they can predict the consequent period of inflation. But all of this is still very far from what might be realistically regarded as ‘normal’ science, as it is so far removed from anything that can as yet yield predictions to be compared with observations and collected data.

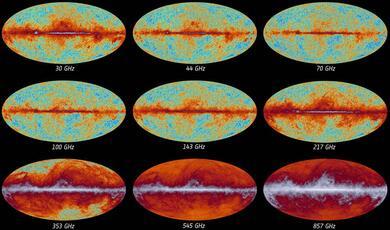

Regardless of what triggered the original event we refer to as the Big Bang, the very first phases of the Universe still remain hidden from us. The earliest light we can see is, of course, the light from the cosmic microwave background, released when the first atoms began to form, leaving space transparent for the first time (see Echoes of the Big Bang). At this stage the Universe was already 380,000 years old, and a lot had already happened that for now must remain in the realms of conjecture as it is completely hidden from direct observation. Currently it can be explored only by theory and thought experiment in the hope that the theories can make predictions that one day prove observable. One epoch in particular that we are keen to seek evidence for is a flash of exponential expansion known as inflation. This marks the period when the Universe expanded away from a quantum size, doubling in size every 10⁻³⁴ s, at least a hundred times over, from when the Universe was less than 10⁻³² s old. We require such a period of rapid inflation to have happened to explain why the Universe appears uniform in all directions, and why the curvature of space is flat (see Echoes of the Big Bang). In the last couple of years there were reports that an observational signature of the inflationary period had been discovered in the BICEP-2 experiment, from a pattern within the polarised light of the cosmic microwave background indicative of gravitational waves set in motion by the incredibly fast expansion of space. New results from ESA’s Planck mission, however, have suggested that the pattern detected by BICEP is confused by the effect of intervening dust grains in our own Milky Way, which can also produce a pattern of polarised light if they become aligned along the direction of magnetic fields within our galaxy. Planck observes more of the sky in more colours, and thus can disentangle this contribution to the overall light better than BICEP.

This new conclusion does not mean that the theory of inflation is wrong (and if it were, we would have difficulty explaining many observed properties of the Universe!), only that we have not found observational proof of it yet. It is possible that there is still a remaining polarisation signal, but it is too weak to be considered as a proper detection. Even though proof of inflation has receded for the time being, many experiments continue to collect improved data, hoping to hunt it down unambiguously in the near future.
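
As a rough check on the inflation figures quoted above, the short Python sketch below multiplies out a hundred doublings at one doubling every 10⁻³⁴ s. The function and variable names are purely illustrative, and the inputs are treated as exact only for the sake of the arithmetic; the lecture gives them as approximate lower limits.

```python
# Back-of-the-envelope arithmetic for the inflationary epoch described above.
# Assumed inputs (taken from the text, treated as exact for illustration):
DOUBLING_TIME_S = 1e-34   # time for the Universe to double in size
N_DOUBLINGS = 100         # "at least a hundred times over"

def inflation_growth(n_doublings: int) -> int:
    """Linear growth factor after n successive doublings in size."""
    return 2 ** n_doublings

def inflation_duration(n_doublings: int, doubling_time_s: float) -> float:
    """Total elapsed time, in seconds, for n successive doublings."""
    return n_doublings * doubling_time_s

growth = inflation_growth(N_DOUBLINGS)
duration = inflation_duration(N_DOUBLINGS, DOUBLING_TIME_S)
print(f"Growth factor in size: {growth:.2e}")
print(f"Duration: {duration:.1e} s")
```

On these assumptions, a hundred doublings stretch every length by a factor of roughly 10³⁰ in about 10⁻³² s, which gives a sense of why a quantum-sized patch could end up looking smooth and flat on the scales we observe.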

Where is all the anti-matter?

The problems do not just cease after the period of inflation. When the Universe is a trillionth of a second old, and the temperature still about 10 trillion degrees, the Universe is full of energy rather than matter, filled with virtual particles rather than real particles. The energy available determines which pairs of particles and anti-particles are created, and they rapidly return to pure energy when they annihilate each other. But this picture still leaves another big question – that of why then there is still ‘stuff’ around us.

Everything in our Universe is made of atoms, and the protons, electrons and neutrons of which they are composed. Anti-matter is a mirror image of this normal stuff - the same in every way, but with all the electrical charges reversed. According to our current ideas, there should have been so much energy in that initial millisecond or so after the Big Bang that numerous pairs of particles were created, each pair consisting of a particle of matter and its antimatter counterpart. The ability to create these particle pairs diminishes as the universe expands and cools, and the amount of energy available drops. If a particle of matter meets its antimatter partner they annihilate each other to turn back into pure energy. As matter and antimatter should have been created in equal quantities, we would thus expect that by now all the matter should have long since been annihilated through encounters with antimatter to leave only energy – instead of the stars, galaxies and clusters we actually observe! As this is clearly not the case, there are two possibilities. Either the two counterparts have yet to encounter each other, suggesting that remote regions of the cosmos are very much polarised into reservoirs of matter and of anti-matter which have yet to meet; however, such an idea is at odds with the evidence that no part of the Universe seems to have radically different properties from another. Alternatively, it could be that more matter was created than anti-matter in the first place, and thus that although the possible annihilations have happened, the matter and antimatter did not cancel each other out completely. One of the major aims of the new LHC is to reproduce conditions as close as possible to the high-energy conditions in the early Universe; we can then search for any inherent asymmetry in the pairs produced that could mimic what might have happened in the Big Bang to leave us in a matter-dominated state.

The multiverse

To add to the confusion, many scientists are now exploring one very fashionable (and as yet, completely hypothetical) concept known as the multiverse, where the Universe we occupy and observe is only one of an infinite set of possible parallel, or alternate, universes that all patchwork together to form the full multiverse. These could be a series of universes that are cyclic in time, or they could arise out of regions of restricted inflation occurring between multidimensional spaces. Theories of eternal inflation propose that most of the Universe continues to grow through inflation, leaving behind distinct bubble universes in pockets that drop out where and when this inflation ceases to operate. These other universes could completely resemble our own with similar laws of physics, but perhaps evolving from very different initial conditions – or they could be universes operating under completely different fundamental laws of physics.

For some, the multiverse theory emerges naturally from the anthropic principle, ie the assumption that among infinitely many possible universes we will naturally occupy the one that is, by chance, finely-tuned to support life. It seems unlikely that we could ever be able to confirm or deny whether we are part of a multiverse, and indeed many people argue that such speculative matters are more philosophy than ‘true’ science that yields testable predictions. The ideas explored in experimenting with the metaphysical questions may well yield great dividends for our understanding of physics, but only in decades (or even centuries) to come. Astronomy is truly a ‘blue sky’ science, where most fundamental breakthroughs only begin to leak through to make an impact on everyday life much further into the future.

The observable universe

To verify proposed answers to the big questions we need data, which in turn is essentially limited by what we can observe. Not only are we limited by the available technology employed in our telescopes and detectors, but the amount of stuff we can observe is still fundamentally restricted. First of all, there is the big problem that only about 5% of the Universe is actually luminous. But then the amount of that light we can ever hope to see is also limited by the expansion of the Universe, which is currently occurring at such a rate that distances in the universe stretch by about 0.007% every million years… and this rate of expansion is changing with time as well. This is a cumulative effect, which adds up rapidly such that once parts of the Universe are about 10¹⁰ light-years apart, they are moving away from each other faster than the speed of light. The Universe is so big that there are plenty of regions that are now moving away from us faster than the speed of light. This is perfectly permissible - although no signal, information or matter can move through local space faster than the speed of light, space itself is able to stretch at this rate and more.
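
The link between the quoted stretch rate and the distance at which recession reaches the speed of light can be checked with a few lines of Python. This is only a sketch: it assumes the rate stays constant (a simple linear relation between distance and recession speed), whereas the text notes that the rate changes with time, and the function names and unit-conversion constants are supplied here for illustration.

```python
# Rough estimate of the distance at which the recession speed equals the
# speed of light, using the stretch rate quoted in the text.
# Assumption: the rate is treated as constant (a simple linear Hubble law).
STRETCH_PERCENT_PER_MYR = 0.007          # from the text
SECONDS_PER_MYR = 1e6 * 365.25 * 86400   # a million Julian years, in seconds
KM_PER_MPC = 3.0857e19                   # kilometres in one megaparsec

def hubble_distance_ly(stretch_percent_per_myr: float) -> float:
    """Distance (light-years) at which the recession speed reaches c."""
    fraction_per_myr = stretch_percent_per_myr / 100.0
    # Recession speed = fraction_per_myr * distance per Myr, while light
    # covers 1 Mly per Myr, so they match at 1 / fraction_per_myr (in Mly).
    distance_mly = 1.0 / fraction_per_myr
    return distance_mly * 1e6

def hubble_constant_km_s_mpc(stretch_percent_per_myr: float) -> float:
    """The same rate expressed in km/s per megaparsec."""
    rate_per_second = (stretch_percent_per_myr / 100.0) / SECONDS_PER_MYR
    return rate_per_second * KM_PER_MPC

d_ly = hubble_distance_ly(STRETCH_PERCENT_PER_MYR)
h0 = hubble_constant_km_s_mpc(STRETCH_PERCENT_PER_MYR)
print(f"Recession reaches light speed at about {d_ly:.2e} light-years")
print(f"Equivalent expansion rate: about {h0:.0f} km/s/Mpc")
```

Under this constant-rate assumption the crossover comes out at a little over 10¹⁰ light-years, the same order of magnitude as the figure given above.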

Observable horizon

We observe any galaxy through the light that it emitted billions of years ago. Even if a galaxy is in reality receding from us faster than the speed of light now, the light we see dates from an epoch when it was not moving away from us faster than the speed of light. Even though space has been stretching all the while that the photon has been travelling towards us, it has been able to outrun the expansion of space to reach us (the light does not care about what is happening to the galaxy it has left behind). But it is very different for any light that is leaving the galaxy now, as it will have to cross a vastly wider expanse of space to get to us, and it can no longer outrun the expansion of the Universe. For each and every galaxy receding from us, there will be a point (maybe billions of years in the future) when there will be a very last photon that can make it through to us. We have no suggestion that the expansion of the universe might cease or reverse. Thus an increasing portion of the universe will become invisible - if we are going to be watching distant galaxies billions of years in the future, there will come a time when each will simply fade and disappear from our sight in turn, the furthest fading first. Already there are large portions that are too far away for their light ever to reach us, and the edge of this ‘observable horizon’ is the distance at which the expansion currently proceeds at the speed of light; our observable horizon will shrink closer and closer to us over time.

Currently the observable horizon is huge, so this is not an immediate concern! But within this observable horizon, currently we still rely on the detection of light in order to study what lies beyond the edge of the Solar System. Even the distribution and amount of the invisible components of our universe, such as dark matter and dark energy and compact objects such as black holes, is inferred from the way that they pull and push around the radiating matter. But advances in the next few decades may give us other ways to observe the contents of the Universe… ways which do not rely on light.

Gravitational waves

Light waves are created by moving electric charges. Gravitational waves are caused by masses in motion – and they carry information about the change of a gravitational field with time. They arise naturally out of a wave solution to the field equations for General Relativity: GR describes gravity as the way matter moves through space that is warped due to the presence of massive objects within it. A stationary mass creates a fixed warped shape. But if the mass begins to move, the curvature of the surrounding space-time has to change, and disturbances to it spread outwards like ripples on the surface of a pond, expanding away at the speed of light and carrying away the information about the change in the shape of space. Unlike other kinds of waves, gravitational waves do not travel "through" space-time as such, but are travelling distortions in the geometry of space itself. To create a gravitational wave, a mass has to move and to accelerate: changing the rate of motion or the direction of motion, or both. This motion, however, cannot be spherically or cylindrically symmetric, which rules out symmetric spinning, expanding or contracting. For example, a dumbbell would not produce gravitational waves when spinning around its vertical axis, but would if it tumbled end-over-end. The heavier it is, or the faster it tumbles, the more gravitational radiation is emitted. How much gravitational radiation is emitted strongly depends on both the mass and the speed of motion, and the waves only become significant (ie supposedly detectable) when very extreme masses are moving close to the speed of light. Thus potential sources of gravitational waves could be: two massive objects (such as neutron stars or black holes) in orbit around each other, and also when they collide and coalesce; any non-axisymmetric object that is spinning; or a supernova that expands unevenly.

Cosmic gravitational waves should be emitted by, and carry detailed information about, some of the most violent occurrences in the Universe.

The most immediate benefit is that when gravitational waves are detected, not only will another prediction from GR pass an important test, but it will open up the exciting possibility that through these waves we could observe gravity itself directly for the first time. At the moment, we only observe the light radiated from objects whose movement is controlled by gravity. In contrast, gravitational radiation can carry information about the universe that we cannot gather in any other way, because unlike ordinary electromagnetic waves which are modified by intervening matter, gravitational waves can pass through solid objects, enabling us to penetrate regions that tend to be obscured by surrounding matter.

When a gravitational wave passes through a certain bit of space-time, it stretches space (and everything in it) in one direction, and then compresses space (and everything in it) in a perpendicular direction, producing a rhythmic signature of ‘stretch and compress’ as the wave passes by. The distance between free-floating objects will accordingly expand and contract, which gives us a way of detecting a passing wave by repeatedly measuring the distances between a variety of locations. The expected observational signature will then be when distances momentarily change in one part of an experiment in a way that is out of phase with another part of the experiment perpendicular to it. The problem is that the distortions such experiments are looking to detect are minuscule, and it is a huge technological challenge to detect them. Searches for gravitational waves have been made for five decades, but as yet there is no convincing evidence that they have been seen. This may yet change in the next few decades. For example, facilities due to come online include Advanced LIGO (an updated version of the Laser Interferometer Gravitational-Wave Observatory), which is scheduled to be operational in mid-2015; and the Evolved Laser Interferometer Space Antenna (eLISA), which is a proposed space mission concept chosen as the L3 mission of the European Space Agency with a tentative launch date in 2034. A proof-of-concept mission, LISA Pathfinder, designed to demonstrate the technology necessary for a successful full mission, is due for launch in September 2015. The full mission will measure gravitational waves directly by using laser interferometry between three spacecraft flying in an equilateral triangle, separated from one another by one million kilometres.

Neutrino astronomy

Neutrinos are energetic, but electrically neutral, particles that carry barely any mass. They flood the Universe – the numbers estimated are over 100 per cubic cm - and are readily emitted from nuclear reactions such as fusion, or radioactive decay. If we were able to detect them reliably, they would give us an alternate view of the Universe. With light we are observing only the surface of cosmic objects. Neutrino astronomy provides a way to trace processes occurring within otherwise inaccessible regions: such as those that occur in the heart of ordinary stars; or in supernova collapse where neutrinos remove much of the infall energy within a few seconds; or those that were created at the time of the Big Bang to form a kind of alternative cosmic background radiation. The path of the neutrinos from source to us is not impeded by intervening gas, dust and magnetic fields.

Even though there are vast quantities raining onto Earth every second, neutrinos are difficult to catch as they only interact weakly with matter. So far neutrino astronomy has been mainly limited to the detection of neutrinos from the Sun, and from Supernova 1987A about 170,000 light-years away in the Large Magellanic Cloud. There is enormous potential from detecting much higher energy neutrinos, which are expected to be given off by active galactic nuclei and gamma-ray bursts – any place where particles are accelerated to enormous energies. So far only a score of high-energy neutrinos have been detected which can be assumed to originate from extragalactic sources, but given that the probability of interaction within a detector increases as the square of the neutrino’s energy, these offer the most hope for detection in the short-term. Although neutrino astronomy is in its infancy, we expect that in the next couple of decades it will truly open up a completely new window onto the Universe.

How do galaxies grow?

I have already detailed the challenges of looking for galaxies in the very early stages of formation (see The early Universe) but even once a galaxy is formed there are many challenges to understanding how it develops. In particular, the symbiosis of the galaxy and the supermassive black hole at its core is not well determined. First of all, we are surprised to find very massive black holes already fully-formed in the early Universe, such as one discovered recently that has managed to grow to a mass of twelve billion solar masses within only 900 million years of the Big Bang. Normally where we see such supermassive black holes in the current Universe, we assume that so much mass has been accumulated by swallowing interstellar matter and merging with other large black holes over a long period of time. So not only is there the problem of creating such a black hole so quickly from the initial seed, but then we also have to account for the tight correlation between the mass of a black hole and the mass of the galaxy that hosts it. Central black holes may vary in mass between 10,000 and ten billion Suns, but their mass is always about 0.3% of the mass of the stars in the galaxy, implying that the black holes grow in tandem with their hosts. As alluded to previously (see The early Universe and Quasars) trying to understand this relationship is not trivial, and the cause most likely lies with feedback mechanisms, which suggest that the black hole can in some way influence the development of its host galaxy - and which could indeed be vital for regulating the process of galaxy formation, by limiting the growth of the surrounding host. The exact method by which a black hole’s energy halts star formation is currently a matter of great debate. As the black hole regulates the size of its surrounding galaxy it will also starve itself of fresh fuel, and its own mass will be regulated in turn.
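
To make the quoted correlation concrete, the small Python sketch below applies the 0.3% ratio to the two ends of the black hole mass range mentioned above. The ratio is taken from the text and treated as exact; the function name and sample values are only illustrative, and real galaxies scatter around any such relation.

```python
# Illustrative use of the quoted scaling: a central black hole has about
# 0.3% of the mass of the stars in its host galaxy. The ratio comes from
# the text; the function name and sample masses are chosen for illustration.
BH_TO_STELLAR_MASS_RATIO = 0.003

def implied_stellar_mass(black_hole_mass_msun: float,
                         ratio: float = BH_TO_STELLAR_MASS_RATIO) -> float:
    """Stellar mass (in solar masses) implied by a black hole of given mass."""
    return black_hole_mass_msun / ratio

for bh_mass in (1e4, 1e10):   # the range of black hole masses quoted above
    stars = implied_stellar_mass(bh_mass)
    print(f"Black hole of {bh_mass:.0e} Suns -> about {stars:.1e} Suns in stars")
```

Taken at face value, the ratio implies roughly 300 times as much mass in stars as in the central black hole, across six orders of magnitude in black hole mass.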

Star formation

It is not just a question of understanding galaxy formation properly; it is also essential to deconstruct the process of star formation, which after all is the mechanism that controls the structure and evolution of galaxies, and their chemical evolution (see The Lives of Stars). Star formation is a process which is currently completely enshrouded by dust and hidden from our view. All stars are believed to form within clouds of gas and dust that collapse under gravity. New insight and progress are being made already with the advent of observations from ALMA (the Atacama Large Millimeter/submillimeter Array): first of all this is revolutionising the study of the astro-chemistry of space by tracing the distribution and complexity of molecules in the interstellar clouds that are the raw material for star formation; but ALMA also has the potential to detail the exact process of gravitational collapse into star formation. For example, how much of the accretion process is funnelled from a disc, and can we actually detect the kinematic signature of infalling material? It is only within the infrared waveband that we can see the light emitted by the protostar while the surrounding material fuelling this collapse appears transparent.

Exoplanets and planetary formation

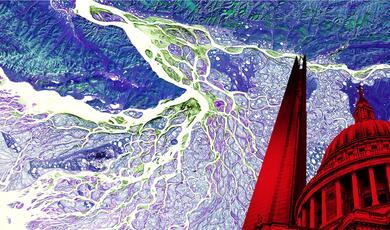

One area of active research is expected to yield big answers about planetary formation in general, and our own Solar System in particular, over the next few years. Protostars are surrounded by discs of material that then provide the raw ingredients for planetary formation. Over time, the surrounding dust particles stick together, growing into sand, pebbles, and larger-size rocks, which eventually settle into a thin protoplanetary disc where asteroids, comets, and planets form through collisions. Once these planetary bodies acquire enough mass, their gravity can dramatically reshape the structure of the surrounding disc. Planets sweep their orbits clear of debris to produce gaps in the disc, corralling dust and gas into tighter and more confined rings. ALMA has taken stunning data of a slowly-spinning disc of gas and dust around a very young version of the Sun, HL Tau, a star less than a million years old. The young star is completely invisible in the optical waveband, because it is surrounded by a completely opaque envelope of dust and gas. The ALMA image reveals structures that are for the first time comparable to the results of computer simulations of the formation and coalescence of material in the disc to form planets. Multiple concentric rings are seen to be separated by clearly defined gaps. However, this result was still a surprise, as such a young star was not expected to be surrounded by a disc in such an advanced phase of planetary formation – and so it is already changing our views of the formation of planets in the galaxy.

Not only that, but ALMA has also detected molecular gas in several protoplanetary discs that are at a later stage in their evolutionary process. One would expect such gas to be short-lived, so the only way for gaseous material to be present is if it is constantly replenished by the shattering of icy bodies in impacts within the disc. For example, the molecular gas found in the disc around the nearby young star Beta Pictoris has a mass and location consistent with being produced by the recent destruction of an icy body with a mass similar to that of Mars.

How unusual is an Earth like ours?

These discs are ultimately responsible for the creation of the planetary environments in which life in the universe has become possible. Exoplanet research has moved on enormously even since my Gresham lecture a couple of years ago, and we now know of (at least) 1900 planets in 1200 planetary systems, with plenty more exciting candidates awaiting follow-up confirmation.

From these exoplanets we begin to realise how our Solar System may be anything but ‘normal’. Cumulative results from the Kepler Space Telescope suggest that the most common type of exoplanet in our Galaxy is of a type not found in our Solar System. About 75% of the planets discovered have a mass between that of Earth and Neptune, and they are referred to either as ‘mini-Neptunes’ or as ‘super-Earths’. Their composition is as yet unclear. They muddle the divide between what we would traditionally regard as rocky and gas planets, and one possibility is that they are the remains of smaller versions of Neptune which have had most of their outer layers boiled away by the intense radiation from the very nearby parent star. Our search techniques are still not sufficiently refined to be able to detect a solar system like our own. Only future missions will really be able to probe the Sun-like stars in depth. Current searches focus much more on smaller, dimmer stars, because that’s where Earth-like planets are easier to find.

Another area where exoplanet research has advanced enormously – and which will continue to get more exciting – is that we can now not just discover exoplanets, but we can start to characterise their atmospheres.

As a planet moves between us and its host star, the way the starlight is filtered through the planet’s atmosphere reveals what gases are present, allowing determination of its chemical composition. Knowledge of these underlying molecules gives information about the planet’s composition and history. But now we can also learn about the climate and the weather on a select few exoplanets. Occasionally a lack of chemical signatures reveals that there must be a high-altitude layer of clouds that blankets the atmosphere, and which hides any information about the composition and nature of the lower layers. It is now even possible to obtain the temperature profile within the atmosphere across a planet - for example, WASP-43b is a planet the size of Jupiter but with double the mass, locked into an orbit lasting just nineteen hours around its star, with one side always facing inwards. Knowing the temperature maps, one can then start to constrain circulation models that predict how heat is transported from the exoplanet's hot day side to its cool night side. Characterising the atmosphere of even such apparently bizarre worlds provides a laboratory for better understanding planet formation and planetary physics in general.

Of course, the chase is on to instead find molecules in the atmospheres of smaller, rocky planets that more closely resemble Earth. The smallest exoplanet for which water vapour has been detected so far is HAT-P-11b, about the size of Neptune, which has been shown to be blanketed in water vapour, hydrogen gas, and other yet-to-be-identified molecules. It is this kind of observation that can help astronomers to piece together a theory for the origin of these distant worlds. Ideally we want to diversify our knowledge of the composition of exoplanets to include as wide a range as possible; and we are still working our way down from the hot Jupiters, through the mini-Neptunes, and towards the smaller super-Earths. In practice it is probably not until the JWST comes on line that we will be able to extend the search for signs of water vapour and other molecules to super-Earths. Detecting the presence of oceans on potentially habitable worlds is most likely a way off yet.

How rare is a habitable planet?

The statistics from Kepler data so far lead to an estimate that around one in five Sun-like stars have Earth-sized planets in the habitable zone. If this survey can be considered to cover a representative sample of our Solar neighbourhood, it means that there are a total of about 8.8 billion Earth-size planets that lie in the habitable zone around Sun-like stars in the Milky Way. Of course, many of these could be inhospitable for other reasons – if they have a thick atmosphere that produces a strong greenhouse effect, or a paucity of comets reaching the planet to deliver water to the surface. Still, the study certainly suggests that the chances are high that somewhere there could be a very Earth-like twin. With well over 100 Sun-like stars within 50 light years, the chance of a relatively ‘near’ Earth-like planet seems pretty high. If we expand our criterion to include stars that are smaller and cooler than the Sun, known as red dwarfs (which are far more common), then we might expect about 15% of these red dwarfs to have an Earth-sized planet at the right distance to have liquid water on its surface. Similarly, cold gas giants like Jupiter could have large, habitable exo-moons.
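The arithmetic behind these estimates can be sketched in a few lines. This is only an illustration using the rounded figures quoted above; the function names are hypothetical, and the last calculation assumes, purely for simplicity, that each star hosts such a planet independently of the others.

```python
# Figures quoted in the text (rounded).
HZ_EARTH_FRACTION = 0.2       # "around one in five" Sun-like stars
MILKY_WAY_HZ_EARTHS = 8.8e9   # habitable-zone Earth-size planets in the Galaxy
NEARBY_SUNLIKE_STARS = 100    # "well over 100" within 50 light years (lower bound)


def implied_star_count(total_planets, fraction):
    """Number of Sun-like stars implied by a planet total and an occurrence rate."""
    return total_planets / fraction


def expected_planets(n_stars, fraction):
    """Expected number of habitable-zone Earth-size planets among n_stars."""
    return n_stars * fraction


def prob_at_least_one(n_stars, fraction):
    """Chance that at least one of n_stars hosts such a planet.

    Assumes each star is an independent trial, which is a simplification.
    """
    return 1.0 - (1.0 - fraction) ** n_stars


stars = implied_star_count(MILKY_WAY_HZ_EARTHS, HZ_EARTH_FRACTION)
nearby = expected_planets(NEARBY_SUNLIKE_STARS, HZ_EARTH_FRACTION)
chance = prob_at_least_one(NEARBY_SUNLIKE_STARS, HZ_EARTH_FRACTION)

print(f"Implied Sun-like stars in the Milky Way: {stars:.2e}")
print(f"Expected candidates within 50 light years: {nearby:.0f}")
print(f"Chance of at least one within 50 light years: {chance:.6f}")
```

With the one-in-five rate, the 8.8 billion total corresponds to a few tens of billions of Sun-like stars, and even the lower bound of 100 nearby Sun-like stars gives an expectation of about twenty candidates, which is why a relatively near Earth-like planet seems so likely.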

Habitability and Comets

Although life takes many forms, we do know that on Earth it requires liquid water. A rocky planet might still not be habitable – even if it lies in the habitable zone around its star - if it has none of the volatiles (including water and carbon compounds) needed to form an atmosphere and liquid water at the surface. We believe that comet-like bodies bombard young planets at an early stage of their formation, and that this process is required to deliver most of the water and other molecules that might make a planet habitable. The bombardment by comets may in itself be an uncertain occurrence. Whether or not such volatiles are available and deliverable in other systems may be a very fragile balance, depending not just on the presence of a cometary cloud, but also on the mass, quantity and location of any gas giants. Too massive a giant planet would pull in a deluge of cometary material from that system’s outer regions, promoting such a heavy and continual bombardment that the planet is rendered uninhabitable; but without a giant planet, perhaps insufficient material reaches the inner parts of the planetary system, or it just forms a continual rain of comets over billions of years rather than one dramatic heavy bombardment event. Also, given the number of hot Jupiters we observe in close orbit around their host stars, which must have migrated there during the formation of that planetary system, a further question is how migration might affect the pulling in of the necessary icy supplies.

In our own Solar System

Of course, even the delivery of water that makes our own Earth habitable is not a ‘solved’ question. Even though we hypothesise the presence of a giant Oort cloud, forming a reservoir of cometary material at the very outskirts of our Solar System (see Comets; visitors from the edge of space), its presence is only deduced from mapping the orbits of the ‘new’ comets arriving fresh into the centre of the Solar System all the time - we have yet to actually detect it. Voyager 1 would need 300 years to reach the inner part of the Oort cloud, and then take about 30,000 years to travel through it (though of course none of its instruments will still be functioning by then!). A vast cloud of very small, very faint and very cold objects spread in every direction around the sky would prove very hard to detect, except perhaps through possible micro-lensing events, caused when an Oort cloud object moves in front of a background star to magnify the star’s light for a moment. Perhaps the new generation of survey telescopes and space missions might yet prove successful in finding this signature. We have found analogues of the Kuiper belt around other stars, but their Oort clouds may be too faint and cold to detect directly.
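The travel times quoted for Voyager 1 can be checked with simple distance-over-speed arithmetic. The sketch below is only a rough consistency check: the spacecraft's speed and 2015 distance, and the inner and outer edges of the Oort cloud, are all assumed round numbers rather than values from the lecture, and the real edges of the cloud are very uncertain.

```python
KM_PER_AU = 1.496e8
SECONDS_PER_YEAR = 3.156e7

# Assumed round numbers, for illustration only.
VOYAGER_SPEED_KM_S = 17.0    # approximate speed of Voyager 1
VOYAGER_DISTANCE_AU = 130.0  # approximate distance from the Sun in 2015
OORT_INNER_AU = 1_000.0      # assumed inner edge of the Oort cloud
OORT_OUTER_AU = 100_000.0    # assumed outer edge of the Oort cloud


def au_per_year(speed_km_s):
    """Convert a speed in km/s to astronomical units per year."""
    return speed_km_s * SECONDS_PER_YEAR / KM_PER_AU


def travel_years(distance_au, speed_km_s):
    """Years needed to cover distance_au at a constant speed."""
    return distance_au / au_per_year(speed_km_s)


years_to_reach = travel_years(OORT_INNER_AU - VOYAGER_DISTANCE_AU, VOYAGER_SPEED_KM_S)
years_to_cross = travel_years(OORT_OUTER_AU - OORT_INNER_AU, VOYAGER_SPEED_KM_S)

print(f"Years to reach the inner edge: {years_to_reach:.0f}")
print(f"Years to cross the cloud: {years_to_cross:.0f}")
```

With these inputs the results come out at a few hundred years and a few tens of thousands of years respectively, the same order as the figures quoted above.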

Even when you consider the origin of the water on Earth, that’s not straightforward. The most recent samples of cometary material obtained by the Rosetta spacecraft, currently chasing Comet 67P, yet again show that the water ice it contains is of a different ‘type’ to that found in the oceans on Earth. Rosetta has discovered that 67P has a ratio of heavy to ‘normal’ water three times greater than that in Earth’s seas (where ‘heavy’ water is made from atoms of hydrogen that contain an additional neutron in their nucleus, known as deuterium). So far measurements of this ratio have been made for 11 comets, only one of which has a ratio that matches that found on Earth. Either the comets bringing water arrived from much further out in the primordial Solar System than the comets we have been able to rendezvous with 3.8 billion years later; or instead icy asteroids were the primary source. Although asteroids have a much lower water content overall, that water’s composition is a much better match to Earth’s. Even so close to home, it is sobering to realise how much we have yet to learn about our own planet.
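The comparison of heavy to ‘normal’ water amounts to taking a ratio of two deuterium-to-hydrogen (D/H) values. As a minimal sketch, the snippet below uses approximate published values for Earth's oceans and for the Rosetta measurement of 67P; both numbers are assumptions brought in for illustration and are not quoted in the lecture.

```python
# Approximate deuterium-to-hydrogen ratios (assumed values, for illustration).
EARTH_OCEAN_D_H = 1.56e-4  # standard ocean water
COMET_67P_D_H = 5.3e-4     # Rosetta measurement for Comet 67P


def enrichment(sample_d_h, reference_d_h=EARTH_OCEAN_D_H):
    """How many times richer in deuterium a sample is than the reference."""
    return sample_d_h / reference_d_h


factor = enrichment(COMET_67P_D_H)
print(f"67P water is about {factor:.1f} times richer in deuterium than Earth's oceans")
```

A factor of this size is far larger than any plausible measurement error, which is why comets like 67P are hard to reconcile with being the main source of Earth's water.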

There has never been a more exciting time in Astronomy. Ideas – from the rate of expansion of the Universe, right down to the architecture of the planets in our Solar System – have to be continually updated in the light of new data streaming in. Yet there is so much left to learn, to discover, and there will continue to be, given the forthcoming generations of exciting new telescopes, missions, surveys and the vast amounts of data that they will generate - with improved resolution and detectability that will enable us to delve deeper in all possible wavebands.

But not all ideas go out of date. And I shall end this lecture with the final paragraph of Edwin Hubble’s The Realm of the Nebulae, which remains as appropriate today as when it was written in 1936:

Thus the explorations of space end on a note of uncertainty. And necessarily so. We are, by definition, in the very center of the observable region. We know our immediate neighbourhood rather intimately. With increasing distance, our knowledge fades rapidly. Eventually, we reach the dim boundary – the utmost limits of our telescopes. There, we measure shadows, and we search among ghostly errors of measurement for landmarks that are scarcely more substantial.

The search will continue. Not until the empirical resources are exhausted, need we pass on to the dreamy realms of speculation.

© Professor Carolin Crawford, 2015

This event was on Wed, 01 Apr 2015
