Brain-Computer Interfaces


Our brains are computers. What if we could enhance their processing power? Medical technology now allows for brain signals to be read and translated to reverse paralysis. Deep brain stimulation is also used to treat diseases such as Parkinson’s. Neural interfaces are already improving lives. How do they work? What’s next for our physical connection to digital technology? And what are the implications of having new hardware in our heads?

Any Further Questions? Podcast

Listen to our follow-up podcast with Professor Baines on Spotify and Apple Podcasts.


Brain-Computer Interfaces

Dr Victoria Baines

24 October 2023

WARNING: This transcript contains a description of clinical trials on animals that you may find upsetting.

A nine-year-old rhesus macaque named Pager plays the video game Pong. Motivated by a banana smoothie dispensed through a metal tube, he controls a joystick that moves an on-screen paddle to where he anticipates the ball will go. Over time, the joystick is disconnected, but Pager continues to play the game. A Neuralink device has been placed on each side of his brain. More than two thousand electrodes implanted in the regions of his motor cortex that coordinate hand and arm movements record signals from his neurons. These signals are decoded by an algorithm which has been trained on data collected when he was using the joystick. The algorithm models the relationship between the patterns of Pager’s neural activity and the joystick movements produced. This enables the prediction of his hand movements in real time. Pager can now play Pong with his mind alone.[1]
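The decoding step described above can be sketched as a simple linear decoder fitted by least squares. This is an illustration under stated assumptions, not Neuralink's algorithm, which the text does not specify: the 64 channels, the synthetic "tuning" that links hand velocity to firing rates, and the function names `fit_linear_decoder` and `decode` are all hypothetical, and real recordings are far noisier.

```python
import numpy as np

def fit_linear_decoder(rates, velocity):
    """Fit W and b so that velocity ≈ rates @ W + b, by ordinary least squares.

    rates:    (n_samples, n_channels) binned firing rates recorded while the
              joystick was still connected (the training data)
    velocity: (n_samples, 2) joystick x/y velocity in the same time bins
    """
    # Append a column of ones so the intercept b is fitted together with W.
    X = np.hstack([rates, np.ones((rates.shape[0], 1))])
    coef, *_ = np.linalg.lstsq(X, velocity, rcond=None)
    return coef[:-1], coef[-1]          # W: (n_channels, 2), b: (2,)

def decode(rates, W, b):
    """Predict paddle velocity from neural activity alone."""
    return rates @ W + b

# Synthetic demo with 64 hypothetical channels: each channel's rate depends
# linearly on hand velocity, plus a little noise.
rng = np.random.default_rng(0)
tuning = rng.normal(size=(2, 64))

train_vel = rng.normal(size=(1000, 2))                 # joystick connected
train_rates = train_vel @ tuning + 0.1 * rng.normal(size=(1000, 64))
W, b = fit_linear_decoder(train_rates, train_vel)

# Joystick disconnected: test_vel is the intended movement, used here only
# to score the decoder, which sees nothing but test_rates.
test_vel = rng.normal(size=(200, 2))
test_rates = test_vel @ tuning + 0.1 * rng.normal(size=(200, 64))
pred = decode(test_rates, W, b)

# Correlation between decoded and intended velocity on unseen data.
print(np.corrcoef(pred[:, 0], test_vel[:, 0])[0, 1])
```

The training phase corresponds to the period when the joystick was connected; the decode step corresponds to play after it was disconnected. Practical decoders often add temporal smoothing (for example a Kalman filter) on top of a linear core like this one.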

This is just one example of a Brain-Computer Interface (BCI) – in this case, one which records and interprets brain activity to control external hardware. It may sound like something from science fiction, but it builds on a body of research and experimentation that goes back at least as far as 1924 when German psychiatrist Hans Berger first recorded brain activity using electroencephalography (EEG). It relies on a fundamental integration of medical science and Information Technology, of hardware embedded in or overlaid on the human body with software running on machines. While research and development have initially been focused on medical applications, there is considerable interest in the potential of BCIs not only to repair humans but also to augment them.

Twenty-six years ago, the then-Gresham Professor of Physic, Susan Greenfield, asked in one of her lectures, “Is the brain a computer?”[2] Whereas the focus of her inquiry was consciousness – increasingly topical in light of recent developments in machine learning and generative AI – for this lecture we can at least observe that brains and computers are both processors. One might say that the brain is the Central Processing Unit (CPU) of the central nervous system. It is no coincidence that some of the most significant advances in computing in recent years seek to mimic the activity of the brain and nervous system. Artificial neural networks, which power machine learning and deep learning algorithms, are built from artificial ‘neurons’ that, in their simplest form, ‘fire’ when the weighted sum of their inputs exceeds a specified threshold.
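The threshold idea can be made concrete with the classic threshold unit (the perceptron-style neuron), which outputs 1 when the weighted sum of its inputs exceeds a threshold. This is a simplification: modern deep learning mostly uses smooth activation functions such as ReLU or the sigmoid rather than a hard step. The weights and threshold below are hand-picked for illustration, not learned.

```python
def threshold_neuron(inputs, weights, threshold):
    """Return 1 ('fire') if the weighted sum of inputs exceeds the threshold, else 0."""
    activation = sum(x * w for x, w in zip(inputs, weights))
    return 1 if activation > threshold else 0

# Hand-picked weights that make the unit behave like a logical AND gate:
# it fires only when both inputs are active (1.0 + 1.0 > 1.5).
weights = [1.0, 1.0]
threshold = 1.5
for a in (0, 1):
    for b in (0, 1):
        print(a, b, threshold_neuron([a, b], weights, threshold))
```

In a trained network the weights are adjusted automatically from data rather than set by hand, and many such units are stacked in layers.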

Repairing Humans with Tech

While some Brain-Computer Interfaces record brain activity, others stimulate the brain. Deep Brain Stimulation (DBS) is used to treat a range of movement disorders, most notably to control the tremors experienced by people with Parkinson’s disease. Electrodes connected to an implanted pulse generator deliver electrical pulses that disrupt the patterns of brain activity that generate the tremor. DBS is also used to treat some people whose chronic nerve pain has not responded to other remedies.

Responsive neurostimulation (RNS) describes a ‘closed-loop’ system in which electrodes both record and stimulate without external intervention. This makes it particularly suited to conditions that require a rapid response – for instance, interrupting an epileptic seizure. An increasing amount of research is also focused on possible applications of closed-loop BCIs to treat psychiatric illness, and on the potential for non-invasive interfaces using real-time functional Magnetic Resonance Imaging (fMRI) to serve as therapeutic tools in treating disorders such as schizophrenia. There is considerable interest in using BCIs to combat the effects of dementia and Alzheimer’s Disease – for instance, to improve neuronal plasticity or to help patients with cognitive decline to communicate. This is unsurprising, given that these are the leading causes of death in some countries. For other patients, such as stroke survivors, a restored ability to communicate has the potential to significantly improve quality of life by opening up opportunities to return to work.[3]
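The closed-loop principle can be sketched with a simple detector: the system continuously computes a feature over a sliding window of recorded signal and triggers stimulation, with no human in the loop, when that feature crosses a threshold. Line length (the sum of absolute differences between consecutive samples) is one feature used in the seizure-detection literature; the window size, threshold, synthetic signal, refractory behaviour, and `stimulate` callback here are all hypothetical and do not describe any commercial device.

```python
from collections import deque

def line_length(window):
    """Sum of absolute differences between consecutive samples in the window."""
    samples = list(window)
    return sum(abs(b - a) for a, b in zip(samples, samples[1:]))

def closed_loop(samples, window_size, threshold, stimulate):
    """Scan a stream of samples and call stimulate(t) whenever the line length
    of the most recent window exceeds the threshold.

    Returns the sample indices at which stimulation was triggered.
    """
    window = deque(maxlen=window_size)
    triggers = []
    for t, x in enumerate(samples):
        window.append(x)
        if len(window) == window_size and line_length(window) > threshold:
            stimulate(t)
            triggers.append(t)
            window.clear()  # hypothetical refractory period: refill the window before re-triggering
    return triggers

quiet = [0.1, -0.1] * 20   # low-amplitude background activity (40 samples)
burst = [5.0, -5.0] * 10   # high-amplitude oscillation standing in for seizure onset (20 samples)
signal = quiet + burst + quiet

events = closed_loop(signal, window_size=8, threshold=20.0,
                     stimulate=lambda t: print(f"stimulate at sample {t}"))
```

Stimulation fires only during the high-amplitude burst and not during background activity; a real device would tune detectors like this to each patient's recordings.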

There have also been landmark innovations in neuroprosthetics, the science of replacing parts of the nervous system with devices. Cochlear implants, which help people with hearing loss perceive sounds, are among the most widespread examples. The Swiss institute NeuroRestore has developed a ‘digital bridge’ that restores motor control of a patient’s legs after a spinal cord injury. Electrocorticography (ECoG) signals from the sensorimotor cortex are analysed by a processing unit, which predicts motor intentions and translates these predictions into electrical pulses that stimulate the spinal cord to activate the leg muscles. A machine learning model – in this case, a recursive, exponentially weighted Markov-switching multi-linear model – enables the decoding algorithm in the processing unit to recognise and interpret accurately the brain activity associated with leg control.[4]
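The core idea of a Markov-switching decoder can be illustrated in a heavily simplified, hypothetical form that is not the multi-linear model used by Lorach et al.: a hidden "rest" or "move" state evolves according to a transition matrix, the probability of each state is updated recursively as each new feature arrives (a hidden Markov model forward filter), and the output is a linear prediction gated by the probability of "move". The two states, the Gaussian emission parameters, the transition probabilities, the single scalar feature, and the linear weights are all assumptions for illustration.

```python
import math

# Hypothetical two-state model: index 0 = "rest", index 1 = "intend to move".
TRANSITION = [[0.95, 0.05],   # P(next state | current = rest)
              [0.10, 0.90]]   # P(next state | current = move)
# Hypothetical Gaussian model of one scalar neural feature in each state: (mean, sd).
EMISSION = [(1.0, 0.5), (3.0, 0.5)]
# Hypothetical linear map from the feature to a stimulation intensity.
WEIGHT, BIAS = 0.8, -0.5

def gaussian_pdf(x, mean, sd):
    return math.exp(-0.5 * ((x - mean) / sd) ** 2) / (sd * math.sqrt(2 * math.pi))

def filter_step(prior, feature):
    """One recursive forward-filter update of the state probabilities."""
    # Predict: push the previous probabilities through the transition matrix.
    predicted = [sum(prior[i] * TRANSITION[i][j] for i in range(2)) for j in range(2)]
    # Update: weight each state by how well it explains the new feature, then normalise.
    unnorm = [predicted[j] * gaussian_pdf(feature, *EMISSION[j]) for j in range(2)]
    total = sum(unnorm)
    return [u / total for u in unnorm]

def decode_sequence(features):
    """Return a (P(move), output) pair for each time step."""
    probs = [1.0, 0.0]  # assume the patient starts at rest
    results = []
    for f in features:
        probs = filter_step(probs, f)
        # Gate the (non-negative) linear prediction by the probability of "move".
        output = probs[1] * max(0.0, WEIGHT * f + BIAS)
        results.append((probs[1], output))
    return results

features = [1.1, 0.9, 1.2, 2.9, 3.1, 3.0, 1.0, 0.8]
for p_move, out in decode_sequence(features):
    print(f"P(move)={p_move:.2f}  output={out:.2f}")
```

Because the state probabilities carry over from one step to the next, a single noisy sample is less likely to switch the decoder on or off than it would be with a plain threshold.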

Where Medicine and IT Merge

BCIs that read brain signals typically comprise three basic processes: data acquisition, data processing, and device control. They rely on a combination of translational algorithms, which may be used at several points in the data processing stage. Once brain activity data has been acquired, it is pre-processed to clean it, separating the relevant signals from noise and other artefacts. A process known as dimensionality reduction then reduces the number of variables, for example by keeping a smaller subset of the most relevant channels. Feature extraction converts the raw brain signals into features of interest, such as the power of the signal in particular frequency bands. Feature selection pinpoints the subset of the extracted features that is most relevant to the function in focus. Classification then sorts the data into labelled classes or categories of information, and these patterns are translated into commands and controls for the output application or device.
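The stages listed above can be strung together in a toy pipeline. Every concrete choice here is an assumption for illustration rather than how any particular BCI works: synthetic 16-channel data, mean removal as pre-processing, keeping the highest-variance channels as dimensionality reduction, log-variance as the extracted feature, a Fisher-style score for feature selection, a nearest-centroid classifier, and a hypothetical `COMMANDS` table that translates classes into device commands.

```python
import numpy as np

rng = np.random.default_rng(1)
N_CHANNELS, N_SAMPLES = 16, 200
COMMANDS = {0: "rest", 1: "move left", 2: "move right"}  # hypothetical output mapping

def make_trial(label):
    """Synthetic trial: noise plus a DC offset on every channel; classes 1 and 2
    add an oscillation on channel 3 or channel 7 respectively."""
    x = rng.normal(size=(N_CHANNELS, N_SAMPLES)) + 2.0
    wave = 3.0 * np.sin(np.linspace(0, 20 * np.pi, N_SAMPLES))
    if label == 1:
        x[3] += wave
    elif label == 2:
        x[7] += wave
    return x

def preprocess(trial):
    """Pre-processing stand-in: remove each channel's mean (real systems also filter)."""
    return trial - trial.mean(axis=1, keepdims=True)

def reduce_channels(trials, k):
    """Dimensionality reduction: keep the k channels with the highest average variance."""
    avg_var = np.mean([t.var(axis=1) for t in trials], axis=0)
    return np.argsort(avg_var)[-k:]

def extract_features(trial, channels):
    """Feature extraction: log-variance of each retained channel (a crude band-power proxy)."""
    return np.log(trial[channels].var(axis=1))

def select_features(X, y, m):
    """Feature selection: keep the m features whose class means differ most
    relative to their within-class spread (a Fisher-style score)."""
    classes = np.unique(y)
    means = np.array([X[y == c].mean(axis=0) for c in classes])
    within = np.array([X[y == c].var(axis=0) for c in classes]).mean(axis=0)
    score = means.var(axis=0) / (within + 1e-12)
    return np.argsort(score)[-m:]

def fit_centroids(X, y):
    """Classification (training): store the mean feature vector of each class."""
    return {int(c): X[y == c].mean(axis=0) for c in np.unique(y)}

def classify(x, centroids):
    """Classification: assign the class whose centroid is nearest."""
    return min(centroids, key=lambda c: np.linalg.norm(x - centroids[c]))

# Training: labelled trials pass through every stage once.
labels = np.array([0, 1, 2] * 30)
train = [preprocess(make_trial(lbl)) for lbl in labels]
channels = reduce_channels(train, k=4)
X = np.array([extract_features(t, channels) for t in train])
selected = select_features(X, labels, m=2)
centroids = fit_centroids(X[:, selected], labels)

# Online use: a new trial flows through the same stages and becomes a command.
new_trial = preprocess(make_trial(2))
features = extract_features(new_trial, channels)[selected]
print(COMMANDS[classify(features, centroids)])
```

Real EEG pipelines typically use band-pass filtering, spatial filters such as common spatial patterns, and classifiers such as linear discriminant analysis, but the flow from raw signal to device command follows the same sequence.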

Wireless communication is also a key component of most BCIs. Neuralink uses Bluetooth to communicate with a phone or tablet. The NeuroRestore digital bridge is more complex, making use of a chain of wireless communication systems including Bluetooth, infrared, and radio frequency. This inevitably prompts us to consider the stability and security of those connections. Bluetooth is a short-range wireless technology, distinct from Wi-Fi, so two devices need to be in relatively close physical proximity to communicate. This means that a brain implant, for instance, need not be connected directly to the open Internet. Bluetooth is also designed to let devices pair easily with each other. Unless a user has restricted its discoverability, a device may therefore be open to pairing – and sharing information – with devices other than the one intended. Manufacturers of BCI hardware need to be able to ensure that device pairing is secure. They can also encrypt data in transit so that, even if it is intercepted, it cannot be unscrambled without the key. This does not, however, prevent access to the data if the other paired device – say, a patient’s mobile phone – is compromised. We also need to bear in mind that there will likely be cases where brain activity data does need to travel larger distances in real time over the Internet. In 2008, Duke University and the Japan Science and Technology Agency collaborated on a project in which a monkey in the US was able to make a humanoid robot in Kyoto walk using its brain signals alone.[5] Personal cybersecurity takes on a whole new level of importance when your physical well-being depends on it.
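To illustrate what encrypting data in transit involves, here is a minimal sketch using authenticated encryption (AES-GCM) from the third-party Python `cryptography` package. It assumes both devices already share a secret key from a secure pairing step, which is the hard part and is not shown; the packet layout, device ID, and the function names `seal` and `open_packet` are hypothetical. Bluetooth Low Energy also provides its own link-layer encryption after pairing; application-level encryption like this would be an additional layer.

```python
import os
import struct

from cryptography.exceptions import InvalidTag
from cryptography.hazmat.primitives.ciphers.aead import AESGCM

# Assumption: both ends already hold this 256-bit key, agreed during secure pairing.
key = AESGCM.generate_key(bit_length=256)
aead = AESGCM(key)

def seal(samples, device_id):
    """Encrypt a packet of (hypothetical) neural samples as 32-bit floats.
    The device ID is authenticated as associated data but not hidden."""
    plaintext = struct.pack(f"<{len(samples)}f", *samples)
    nonce = os.urandom(12)  # 96-bit nonce; must never repeat for the same key
    return nonce, aead.encrypt(nonce, plaintext, device_id)

def open_packet(nonce, ciphertext, device_id):
    """Decrypt and verify; raises InvalidTag if the packet was altered or the key is wrong."""
    plaintext = aead.decrypt(nonce, ciphertext, device_id)
    return list(struct.unpack(f"<{len(plaintext) // 4}f", plaintext))

nonce, ct = seal([0.5, -1.25, 2.0], b"implant-01")
print(open_packet(nonce, ct, b"implant-01"))  # → [0.5, -1.25, 2.0]

# An attacker who flips a single bit of the ciphertext is detected
# rather than having the altered packet silently accepted.
tampered = bytes([ct[0] ^ 1]) + ct[1:]
try:
    open_packet(nonce, tampered, b"implant-01")
except InvalidTag:
    print("tampering detected")
```

Authenticated encryption is used here because confidentiality alone is not enough for a device that acts on the data: a tampered reading or command is rejected rather than executed. None of this helps if the paired phone itself is compromised, since it holds the key.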

Wherever data is stored or processed, we must consider data protection and privacy. Brain signals are some of the most medically sensitive and personal data available. They are both critical to our functioning as individual humans and potentially indicative of our most private thoughts. We might assume that regulation of the processing of medical data applies only to healthcare providers. But increasingly, technology companies are collecting and processing this data. Neuralink is a commercial entity, not a hospital or university. Those of us who wear fitness trackers or who use apps that monitor our mental or reproductive health already share medically sensitive personal data with technology providers who are commercially driven. To some degree, there is a parallel in the pharmaceutical sector, where the service needs of patients and healthcare providers must be balanced against the commercial imperatives of drug developers and distributors. Regulation of medicines and medical devices is overseen by bodies such as the Food and Drug Administration (FDA) in the US, and the Medicines and Healthcare products Regulatory Agency (MHRA) and the National Institute for Health and Care Excellence (NICE) in the UK. As developments in IT have been incorporated into healthcare, these regulators have designated software, including AI and machine learning, as a medical device where it is used for medical purposes.[6] So, when Neuralink wanted to start trials of its brain implants on humans, it applied for approval from the FDA. Having received FDA clearance in May 2023, it announced just last month that an independent review board had approved recruitment for its first human trial, and it has issued an open invitation for people to join its patient registry.[7]

Human Augmentation

Perhaps inevitably, academic and commercial researchers have been working on possible applications of BCIs beyond medicine. Neuralink’s mission statement is to “create a generalized brain interface to restore autonomy to those with unmet medical needs today and unlock human potential tomorrow.” As the company’s Pong trial demonstrates, BCIs may have a role in the future of gaming. The Internet is awash with tips for gamers to improve their reaction times. Removing the mechanical component of the response, as in the case of Pager’s joystick being disconnected, raises a further question: would someone using a BCI to play a game entirely mentally be quicker than someone whose physical response relies to some degree on reflex? As BCI technology develops further, and if it becomes more affordable, we may well have a chance to find out. Given the financial rewards offered to elite gamers, and the fact that younger generations with a clear incentive may be rather less squeamish about having an invasive implant fitted, we can expect to see some demand for it.

Military and defence institutes have also invested in neurotechnological research. In addition to neuroprosthetics for injured veterans, potential military applications for BCIs include control of aircraft simulations and drone clusters using brain activity, but also the boosting of memory function and mood alteration.[8] A report made public by the US government in 2019 envisages the Cyborg Soldier of 2050 as a human/machine fusion with ocular enhancements to imaging, sight, and situational awareness, auditory enhancement for communication and protection, and direct neural enhancement of the human brain for two-way data transfer, including brain-to-brain communication.[9]

Non-invasive BCIs are already used in sports training to monitor focus, alertness, and reactions to various stimuli, and to identify room for improvement. As in gaming, visual reaction time is a key factor in an athlete’s speed of response. If athletes were to receive implants capable of augmenting their performance in real time, would this constitute an unfair advantage that would see them excluded from competing with non-augmented peers? One is put in mind of the debates surrounding Oscar Pistorius’ prosthetic legs and Caster Semenya’s intersex status, both of which were considered by some to give them unfair advantages. Might we see the evolution of a class of competition specifically for athletes enhanced by technology?

Musical composition is a field in which BCIs promise to democratise technical ability. The Encephalophone, developed by researchers at the University of Washington, translates EEG signals captured from the scalp into synthetic piano music.[10] Brainibeats generates musical output from the brain activity of two users wearing surface electrode caps.[11] The sound produced varies according to the degree of synchrony between the two users’ brain signals. At one level, these sound like opportunities to widen musical participation to people with no practical instrumental skill. For now, however, these applications are prohibitively expensive. As in other walks of life, the adoption of BCIs by those privileged enough to afford them may widen existing equity gaps. Ensuring that BCIs are affordable and accessible, and that there is seamless connectivity, will be a prerequisite for their widespread adoption, just as it will be for metaverse technologies.[12]
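The idea of turning synchrony between two people's brain signals into sound can be sketched as follows. The text does not say how Brainibeats measures synchrony or maps it to music, so the choice of Pearson correlation as the synchrony measure, the MIDI note range 48 to 84, and the synthetic sine-wave "brain signals" are all assumptions for illustration.

```python
import math

def pearson(x, y):
    """Pearson correlation between two equal-length signal windows (assumes non-constant signals)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def synchrony_to_note(r, low=48, high=84):
    """Hypothetical mapping: correlation in [-1, 1] to a MIDI note between low and high."""
    sync = (r + 1) / 2  # rescale correlation to [0, 1]
    return round(low + sync * (high - low))

# Two seconds of a 2 Hz oscillation sampled at 50 Hz, standing in for EEG.
t = [i / 50 for i in range(100)]
user_a = [math.sin(2 * math.pi * 2 * s) for s in t]
in_phase = [math.sin(2 * math.pi * 2 * s) for s in t]
anti_phase = [-v for v in user_a]

print(synchrony_to_note(pearson(user_a, in_phase)))    # highly synchronised: top of the range
print(synchrony_to_note(pearson(user_a, anti_phase)))  # anti-synchronised: bottom of the range
```

In practice, synchrony between brains is often measured per frequency band and over sliding windows, so the resulting sound would change continuously as the two users' activity drifts in and out of step.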

Hybrid Ethical Considerations

The United Nations Educational, Scientific and Cultural Organization (UNESCO) is currently developing an ethical framework for neurotechnology. Its Director-General, Audrey Azoulay, warned in June 2023:[13]

Neurotechnology could help solve many health issues, but it could also access and manipulate people’s brains, and produce information about our identities, and our emotions. It could threaten our rights to human dignity, freedom of thought and privacy. There is an urgent need to establish a common ethical framework at the international level, as UNESCO has done for artificial intelligence.

Now, it’s worth stating that there are existing legal regimes and ethical frameworks that separately cover the practice of medicine, medical devices, data protection, cybersecurity, and the use of Artificial Intelligence. All of these and more apply to neurotechnology, which is also subject to simultaneous consideration of bioethics, medical ethics, business ethics, and information/data ethics. At an operational level, it’s clearly unreasonable to expect a neurosurgeon also to be a hardware and software engineer. Teams of people with different skill sets and specialisms are required to make a BCI work effectively, safely, and securely. This technical collaboration will need to be complemented by a holistic approach to ethics that can draw on all relevant disciplines. We can already see alignment between them. For example, the Hippocratic Oath’s requirement on the medical practitioner to abstain from harm translates to the principle of non-maleficence in AI ethics regimes.

In closed-loop BCIs, where the processing of recorded brain signals may trigger brain stimulation without external human intervention, responsible development and use of AI will be crucial. There are already multiple frameworks for this, of which perhaps the most widely recognised is the set of principles issued by the Organisation for Economic Co-operation and Development (OECD). They are as follows:[14]

1.1. Inclusive growth, sustainable development and well-being

Stakeholders should proactively engage in responsible stewardship of trustworthy AI in pursuit of beneficial outcomes for people and the planet, such as augmenting human capabilities and enhancing creativity, advancing inclusion of underrepresented populations, reducing economic, social, gender and other inequalities, and protecting natural environments, thus invigorating inclusive growth, sustainable development and well-being.

1.2. Human-centred values and fairness

a) AI actors should respect the rule of law, human rights and democratic values, throughout the AI system lifecycle. These include freedom, dignity and autonomy, privacy and data protection, non-discrimination and equality, diversity, fairness, social justice, and internationally recognised labour rights.

b) To this end, AI actors should implement mechanisms and safeguards, such as the capacity for human determination, that are appropriate to the context and consistent with the state of the art.

1.3. Transparency and explainability

AI Actors should commit to transparency and responsible disclosure regarding AI systems. To this end, they should provide meaningful information, appropriate to the context, and consistent with the state of the art:

i. to foster a general understanding of AI systems,

ii. to make stakeholders aware of their interactions with AI systems, including in the workplace,

iii. to enable those affected by an AI system to understand the outcome, and,

iv. to enable those adversely affected by an AI system to challenge its outcome based on plain and easy-to-understand information on the factors, and the logic that served as the basis for the prediction, recommendation, or decision.

1.4. Robustness, security and safety

a) AI systems should be robust, secure, and safe throughout their entire lifecycle so that, in conditions of normal use, foreseeable use or misuse, or other adverse conditions, they function appropriately and do not pose unreasonable safety risks.

b) To this end, AI actors should ensure traceability, including datasets, processes and decisions made during the AI system lifecycle, to enable analysis of the AI system’s outcomes and responses to inquiry, appropriate to the context and consistent with the state of art.

c) AI actors should, based on their roles, the context, and their ability to act, apply a systematic risk management approach to each phase of the AI system lifecycle continuously to address risks related to AI systems, including privacy, digital security, safety and bias.

1.5. Accountability

AI actors should be accountable for the proper functioning of AI systems and for the respect of the above principles, based on their roles, the context, and consistent with the state of the art.

I have reproduced these in full so that you can see why they are especially important for the ongoing development of BCIs. We want all technology to be inclusive, human-centred, transparent, robust, and accountable. Any technology operating inside our bodies, particularly inside the powerhouse of our central nervous system and the home of our private thoughts, should be even more so. Accordingly, AI deployed in medical devices is classified as ‘high risk’ under the European Union’s proposed AI Act and would be subject to mandatory safety requirements.[15]

Of Invasion and Obsolescence

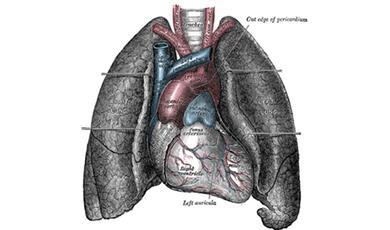

Different regions of the brain control different functions, as illustrated in Figure 2. Interference or stimulation in a specific region affects a specific function. Pager the rhesus monkey would not be able to play Pong with his mind if his implants were in a different part of his brain. In 1848, Phineas Gage, an American railroad construction worker, famously survived an accident in which an iron bar was driven completely through his left frontal lobe. In the twelve years between the accident and his death, he was a living exhibit at Barnum’s American Museum and worked as a stagecoach driver. Although he recovered physically to a large degree, observers reported that it was his behaviour that changed most markedly. While some of the contemporary depictions of his inappropriate conduct have since been called into question, Gage’s experience is a striking example of just how localised the effects of a brain injury can be.

In one sense, this is good news for those of us who may be worried that BCIs will enable someone to take over our thoughts, central nervous system, and physical movements all at once. In practical terms, there are much cheaper, tried-and-tested ways to influence our thoughts and behaviour using technology. As we have seen in our explorations of Fake News and cybercrime, disinformation, propaganda, online scams, and advertising are all flavours of social engineering – manipulation of our thought processes and beliefs to cause us to take a desired action or to influence others.[16] For the time being, at least, humans don’t need BCIs to engage in or experience mind control.

As the OECD principles on AI ethics emphasise, ensuring that cutting-edge technological solutions are sustainable and robust throughout their entire lifecycles is a priority. In the IT world, we are familiar with the idea that start-ups with killer products can go bust and quickly disappear without a trace. Operating systems, web browsers, and computer and mobile phone brands have come and gone. But what happens if obsolete technology is attached to, or even implanted in, your body? We have already seen cases of this.

In 2017, London-based company Cyborgnest launched NorthSense, a miniaturised circuit board designed to be permanently attached to the body like a piercing. By vibrating on the wearer’s skin, it told them when they were facing magnetic North. When the initial release sold out, the company described this as the birth of “a new cyborg community”.[17] The device has since been discontinued, and the company’s partially functioning website offers no support information. Then there are the more than three hundred and fifty visually impaired people who received Argus retinal implants, which gave them a degree of artificial vision.[18] In 2020, patients discovered that the developer, Second Sight, had run out of money and that their implants were no longer supported with updates, maintenance, or customer service. Three years later, there are reports that the acquisition of the company will at least mean that patients can source replacement parts.[19] Where an implant is simply nice to have and relatively easy to remove, as in the case of NorthSense, we may conclude that minimal harm has been done. On the other hand, patients whose quality of life is significantly improved by an implant, and who suddenly experience that artificial functionality ‘go dark’, may be at risk of serious physical or psychological injury. Ensuring, as far as possible, that the digital components of BCIs and other implantable technologies use open-source software, open hardware, and open standards is one way of increasing the likelihood that devices can be maintained even if a developer ceases to operate or support them.

We will all have our comfort levels about the idea of invasive, and even non-invasive, brain recording and stimulation. But Brain-Computer Interfaces are becoming more advanced and more widespread. Before too long, the questions “Would I have a brain implant for medical reasons?” and “Would I have a brain implant for any other reason?” will no longer be academic. For many of us, our experience of this technology is already very real and even essential.

© Professor Dr Victoria Baines 2023

Further Reading & Resources

Acharya, A. 2021. Brain and Brain-Computer Interfaces: A Beginner's Guide to Neuroscience and Technology. Independently published.

Chari A, Budhdeo S, Sparks R, Barone DG, Marcus HJ, Pereira EA, Tisdall MM. 2020. “Brain-Machine Interfaces: The Role of the Neurosurgeon”. World Neurosurgery. https://doi.org/10.1016/j.wneu.2020.11.028

Gay, M. 2015. The Brain Electric: The Dramatic High-Tech Race to Merge Minds and Machines. New York: Farrar, Straus and Giroux.

Gonfalonieri A. 2018. “A Beginner’s Guide to Brain-Computer Interface and Convolutional Neural Networks”. Towards Data Science. https://towardsdatascience.com/a-beginners-guide-to-brain-computer-interface-and-convolutional-neural-networks-9f35bd4af948

Shih JJ, Krusienski DJ, Wolpaw JR. 2012. “Brain-computer interfaces in medicine”. Mayo Clinic Proceedings 87(3): 268-279. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC3497935/pdf/main.pdf

Singh H, Daly I. 2015. “Translational Algorithms: The Heart of a Brain-Computer Interface”. In: Hassanien A, Azar A. (eds) Brain-Computer Interfaces. Intelligent Systems Reference Library, vol 74. Springer, Cham. https://doi.org/10.1007/978-3-319-10978-7_4

Takagi Y, Nishimoto S. 2023. “High-resolution image reconstruction with latent diffusion models from human brain activity”. Proceedings of the Conference on Computer Vision and Pattern Recognition. https://openaccess.thecvf.com/content/CVPR2023/papers/Takagi_High-Resolution_Image_Reconstruction_With_Latent_Diffusion_Models_From_Human_Brain_CVPR_2023_paper.pdf

Neuralink's technical information can be found here - https://neuralink.com/#mission

More information on the work of the NeuroRestore centre can be found here - https://www.neurorestore.swiss/

Neurotechedu is an enthusiast-run website containing e-learning modules and a GitHub list of resources. While it is not peer-reviewed, it does serve as a useful compendium. http://learn.neurotechedu.com/


[1] Neuralink (2021) “Monkey MindPong” - https://www.youtube.com/watch?v=rsCul1sp4hQ

[2] https://www.gresham.ac.uk/watch-now/exploring-brain-brain-computer

[3] UC San Francisco (2023) “How a Brain Implant and AI Gave a Woman with Paralysis Her Voice Back” - https://www.youtube.com/watch?v=iTZ2N-HJbwA

[4] Lorach et al. (2023) “Walking naturally after spinal cord injury using a brain-spine interface”, Nature 618: 126-133 - https://www.nature.com/articles/s41586-023-06094-5#Fig1

[5] https://www.scientificamerican.com/article/monkey-think-robot-do/

[6] https://www.fda.gov/medical-devices/software-medical-device-samd/artificial-intelligence-and-machine-learning-software-medical-device

[7] https://www.reuters.com/technology/musks-neuralink-start-human-trials-brain-implant-2023-09-19/

[8] https://www.darpa.mil/news-events/2018-03-28

[9] https://apps.dtic.mil/sti/pdfs/AD1083010.pdf

[10] https://vimeo.com/209771378

[11] https://dl.acm.org/doi/10.1145/3544549.3585910

[12] For more on the metaverse technologies of Virtual Reality, Augmented Reality, and Mixed Reality, see my lecture, “What is the Metaverse?” - https://www.gresham.ac.uk/watch-now/metaverse

[13] https://www.unesco.org/en/articles/unesco-lead-global-dialogue-ethics-neurotechnology?hub=85592

[14] https://legalinstruments.oecd.org/en/instruments/OECD-LEGAL-0449

[15] https://www.europarl.europa.eu/doceo/document/TA-9-2023-0236_EN.html

[16] https://www.gresham.ac.uk/watch-now/fight-fake; https://www.gresham.ac.uk/watch-now/cybersecurity-humans

[17] https://www.northsense.com/

[18] https://spectrum.ieee.org/bionic-eye-obsolete

[19] https://spectrum.ieee.org/bionic-eye

This event was on Tue, 24 Oct 2023
