What Really Happened in Y2K?

Share

- Details

- Text

- Audio

- Downloads

- Extra Reading

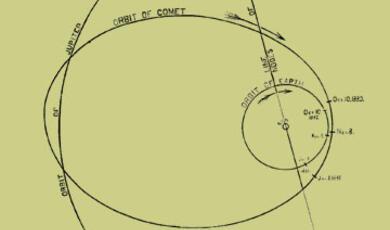

As the year 2000 ('Y2K') approached, many feared that computer programs storing years as two-digit values (such as 99 for 1999) would fail when the date rolled over. There is a widespread belief that the millennium bug was a myth, invented by rapacious consultants to make money. What was the truth? What, if anything, actually happened? Have we learnt the right lessons?

This event was on Tue, 04 Apr 2017