i-dentity

Identifying people is a fundamental skill and special areas of the brain are hard wired for recognising faces. Non-subjective artificial recognition of individuals has become increasingly important. The recent advent of computerisation for facial recognition can be used to identify a person on an image. More accurate identification can be obtained by analysis of the iris but this requires co-operation of the individual. Discrimination between people can also be undertaken remotely, by analysing their retinal images. Professor Marshall will describe these new techniques and their controversial applications.

I-dentity

Professor John Marshall

2/12/2009

The subject of this lecture is identity. I like to think of this as the 'eye' in the 'eye', because, in literature, the eye is often referred to as the window on the soul, and yet the eye is our window on the world. When we look at someone, the very first thing we look at, as I will demonstrate, is their eyes. Eyes are incredibly important in terms of social acceptance and social behaviour.

Eyes have a very long evolutionary history. In very primitive animals, there were light-sensitive spots at a very early time, and you can see these still in animals low down the evolutionary scale, such as fan-worms or jellyfish. These eyes operated by detecting changes in levels of light. So if a fish comes anywhere near the fan-worm, the fish will effectively cut out the light and the fan-worm will shrink and hide. Therefore, at a very early stage it was determined, as an evolutionary sequence, that the ability to detect light and set up reactions in response to either increasing light or absence of light was clearly very advantageous.

Even around 500 million years ago, we saw huge evolutionary adaptation in the arthropods, and, a little later, in the insects, with the development of compound eyes. These were very interesting eyes because they had a lens in front of the light-detecting elements. This evolutionary end point was reached very early on and has been perpetuated for millions of years in the insect domain.

But, great idea as it was, it would not be terribly helpful to us. The reason is that eyes of this type would have to be about the size of a human person in order to achieve something like a tenth of the acuity that you and I have with our form of eyes. So the size of the compound eyes you would need would be effectively unusable, because you would break your neck every time you turned your head.

But, fortunately, there was another developmental sequence, which again occurred very early on, in the molluscs, and now we see something which is more recognisable as the sort of eye that you and I have. In the pearly nautilus, the eye did not have a lens and was in contact with sea water, but by the time we got to larger developments, such as the squid, it has now got effectively an iris, a lens, and a retina, just like a vertebrate eye, except that the retina is not the same way round as the vertebrate eye. Even in the simple snail, you can see this is quite a complex organ.

Once evolution has hit on a good idea, it tends to carry it forward and perfect it. These sorts of eyes with lenses can really be split into two: the front of the eye, with the lens and the cornea, is concerned with forming an image; the back of the eye is where you have transduction of the image and interpretation of the image being sent back to the brain. So all vertebrates, from fish to amphibians to reptiles to birds and mammals, have an eye which is basically the same in terms of its component parts.

By the time you have a developed eye like ours, you now have something that is extremely useful in terms of your survival: fight or flight. Usually, complex eyes were also associated with complex ears, but if you actually look at the performance of these two, the eyes win hands-down. The eye's range of detection of light changes or image changes is miles. On a clear day, we can see the horizon, which is about eight or nine miles away, and you can see information on that horizon, so you can determine a very long way ahead whether you are going to run or not. Light travels at 186,000 miles per second. A laser will reach the Moon in 1.3 seconds. So it is a very fast radiation, and when we compare the eyes with the ears, the ears just cannot really compete. Their range is effectively only yards, and sound travels at only 770 miles per hour.
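The comparison between light and sound can be checked with a little arithmetic. The sketch below uses the speeds and the nine-mile horizon quoted above; the Earth-Moon distance is an assumed average figure that is not given in the lecture.

```python
# Figures quoted in the lecture
LIGHT_MILES_PER_SECOND = 186_000
SOUND_MILES_PER_HOUR = 770
HORIZON_MILES = 9

# Assumption (not from the lecture): average Earth-Moon distance in miles
MOON_DISTANCE_MILES = 238_855


def light_seconds(distance_miles: float) -> float:
    """Time in seconds for light to cover the given distance."""
    return distance_miles / LIGHT_MILES_PER_SECOND


def sound_seconds(distance_miles: float) -> float:
    """Time in seconds for sound to cover the given distance."""
    miles_per_second = SOUND_MILES_PER_HOUR / 3600
    return distance_miles / miles_per_second


if __name__ == "__main__":
    print(f"Light to the Moon:      {light_seconds(MOON_DISTANCE_MILES):.2f} s")
    print(f"Light from the horizon: {light_seconds(HORIZON_MILES) * 1e6:.0f} microseconds")
    print(f"Sound from the horizon: {sound_seconds(HORIZON_MILES):.0f} s")
```

On these figures, light from the horizon arrives in a few tens of microseconds, while sound over the same distance would take most of a minute, even if it could be heard that far away.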

Once we have eyes and ears they become very important when you start living in communities, because here you have got to identify your friends, because you do not want enemies associated with you, and it would be helpful if you could collectively send signals. So, you can either twitch or turn, and that would send a signal, or you can perhaps make a noise and that would make a signal. So, in community animals, the ability to identify friend or foe begins to become important. When you reach the developmental state of man, and man began to become a social animal, hunter gatherer, or even minimal agriculture, you not only want to be able to determine your friend and your foe, now your language skills have got to become more profound. You do not want one grunt for danger and two grunts for okay, because you are looking for the possibility of division of labour, collectively, and so you need to now develop a more sophisticated language.

These things are incorporated in the genes, and indeed in our brains. So all of us, when we are born, are hard-wired for language. A baby is hard-wired for language, but you can imprint on that wiring whatever language you want. So a child born of English parents but brought up with Chinese foster parents will speak fluent Chinese, and vice versa. It is similar with faces. There are elements in the brain that are hard-wired for facial recognition, and like the linguistic hard-wiring, you can imprint different information on that basic hard-wiring.

As human beings developed and became more social, they began to determine visual signals which were both recognition signals - e.g. I'm a member of your tribe - but were also differentiating signals - i.e. I'm a member of your tribe, but your tribe is different from other tribes - and so visual clues became, again, extremely important.

When larger groups developed, it became even more important. This is because you now want conformity and recognition of the group, and if you are in a battle, you want to know who you are going to bash over the head and who is not going to bash you over the head, so you need to be integrated in the group and, again, develop recognition amongst the group. If you are a smart guy, you also want, at a later stage, to have a degree of individuality, and so you will want to be seen as a part of the group but to be marked out as special in some way. A clear example of this is in the military, where there are different badges for rank.

Let us take another step and look at the problem of identification in towns. The larger the society and the bigger the group, the more complex the security in terms of recognising friend or foe. With the development of towns, this becomes even more complicated at night. You and I are exposed to a 24 hour cycle of light and dark: for approximately twelve hours, there is light and I can recognise you, and approximately twelve hours, there is dark and I cannot recognise you. So if I am living now in a community, security of that community becomes a particular problem at night.

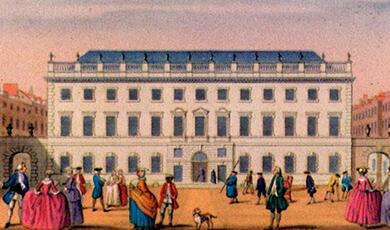

Thus we see, throughout Europe, the development of walled cities, and in cities such as London, the system of walls and gates became very complex, with a number of gates which were closed at night. It is fascinating to look back through history and see how, whilst I can still recognise you, things are fine, but when it gets dark, I do not want to know. If you actually look at English law you will see that crime of the night was recognised. If you were to kill a housebreaker, that is a stranger in your house during the day, you would be charged for killing that individual, because the law said that since it was daytime, you should have been able to recognise whether this was an intruder or not, and you should have been able to get help to restrain them. By contrast, at night-time, you could, certainly in 1766, kill a burglar with impunity, because you could not see who it was. It is in things like this that we see the importance of vision and recognition within our society.

This eventually led to a revolution in illumination in cities. Paris was one of the first to have communal street lighting, in 1667, and the other European capitals soon followed suit. London, by contrast, was one of the very first to use gas lighting, which was first used in Pall Mall. You would have thought that it would be thoroughly fantastic to have light so I can recognise everybody, but of course it led to problems. Being Pall Mall, the gentlemen of the day said it was restriction of their freedom, and the ladies of the night said it was a restriction of their trade.

But light is important and has continued to be important. Two hundred years ago we might have asked 'Do we need it?', but today the answer is clearly 'We can't do without it.' Light is really an important element in terms of security and identity now because, biologically, we cannot really do without it. The number of people whose voices we recognise is very limited.

So let us say we have got light. What do we do?

We can begin by looking at the work of a wonderful Russian physiologist called Yarbus, who was working in the 1930s, who devised a way of looking at eye movements in relation to objects.

If you look at the results that he found for the eye movements in scanning a face for one minute, you can see there is a concentration on the eyes and also on a spot a little bit down on the nose. If we extend that time, we see that the eye movements are constantly returning to the eyes and the nose, the central triangle of the face, with relatively few excursions round the edge. So, the first thing is facial recognition.

The brain is good, but you can fool it. There is an interesting example of this in a picture which shows a crowd. The picture is initially shown upside-down, at which point few people recognise anything special about the picture. However, when the picture is shown the right way up, people immediately recognise that John F Kennedy is a member of the crowd. It was the same information you have both times, but being upside-down, it was being presented to the brain in a geometric form that the brain could not recognise. So the system has to have information in a way that it can process it, but when it can, it is reasonably good.

Think of the problems of wanting to identify someone remotely. There is a famous picture of Anne of Cleves, which was painted by Holbein for Henry VIII, who loved the picture but absolutely hated the lady when she turned up. That was really the problem of the day, because, if you were a painter and spending hours with someone, you had to please the sitter, but at the same time, you had to please the patron, the person that was paying you, and you had to hit that balance, and many artists did not succeed. So this was data storage; it was the ability to store recognition information in one place and transfer it to another. Painting, in this way, was the beginning of transfer of identity information from point A to point B, and, on a temporal basis, over a period of time.

It was not until photography that we could have true mass storage. So now, we have the ability to trap a real image, keep it, transfer it and send it around. Facial recognition really led Allan Pinkerton of the Pinkerton Detective Agency to start collating a rogues' gallery, and this revolutionised security, because you could use these photographs as a very definite method of identifying suspects.

That was a great move, and it was really perfected by a Frenchman, Alphonse Bertillon, who developed a system of cards in 1879. So when the villain came in, they were photographed. There was a series of five measurements recorded on the cards for easy access: (1) head length; (2) head breadth; (3) length of the middle finger; (4) the length of the left foot; (5) the length of the "cubit" (the forearm from the elbow to the extremity of the middle finger). For thirty years, this was the system which was subserving security and identification.

It ran into a real problem when an individual was convicted and sent to prison, and a second individual in the prison was then identified who had exactly the same parameters on the Bertillon system and an almost identical photograph. It turned out they were identical twins. This really hammered the system, and it took a long time to recover.

Even with the modern equivalent of identikit, it is amazing how poor you and I are at describing an image and transferring it to a system for identification. Even with a good descriptor, verbal, it is very difficult to put it into pictures. One individual can give rise to any number of differing images created from descriptions people give of them.

But let us put faces on the backburner for a moment and turn instead to information transfer associated with an individual. If I have a message and I give it to you, you want to know: am I the person that should have had the message and given it to you, and is the message I give you the message that I was given? Again, there are parameters of identification in that.

If you go back in history, we can read about Histiaeus, who wanted to get a message to the Greeks in Ionia about Darius without Darius finding out. The story is that he shaved the head of one of his slaves, tattooed the message on the slave's head, and then waited until the slave's hair grew and sent him off. When he got there, he had his hair shaved, and clearly, he was the messenger and that was the message. But that was a bit dramatic, and not all of us have slaves. So, how did other individuals get round it?

In the past authenticity was shown with things such as signatures, seals, and, at a very early stage, fingerprints. In Babylon, 2000 BC almost, we had deliberate fingerprinting on documentation, to say this is the message and this individual that is delivering it is the correct person. If you look at Roman seals, they often were two-sided, with an emblem on one side and a fingerprint on the other. I do not know quite how they read these - they certainly had lenses so they could look at them, but I guess it was rather a case of subjective analysis.

But fingerprints were, in the early days, associated with illiteracy, and so there was a move to get away from them towards seals. We see seals throughout the legal system in this country, and many of them were actually imprinted with really elaborate signet rings. So, I have a message, and to say this message is from me, I imprint the image of my seal so that you know that this is real, and indeed, Sir Thomas Gresham, the founder of Gresham College, had that wonderful seal with the grasshopper.

But what about fingerprints? We see them in the remains of the Babylonian society of 2000 BC, but it was not until the 1800s that people began to think that these might be a very good way to identify a given individual. There were two major players in this field, Henry Faulds and Sir Francis Galton, who both claim it was really their idea. Faulds published a very interesting paper in 'Nature' in 1880 explaining how he did it, and Galton published a beautifully illustrated book, a little later, describing how he did it, but both of them came up with interesting algorithms to utilise fingerprints in order to recognise and identify an individual.

But fingerprints had problems. For instance, if you were to leave a fingerprint on a glass, someone could come along and lift it off using a piece of sellotape. They could then imprint that same fingerprint on whatever object they wanted, without your even knowing it, let alone being there. So the criminal fraternity knew that fingerprinting was a great idea, but that you could play with it.

Even now, forensic medicine is highly reliant on fingerprints. Why do I not like fingerprints as a method of identification? All of you here will have left your fingerprints somewhere in this lecture theatre. I could go round and collect them all and I could use them. So I do not like that element, and I do not really like the association with criminality, but nevertheless, until relatively recently, this was the ultimate identifier.

Now, during the last War, everyone was issued with identity cards. If you were a lowly citizen, it did not have a photograph. You just had your address, your signature and a number. But if you were slightly more important, not only did you have a photograph, but you also had your fingerprints. So, in the Second World War we were all registered, and people did not complain, but then, in wartime Britain, there were so many other things to complain about that this perhaps was not too much of a difficulty compared to others. Today, you and I all have identifiers: something you have, a credit card; something you can do, a signature; or something you know, a PIN code. This is quite a secure identifier, but it does not necessarily have a photograph on it.

I want to show you some shocking statistics. The UK has less than 1% of the world's population, but more than 20% of the world's CCTV cameras, most of which are facial recognition cameras. The worrying thing about this is that in the types of footage we've seen on the television from CCTV, even the criminals' own mothers would not recognise them! The resolution is so poor. But if you put a revenue stream in it, so high resolution, it will pick out the hairs in your nose. So we have the technology, but we are not using the technology, and it is an open question as to whether using the technology to the extent that it is currently spread around the country is entirely a good thing.

But where are we today, in our post-9/11 world of heightened awareness about security? Well, these are things that have been used and could be used, showing the various factors involved with each:

| Biometric | Accuracy | Error Rate | Ease of Acquisition | User Acceptance |
| --- | --- | --- | --- | --- |
| Signature | Medium/Poor | 1:50 | High | High/Medium |
| Voice | Medium/Poor | 1:50 | High | High |
| Face | Medium/High | ??? | High | High/Medium |
| Hand | High | 1:500 | High | Medium |
| Finger | High | 1:500 | High | Medium/Low |
| Iris | Very High | 1:131,000 | Medium | High |
| Retina | Highest | 1:10,000,000 | Medium | ??? |
| DNA | Highest | 1:10,000,000 | Low | Very Low |

Signatures have been used for a long time but their accuracy is only medium to poor because the error rate is one in fifty. They have a high ease of acquisition, because it is easy to get a signature, which makes the user acceptance of this method very high, especially since all of us sign documents all of the time. Voices, again, only have an accuracy of around one in 50, and the ease of acquisition and user acceptance are also high, as with signatures. With facial recognition, strangely enough, we do not have good scores for accuracy because the technology has changed dramatically recently. Again, it is very easy to get an image of a face, and we are not too unhappy about being photographed. Hands were used in the Australian Olympics to identify people. This was because they were easy to get, only about one in 500 were poor identifiers because of smudged and spoilt images, and user acceptance was medium. Strangely enough, fingerprints are not that accurate, and they have medium to low acceptance because people do not like touching things other people have touched, and they do not like being thought of as criminal.

But the more recent additions to this list include irises, and you might have seen the sort of parrot channel at Heathrow where people go and peck at the box, moving their heads backwards and forwards, but it is very high in terms of user acceptance, and it is pretty good in terms of its accuracy. Retina was not on the shopping list because we had not developed it when the Government were looking, but it is incredibly accurate, with very low data storage. It was quite difficult until relatively recently to grab an image, and we do not know about user acceptance of it because it is still very new, so we do not know if people are going to be happy with things shining into their eye.

But probably the best method of identification that we have is DNA. However, unfortunately its ease of acquisition is very low. If you go into Heathrow and you have to give a blood sample or a lick, you would be very unhappy unless everybody is going to have it.

So, what are we doing now? Well, we now have the so-called digital passport, which has a chip in it which is storing biometric information. Currently, these store face, fingerprints, and in some cases, iris details, but I think what the Government will eventually want is as many sub-sets of information to eliminate the possibility of fraud as soon as they feel happy with the technology. The real problem here is extracting the data. If you go through Passport Control and present your digital passport, this chip will give all your data, and you need a fingerprint or a facial recognition to determine you are the person related to in the passport. The bigger problem is: 'Who am I?' You try to go through a security checkpoint with no card to say 'Am I me?', now you are one in billions, and the thing has to search, and it is not like a usual computer searching system which can search by categories. Here, you have got a biometric, and all of us have roughly the same faces, roughly the same fingerprint data, roughly the same eyes, so searching that data format is incredibly difficult since it needs to effectively go through all of them every time.
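The difference between 'Am I the person on this passport?' and 'Who am I?' can be sketched in code. This is a toy illustration only: the `Template` type, the `distance` measure and the threshold are hypothetical stand-ins for a real biometric matcher, and `expected_false_matches` assumes that a quoted error rate of one in N can be read as the chance of a false match per comparison, which the lecture does not state.

```python
from typing import Dict, Optional, Sequence

# Hypothetical: a biometric reduced to a short list of numbers
Template = Sequence[int]


def distance(a: Template, b: Template) -> int:
    """Toy dissimilarity measure: sum of absolute differences."""
    return sum(abs(x - y) for x, y in zip(a, b))


def verify(claimed_id: str, sample: Template,
           db: Dict[str, Template], threshold: int) -> bool:
    """1:1 check against the single record named by the passport chip."""
    stored = db.get(claimed_id)
    return stored is not None and distance(sample, stored) <= threshold


def identify(sample: Template, db: Dict[str, Template],
             threshold: int) -> Optional[str]:
    """1:N search with no claimed identity: every record is compared."""
    best_id, best_dist = None, threshold + 1
    for person_id, stored in db.items():
        d = distance(sample, stored)
        if d < best_dist:
            best_id, best_dist = person_id, d
    return best_id


def expected_false_matches(population: int, one_in: int) -> float:
    """Expected chance matches when one sample is searched against everyone."""
    return population / one_in


if __name__ == "__main__":
    db = {"alice": [10, 20, 30], "bob": [11, 19, 33]}
    sample = [10, 21, 30]
    print(verify("alice", sample, db, threshold=2))   # one comparison
    print(identify(sample, db, threshold=2))          # len(db) comparisons
    print(expected_false_matches(1_000_000, 500))     # 1:500 error rate
```

Verification costs one comparison however large the database is, whereas identification grows with the number of people enrolled, and so does the number of chance matches at any fixed error rate.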

But already we have seen sophisticated imaging systems which will grab faces. We have seen very sophisticated fingerprinting systems, and even to the extent the police now have portable systems at the roadside. So, they are here.

It would be very interesting if there were some form of DNA profiling because we all know that is absolutely the best. In fact, it may not be too far into the future here, because of the moisture in your fingerprint from which we can already determine certain elements. In fact, there has been a case where an individual gave a signal for cocaine and was arrested from his fingerprint, and it will not be too long before we can do that with DNA from a fingerprint. We can almost already do it from licking a stamp on a letter.

But, to get back to our beginning, the 'eye' in the 'eye'. Many of you will have seen the iris systems at various airports. They are not very fast because of the technology and people's interaction with it. But we now have a system that will take your iris from twenty metres, and at thirty miles an hour, so technology moves on.

The iris, again, is a system that has been around for some time. The same Alphonse Bertillon, as part of his classification, included iris colour, but it really was not until the 1990s, when John Daugman developed his algorithms, that we really had the potential to use the iris as a good recognition system. Now, the problem with irises is that they come in different colours, and in response to light, emotion, etc. they move, and so it is very difficult to use an organ which is changing in size and shape as a biometric, but John actually developed a system. The system is based on wavelet mathematics, which takes advantage of a dimensional analysis which looks at these iris beams in a spaceless and timeless dimension. It just says, well, these have got to be roughly in these sorts of places, and I will get a pattern out of that system. It is around 520 bytes of information.
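The lecture does not describe how two iris patterns are compared once they have been reduced to a few hundred bytes. One common way to compare fixed-length binary codes is the fraction of bits that differ (a normalised Hamming distance), and the sketch below uses that as an assumption. The 520-byte size comes from the text; the random codes, the noise level and the 0.32 decision threshold are illustrative choices, not values from the lecture.

```python
import random

CODE_BYTES = 520  # size quoted in the lecture


def hamming_fraction(code_a: bytes, code_b: bytes) -> float:
    """Fraction of bits that differ between two equal-length codes."""
    if len(code_a) != len(code_b):
        raise ValueError("codes must have the same length")
    differing = sum(bin(a ^ b).count("1") for a, b in zip(code_a, code_b))
    return differing / (8 * len(code_a))


def same_iris(code_a: bytes, code_b: bytes, threshold: float = 0.32) -> bool:
    """Declare a match when few enough bits differ (threshold is illustrative)."""
    return hamming_fraction(code_a, code_b) < threshold


def flip_bits(code: bytes, n_bits: int, rng: random.Random) -> bytes:
    """Simulate capture noise by flipping n_bits distinct bit positions."""
    out = bytearray(code)
    for pos in rng.sample(range(8 * len(code)), n_bits):
        out[pos // 8] ^= 1 << (pos % 8)
    return bytes(out)


if __name__ == "__main__":
    rng = random.Random(0)
    enrolled = bytes(rng.getrandbits(8) for _ in range(CODE_BYTES))
    second_visit = flip_bits(enrolled, 400, rng)   # same eye, noisy capture
    stranger = bytes(rng.getrandbits(8) for _ in range(CODE_BYTES))
    print(same_iris(enrolled, second_visit))
    print(same_iris(enrolled, stranger))
```

Two unrelated random codes differ in about half their bits, so a noisy recapture of the same code sits far below the threshold while a stranger's code sits well above it.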

However, it had real problems, and it had problems in relation to ethnicity. In trials the failure rate for the irises of black people was twice that for white people. In the first attempt, the parliamentary biometric, this difference was that between 20% and 40% failure rate, but even in the second attempt it was the difference between 9% and 20%. This is the case because if you have got too much pigment it is difficult to differentiate between the iris and the pupil. So this is still a difficult dataset to collect.

In the early days of using this as an identification method, you could also cheat, by wearing coloured contact lenses. Now, we actually need the little twitch of the iris to verify that it is a live eye, so you cannot do that anymore. But you could do this at the beginning.

It is currently being used in Fallujah to try and identify the 'bad guys'. Currently this means that you have to bring them into your test centre, but with the newer systems that we have now been developing, you can do this in a car or anywhere else.

So iris is pretty good, and it is pretty good even with a moving individual and at a distance. You do not have to touch anything to get the identification.

But let us take it a little bit further. We are all familiar with cats' eyes. Why do cats' eyes light up when we drive at night? It is because some of the light - about 5% - falls on the retina and comes out again. Everybody has taken a wedding photograph where the eyes have gone red in relation to a flash. If you look at that red image with a special camera, that red flash is because the light is coming from the retina, and what you are looking at is the head of the optic nerve and blood vessels.

This was first viewed by Helmholtz, who devised a device which was basically a lens with a mirror, and he was able to shine light from a candle into the eye, back through his system, and view the inside of the eye. This allowed you to see the optic nerve, blood vessels and any haemorrhages that may be there. It was not really until after the Second World War that the Germans began to develop cameras for photographing the back of the eye, instead of painting what one saw. These cameras are readily available today, with various degrees of sophistication, but I am afraid that they are rather expensive and you need someone who is quite used to doing it to grab the image.

With one of my PhD students, my idea was to develop a system that does not need drops and is very inexpensive and very portable. Eventually we came up with a scanning laser ophthalmoscope, which does effectively the same thing as the camera. It consists of two main parts: the data storage, which clips on your belt, and a head-up display system so you can view the image. It looks something like a very large pair of glasses, with a wire coming off it to a small box that can fit in the palm of your hand. With that degree of sophistication, you get quite a good image.

Having produced this, because I wanted to give it to optometrists, I suddenly thought that although they can now get images, they will not know what the images mean. So we then set about looking for some software to analyse the images. This meant that we had to go back and look at data retrieval. If you look at the basic image of the same eye from two separate visits, perhaps only weeks apart, it can look like a different eye. This is particularly the case with the colours that the eye seems to be, which is important as that should depend on ethnicity more than anything else. So the first thing that we decided to do was to arrange a computer system which pre-processed the images and brought them all to a norm level of colour. Then we thought that we did not really need the colour, and so we began to process for elements within the image. In early versions of the task we tried just to detect the blood vessels, but, eventually, we ended up with a system which would identify retinal pathologies, and from that, a little system would flash up: 'Okay,' green light, 'Don't understand this,' amber light, 'send them to an ophthalmologist,' red light.
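The two steps described here, bringing images to a common colour level and then flashing a traffic light, can be sketched as follows. This is not the software described in the lecture: the per-channel rescaling, the `pathology_score` input and both thresholds are hypothetical, chosen only to show the shape of such a pipeline.

```python
from typing import List, Tuple

Pixel = Tuple[float, float, float]  # (red, green, blue)


def normalise_colour(image: List[Pixel], target_mean: float = 128.0) -> List[Pixel]:
    """Rescale each colour channel so that its mean equals target_mean.

    Values are kept as floats and are not clipped, so two captures that
    differ only by a per-channel brightness factor normalise to the same image.
    """
    n = len(image)
    means = [sum(p[c] for p in image) / n for c in range(3)]
    gains = [target_mean / m if m > 0 else 1.0 for m in means]
    return [(p[0] * gains[0], p[1] * gains[1], p[2] * gains[2]) for p in image]


def triage(pathology_score: float, low: float = 0.2, high: float = 0.6) -> str:
    """Map a hypothetical 0-1 pathology score to the three lights."""
    if pathology_score < low:
        return "green"   # 'Okay'
    if pathology_score < high:
        return "amber"   # 'Don't understand this'
    return "red"         # 'Send them to an ophthalmologist'


if __name__ == "__main__":
    visit_1 = [(200.0, 100.0, 50.0), (100.0, 50.0, 25.0)]
    visit_2 = [(100.0, 80.0, 60.0), (50.0, 40.0, 30.0)]  # same eye, different cast
    print(normalise_colour(visit_1))
    print(normalise_colour(visit_2))
    print(triage(0.1), triage(0.4), triage(0.9))
```

In the example the two visits differ only in overall colour cast, so after normalisation they come out as the same image to within rounding, which is the property the pre-processing step is meant to provide.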

But having changed the design of the camera system, it now became so inexpensive I could not see how we could make any money out of it, frankly, so I thought that there must be something else we can do with a system like this, and then I thought about the optic nerve.

When you look at people's optic nerves, they are all different. The beauty of the optic nerve is that it passes through part of the skull to get out of the orbit, so this is the part of the eye that moves least. It is very simple to arrange a camera that always images this. So that was good, and it looked as though, from looking at those images, everyone there was different. Or, so I thought, until, like Bertillon, I came across identical twins.

Now, at St Thomas' Hospital, we have the biggest collection of identical twins in the world, which gave us a great opportunity to do a lot of good research on this. What we found from this is that, even though the optic nerve is the same between identical twins, their blood vessels are totally different around the optic nerve. So what we needed next was to devise a system that extracted information from this. The way we did this was to create a barcode from the blood vessels. We had a line per blood vessel, with the width of the line proportional to the width of the blood vessel, and the space between the lines proportional to the space between the blood vessels. This gave us a wonderful system which required limited data storage. This meant that it had the great advantage of not taking long to say which eye is the unknown eye. So this was a great system: it was very simple, required very small amounts of data storage, and it was very accurate.
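The barcode idea can be sketched in a few lines. The representation below, a list of (gap, width) pairs built from vessel positions measured along a scan around the optic nerve, and the simple distance used to compare two barcodes are assumptions made for illustration; the lecture describes the principle but not the data format or the matching rule.

```python
from typing import Dict, List, Tuple

Vessel = Tuple[float, float]    # (start position along the scan, width), hypothetical units
Barcode = List[Tuple[float, float]]  # (gap before the line, line width)


def to_barcode(vessels: List[Vessel]) -> Barcode:
    """One line per vessel: line width follows vessel width, gaps follow spacing."""
    barcode: Barcode = []
    previous_end = 0.0
    for position, width in sorted(vessels):
        barcode.append((position - previous_end, width))
        previous_end = position + width
    return barcode


def barcode_distance(a: Barcode, b: Barcode) -> float:
    """Sum of differences in gaps and widths; infinite if the line counts differ."""
    if len(a) != len(b):
        return float("inf")
    return sum(abs(ga - gb) + abs(wa - wb) for (ga, wa), (gb, wb) in zip(a, b))


def best_match(unknown: Barcode, enrolled: Dict[str, Barcode]) -> str:
    """Return the enrolled name whose barcode is closest to the unknown eye."""
    return min(enrolled, key=lambda name: barcode_distance(unknown, enrolled[name]))


if __name__ == "__main__":
    enrolled = {
        "twin_a": to_barcode([(2, 1), (6, 2), (11, 1)]),
        "twin_b": to_barcode([(1, 2), (7, 1)]),
    }
    unknown = to_barcode([(2.1, 1), (6, 2.1), (11.2, 1)])  # noisy recapture
    print(enrolled["twin_a"])
    print(best_match(unknown, enrolled))
```

Each eye is reduced to a handful of numbers, which is why storage is small and comparison against an enrolled set is quick.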

But, when you go to the marketplace, you have to say 'what do you know?' and 'what am I trying to sell you?' So, the next question was whether we could combine the two. Could we devise a camera which, at one and the same time, had a focused image of the iris and a focused image of the optic nerve? The answer was yes, and, in high security places, we can also make it bilateral, do both eyes at once. This meant that it could do both eyes - both irises and both retinas - and now you have got a system which is as good as DNA.

Now, can you defeat this system? What about if I took the eye out? We know that in certain countries where thumb prints were being used as election identifiers, people cut off the thumb and put them in formalin, so you could use someone else's thumb to access.

Returning to history a moment, the Gunpowder Plot was quite a malicious plot to blow up Parliament, and the primary culprits involved in the plot were caught, hanged, drawn and quartered. But in the Catholic community, there were large numbers of other individuals who were caught up in this. One of them was Edward Oldcorn, who was executed a year after the Gunpowder Plot. Whilst he was being beheaded, the story was that his right eye popped out and someone picked it up. They were very careful about making sure that people didn't get relics, but nevertheless, we have his right eye. It was mounted in a silver casket and is today at Stonyhurst College. But if you get to see it, it will show that eyes go off very badly and that they go off very quickly. So trying to use someone else's eye as identification is just not going to work!

©Professor John Marshall, Gresham College 2009

This event was on Mon, 02 Nov 2009
